When you’re investigation and planning large repositories for data (backups, archive, file servers, ISO/VHD stores, …) and you’d like to leverage Windows Data Deduplication you have too keep in mind that the maximum supported size for an NTFS volume is 64TB. They can be a lot bigger but that’s the maximum supported. Why, well they guarantee everything will perform & scale up to that size and all NTFS functionality will be available. Functionality on like volume shadow copies or snapshots. NFTS volumes can not be lager than 64TB or you cannot create a snapshot. And guess what data deduplication seems to depend on?

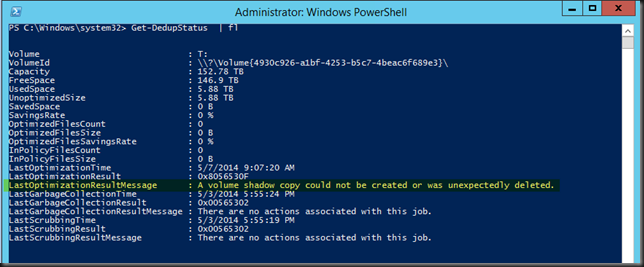

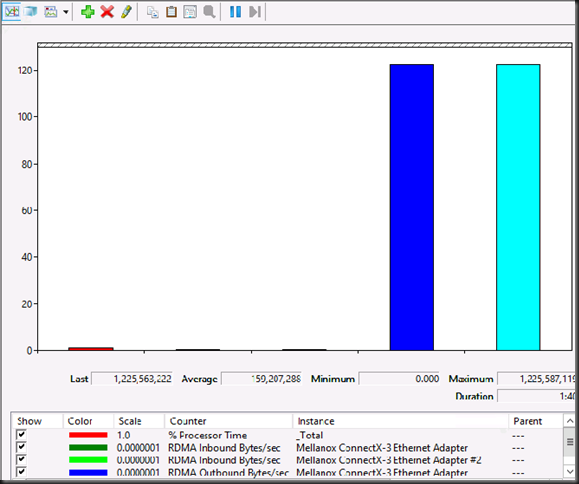

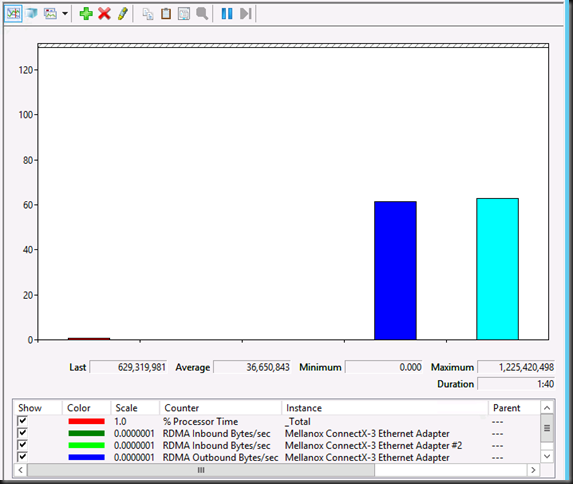

Here’s the output of Get-DedupeStatus for a > 150TB volume:

Note “LastOptimizationResultMessage : A volume shadow copy could not be created or was unexpectedly deleted”.

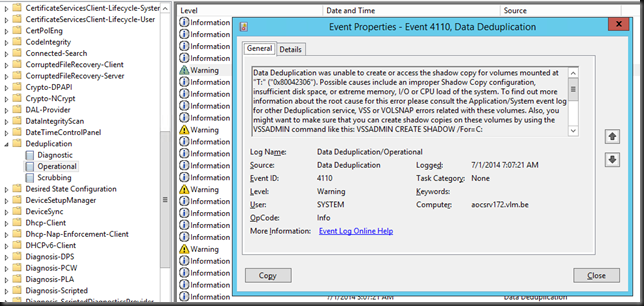

Looking in the Deduplication even log we find more evidence of this.

Data Deduplication was unable to create or access the shadow copy for volumes mounted at "T:" ("0x80042306"). Possible causes include an improper Shadow Copy configuration, insufficient disk space, or extreme memory, I/O or CPU load of the system. To find out more information about the root cause for this error please consult the Application/System event log for other Deduplication service, VSS or VOLSNAP errors related with these volumes. Also, you might want to make sure that you can create shadow copies on these volumes by using the VSSADMIN command like this: VSSADMIN CREATE SHADOW /For=C:

Operation:

Creating shadow copy set.

Running the deduplication job.

Context:

Volume name: T: (\?Volume{4930c926-a1bf-4253-b5c7-4beac6f689e3})

Now there are multiple possible issues that might cause this but if you’ve got a serious amount of data to backup, please check the size of your LUN, especially if it’s larger then 64TB or flirting with that size. It’s temping I know, especially when you only focus on dedup efficiencies. But, you’ll never get any dedupe results on a > 64TB volume. Now you don’t get any warning for this when you configure deduplication. So if you don’t know this you can easily run into this issue. So next to making sure you have enough free space, CPU cycles and memory, keep the partitions you want to dedupe a reasonable size. I’m sticking to +/- 50TB max.

I have blogged before on the maximum supported LUN size and the fact that VSS can’t handle anything bigger that 64TB here Windows Server 2012 64TB Volumes And The New Check Disk Approach. So while you can create volumes of many hundreds of TB you’ll need a hardware provider that supports bigger LUNs if you need snapshots and the software needing these snapshots must be able to leverage that hardware VSS provider. For backups and data protection this is a common scenario. In case you ask, I’ve done a quick crazy test where I tried to leverage a hardware VSS provider in combination with Windows Server data deduplication. A LUN of 50TB worked just fine but I saw no usage of any hardware VSS provider here. Even if you have a hardware VSS provider, it’s not being used for data deduplication (not that I could establish with a quick test anyway) and to the best of my knowledge I don’t think it’s possible, as these have not exactly been written with this use case in mind. Comments on this are welcome, as I had no more time do dig in deeper.