Introduction

With Windows Server 2016 came the hope and promise of improved backups for Hyper-V environments. And indeed Microsoft delivered on that and has given us faster, more scalable and more reliable backups. With VHD Sets also came the promise of host-based backups for guest clusters.

The problem is that this promise or, to put it more mildly and carefully, this expectation has not been met. Decent, robust host-based backups of guest clusters in Windows Server 2016 are still not a reality. For me this has blocked a few scenarios and we’re working on alternatives. I think this is a missed opportunity for Microsoft to excel at virtualization.

The problem

Host-based backup of guest clusters with VHD Set disks is supported in Windows Server 2016 under certain conditions.

At RTM it became clear that CSV inside the guest cluster was not supported.

You need a healthy cluster with all disks online.

These requirements are reflected in “Errors discovered during backup of VHDS in guest clusters”:

Error code: ‘32768’. Failed to create checkpoint on collection ‘Hyper-V Collection’

Reason: We failed to query the cluster service inside the Guest VM. Check that cluster feature is installed and running.

Error code: ‘32770’. Active-active access is not supported for the shared VHDX in VM group

Reason: The VHD Set disk is used as a Cluster Shared Volume. This cannot be checkpointed

Error code: ‘32775’. More than one VM claimed to be the owner of shared VHDX in VM group ‘Hyper-V Collection’

Reason: Actually we test if the VHDS is used by exactly one owner. So having 0 owners also creates this error. The reason was that the shared drive was offline in the guest cluster.
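If you parse backup job logs in a script, the three error codes above fit in a small lookup table. The Python sketch below is only an illustration: the codes and explanations come from the list above, while the names `VHDS_BACKUP_ERRORS` and `explain_backup_error` are hypothetical and not part of any Microsoft or backup vendor API.

```python
# Hypothetical helper: maps the checkpoint error codes listed above
# to their cause and a first thing to check.
VHDS_BACKUP_ERRORS = {
    32768: (
        "Failed to query the cluster service inside the guest VM.",
        "Check that the cluster feature is installed and running in the guest.",
    ),
    32770: (
        "The VHD Set disk is used as a Cluster Shared Volume.",
        "A CSV inside the guest cluster cannot be checkpointed.",
    ),
    32775: (
        "The VHD Set does not have exactly one owner (zero owners also triggers this).",
        "Check that the shared disk is online in the guest cluster.",
    ),
}


def explain_backup_error(code: int) -> str:
    """Return a one-line explanation for a known error code."""
    entry = VHDS_BACKUP_ERRORS.get(code)
    if entry is None:
        return f"Error {code}: not one of the VHD Set checkpoint errors listed here."
    cause, hint = entry
    return f"Error {code}: {cause} {hint}"
```

Calling `explain_backup_error(32775)`, for example, returns the ownership explanation together with the hint to check whether the shared disk is online.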

Unfortunately, these are not the only problems people are facing. Quite often the backup software doesn’t support backing up VHD Sets, or when it does, the backups fail. Some of those failures, like being unable to checkpoint the VHD Set, have been addressed via Windows Updates. But there are other issues.

Let’s look at the two most common ones.

Issue 1

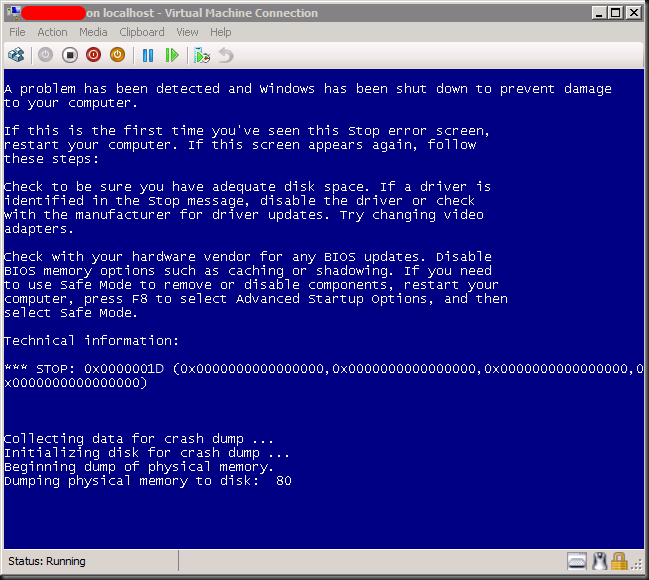

You can make one backup and all subsequent backups fail. This is due to the avhdx files being in use and locked. This means that as long as the cluster is up and running the recovery checkpoint chain keeps growing. This can be “cleaned” or merged but only by taking down the cluster.

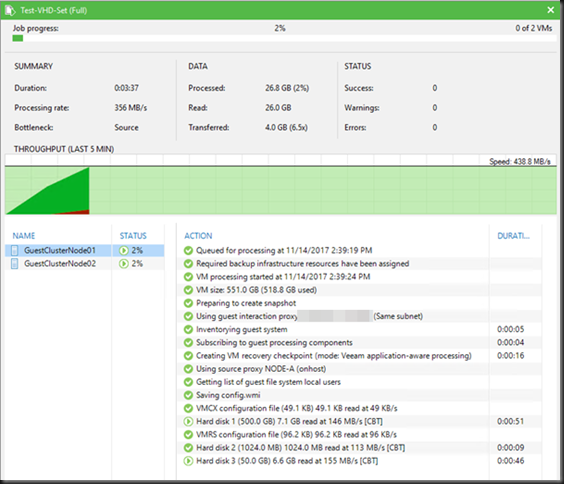

After the first backup, life seems good.

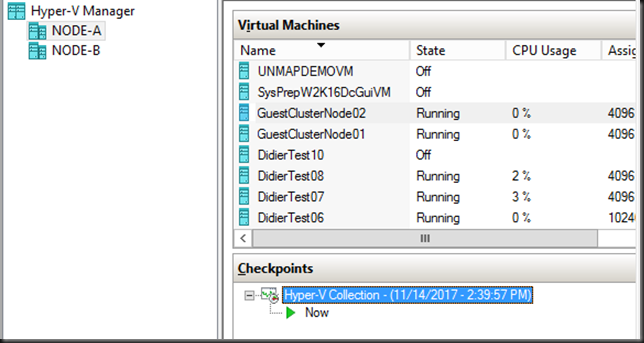

The recovery checkpoint as a collection is indeed working.

All attempts at another backup fail.

Shutting down all cluster VMs and starting them up again does merge the recovery checkpoints.

Issue 2

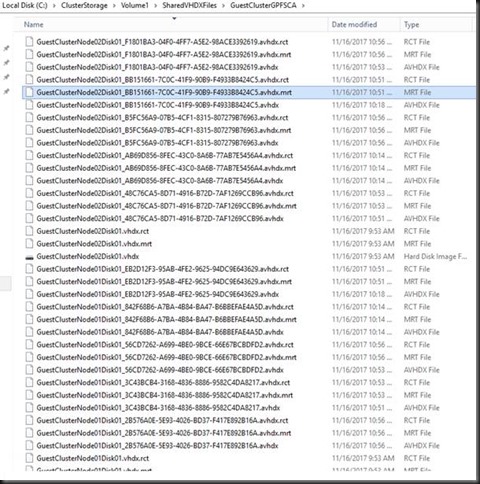

You can make backups successfully, but the recovery checkpoints never get merged.

This sounds “better” but it isn’t. There is no way to merge the checkpoint. Manually merging the checkpoints of a VHD Set is bad voodoo.

Both situations get you into problems and I have found no solution so far. At the time of writing I’m back at the “never ending” recovery checkpoint chain situation. But that can change back to the first issue I guess. Sigh.

I have found no solution so far

For now I have been unable to solve these problems. There is no fix or even a workaround. The only way to get out of this stalemate is to shut down every node of the guest cluster and then start them all again. Just restarting the guest nodes of the cluster doesn’t do the trick of releasing the checkpoint files and merging them. While this allows you to take one successful backup again, the problem returns immediately. For your reference, that was my issue with the October 2017 CU (KB)

The other scenario we run into is that the backups do work but the recovery checkpoints never ever merge. Not even when you shut down all the guest cluster VM nodes and start them again. With frequent backups that turns into a disaster of a never ending chain of recovery checkpoints. This is actually the situation I was in again after the November 2017 updates on both guests & hosts (KB4049065: Update for Windows Server 2016 for x64-based Systems and KB4048953: 2017-11 Cumulative Update for Windows Server 2016 for x64-based Systems).
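While there is no fix, it is at least worth keeping an eye on how many checkpoint files pile up. Here is a minimal Python sketch, assuming the recovery checkpoint differencing disks show up as `.avhdx` files in the folder that holds the VHD Set. The function names and the threshold are made up for illustration, and a plain file count is only a rough proxy for the length of the chain.

```python
from pathlib import Path


def count_avhdx_files(folder: str) -> int:
    """Count .avhdx files directly inside the given folder (case-insensitive)."""
    return sum(
        1
        for entry in Path(folder).iterdir()
        if entry.is_file() and entry.suffix.lower() == ".avhdx"
    )


def chain_too_long(folder: str, threshold: int = 5) -> bool:
    """Return True when the folder holds more .avhdx files than the (arbitrary) threshold."""
    return count_avhdx_files(folder) > threshold
```

You could run something like this from a scheduled task on the host, against the folder where your VHD Set lives, and raise an alert when `chain_too_long` returns `True`.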

To me this situation is blocking the use of guest clustering with VHD Sets where a backup is required. For many reasons we do not wish to go the route of iSCSI or vFC to the guest. That doesn’t cut it for us.

Conclusion

Host-level backups of guest clusters in Windows Server 2016 are still a no go. This is despite the high hopes we had that VHD Sets, which we were eagerly awaiting, would address this limitation. For many of us this is a show stopper for the successful virtualization of guest clusters. Every month we try again and we’re not getting anywhere. Hence the frustration and the disappointment.

More than one year after Windows Server 2016 RTM we still cannot do consistent host-level backups of a Hyper-V guest cluster, not even of those without CSV that use only standard clustered disks. Trust me on the fact that many of us have given this feedback to Microsoft. They know, and I suggest you keep voicing your concerns to them in order to keep it on their radar screen and higher on the priority list. You can do this by opening support calls and by asking for it on UserVoice. Please Microsoft, we need these workloads to be first class citizens. I’m clearly not the only unhappy camper out there, as can be seen in various support forums: “Cannot create checkpoint when shared vhdset (.vhds) is used by VM – ‘not part of a checkpoint collection’ error” and “Backing up a Windows Failover Cluster with Shared vhdx?”