Introduction

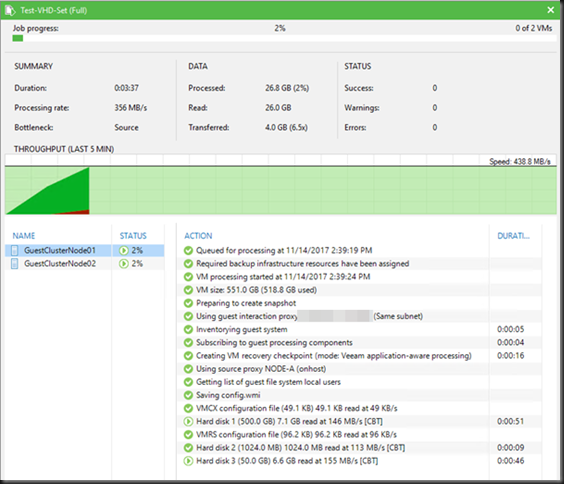

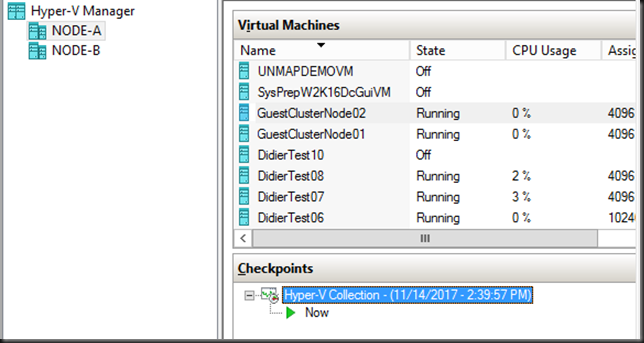

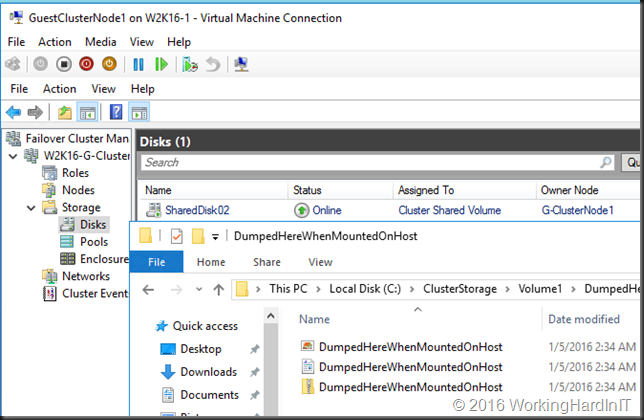

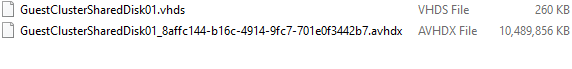

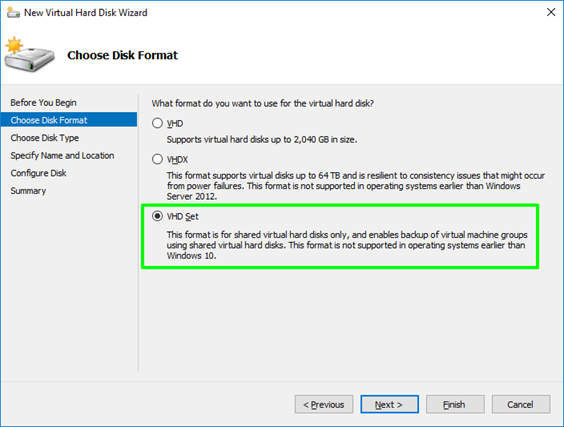

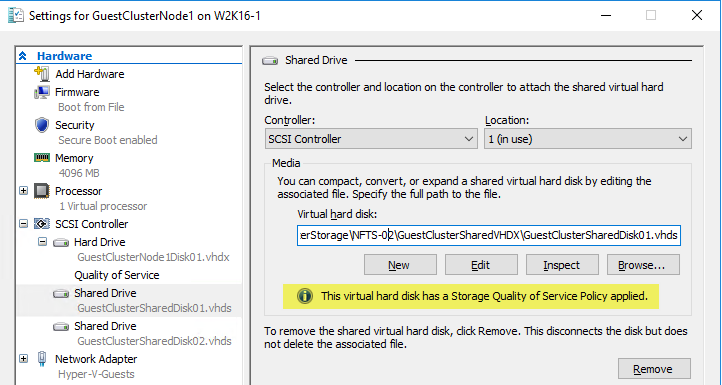

It’s not a secret that while guest clustering with VHDSets works very well. We’ve had some struggles in regards to host level backups however. Right now I leverage Veeam Agent for Windows (VAW) to do in guest backups. The most recent versions of VAW support Windows Failover Clustering. I’d love to leverage host level backups but I was struggling to make this reliable for quite a while. As it turned out recently there are some virtual machine permission issues involved we need to fix. Both Microsoft and Veeam have published guidance on this in a KB article. We automated correcting the permissions on the folder with VHDS files & checkpoints for host level Hyper-V guest cluster backup

The KB articles

Early August Microsoft published KB article with all the tips when thins fail Errors when backing up VMs that belong to a guest cluster in Windows. Veeam also recapitulated on the needed conditions and setting to leverage guest clustering and performing host level backups. The Veeam article is Backing up Hyper-V guest cluster based on VHD set. Read these articles carefully and make sure all you need to do has been done.

For some reason another prerequisite is not mentioned in these articles. It is however discussed in ConfigStoreRootPath cluster parameter is not defined and here https://docs.microsoft.com/en-us/powershell/module/hyper-v/set-vmhostcluster?view=win10-ps You will need to set this to make proper Hyper-V collections needed for recovery checkpoints on VHD Sets. It is a very unknown setting with very little documentation.

But the big news here is fixing a permissions related issue!

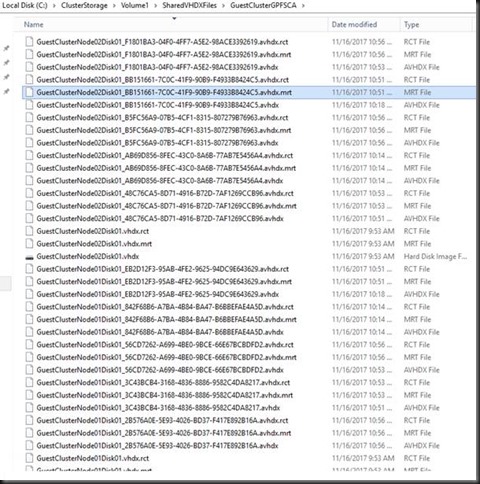

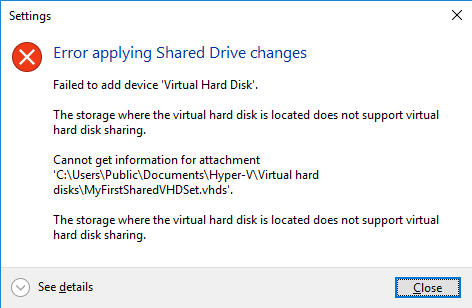

The latest addition in the list of attention points is a permission issue. These permissions are not correct by default for the guest cluster VMs shared files. This leads to the hard to pin point error.

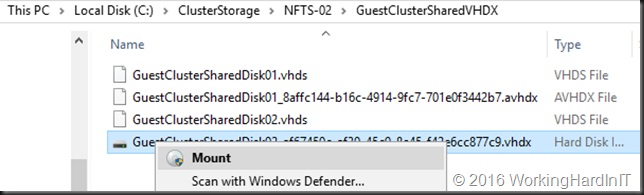

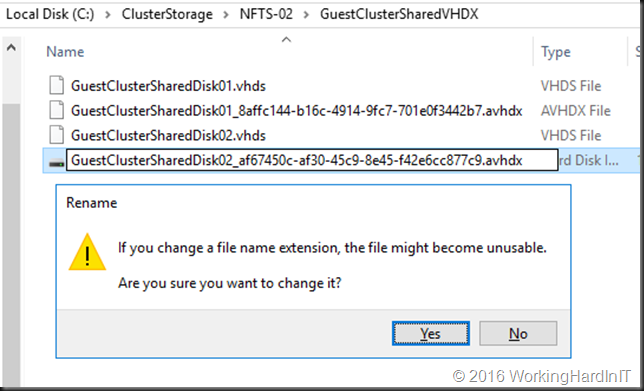

Error Event 19100 Hyper-V-VMMS 19100 ‘BackupVM’ background disk merge failed to complete: General access denied error (0x80070005). To fix this issue, the folder that holds the VHDS files and their snapshot files must be modified to give the VMMS process additional permissions. To do this, follow these steps for correcting the permissions on the folder with VHDS files & checkpoints for host level Hyper-V guest cluster backup.

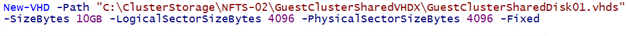

Determine the GUIDS of all VMs that use the folder. To do this, start PowerShell as administrator, and then run the following command:

get-vm | fl name, id

Output example:

Name : BackupVM

Id : d3599536-222a-4d6e-bb10-a6019c3f2b9b

Name : BackupVM2

Id : a0af7903-94b4-4a2c-b3b3-16050d5f80f

For each VM GUID, assign the VMMS process full control by running the following command:

icacls <Folder with VHDS> /grant “NT VIRTUAL MACHINE\<VM GUID>”:(OI)F

Example:

icacls “c:\ClusterStorage\Volume1\SharedClusterDisk” /grant “NT VIRTUAL MACHINE\a0af7903-94b4-4a2c-b3b3-16050d5f80f2”:(OI)F

icacls “c:\ClusterStorage\Volume1\SharedClusterDisk” /grant “NT VIRTUAL MACHINE\d3599536-222a-4d6e-bb10-a6019c3f2b9b”:(OI)F

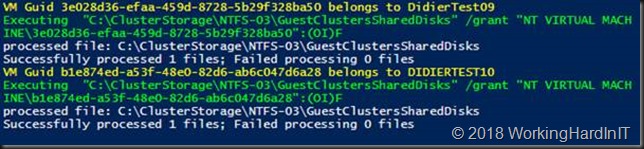

My little PowerShell script

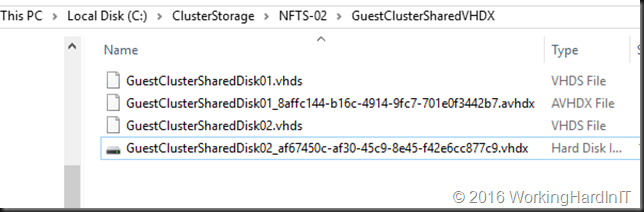

As the above is tedious manual labor with a lot of copy pasting. This is time consuming and tedious at best. With larger guest clusters the probability of mistakes increases. To fix this we write a PowerShell script to handle this for us.

#Didier Van Hoye

#Twitter: @WorkingHardInIT

#Blog: https://blog.Workinghardinit.work

#Correct shared VHD Set disk permissions for all nodes in guests cluster

$GuestCluster = "DemoGuestCluster"

$HostCluster = "LAB-CLUSTER"

$PathToGuestClusterSharedDisks = "C:\ClusterStorage\NTFS-03\GuestClustersSharedDisks"

$GuestClusterNodes = Get-ClusterNode -Cluster $GuestCluster

ForEach ($GuestClusterNode in $GuestClusterNodes)

{

#Passing the cluster name to -computername only works in W2K16 and up.

#As this is about VHDS you need to be running 2016, so no worries here.

$GuestClusterNodeGuid = (Get-VM -Name $GuestClusterNode.Name -ComputerName $HostCluster).id

Write-Host $GuestClusterNodeGuid "belongs to" $GuestClusterNode.Name

$IcalsExecute = """$PathToGuestClusterSharedDisks""" + " /grant " + """NT VIRTUAL MACHINE\"+ $GuestClusterNodeGuid + """:(OI)F"

write-Host "Executing " $IcalsExecute

CMD.EXE /C "icacls $IcalsExecute"

}

Below is an example of the output of this script. It provides some feedback on what is happening.

Correcting the permissions on the folder with VHDS files & checkpoints for host level Hyper-V guest cluster backup

PowerShell for the win. This saves you some searching and typing and potentially making some mistakes along the way. Have fun. More testing is underway to make sure things are now predictable and stable. We’ll share our findings with you.