Dell was the first OEM to actively support and deliver Microsoft Storage Spaces solutions to its customers.

They recognized the changing storage landscape and saw that Storage Spaces was one of the options customers were interested in. Adding Dell's logistical prowess and support infrastructure to the equation helps bring Storage Spaces to more customers. It removes barriers.

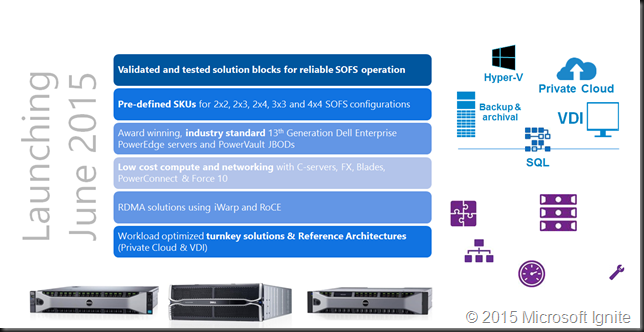

In June 2015, Dell launched its newest offering, based on 13th-generation hardware.

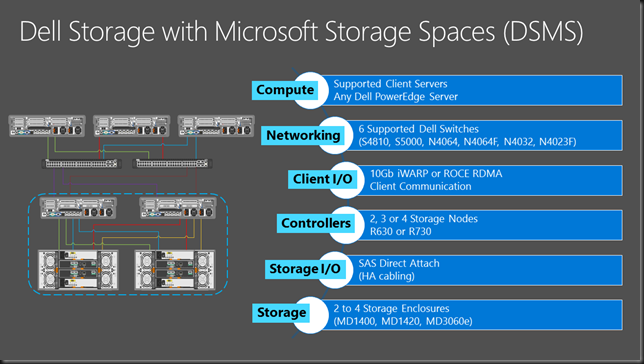

Dell recently published its documentation and manuals for Storage Spaces with the MD1420 JBOD.

You can find more information on Dell Storage Spaces here and here.

I’m looking forward to what they’ll offer in 2016 with regard to Storage Spaces Direct (S2D) and networking (10/25/40/50/100 Gbps). I expect it to be the result of several years of experience combined with the latest networking stack and storage components, such as 12 Gbps SAS controllers and NVMe options for Storage Spaces Direct. Dell has the economies of scale and the knowledge to be one of the best and biggest players in this area. Let’s hope they leverage this to everyone's advantage. They could (and should) be first to market with the most recent, most modern hardware to make these solutions shine when Windows Server 2016 reaches RTM sometime next year.