Introduction

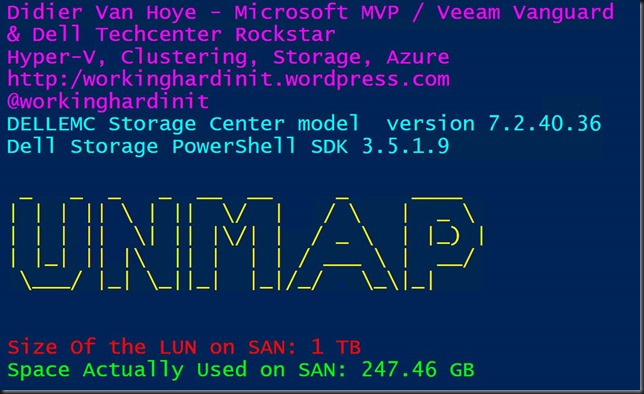

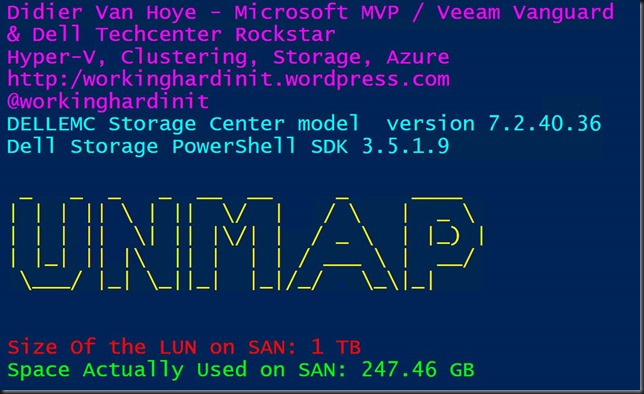

During the demos I give on the effectiveness of storage efficiencies (UNMAP, ODX) in Hyper-V, I use some PowerShell code to help show this. TRIM in the virtual machine and on the Hyper-V host passes information about deleted blocks along to a thin provisioned storage array. That means that every layer can be as efficient as possible. Here’s a picture of me doing a demo to monitor the UNMAP/TRIM effect on a thin provisioned SAN.

The script shows how a thin provisioned LUN on a SAN (DELL SC Series) grows in actual used space when data is created or copied inside VMs. When data is hard deleted, TRIM/UNMAP prevents dynamically expanding VHDX files from growing more than they need to. When a VM is shut down, the VHDX even shrinks. The same information is passed on to the storage array. So, when data is deleted, we can see the actual space used in a thin provisioned LUN on the SAN go down. That makes for a nice demo. I have some more info on the benefits and the potential issues of UNMAP if used carelessly here.
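To see the same effect outside of the SAN script, the guest and the host can be checked with built-in Windows tooling. The commands below are a minimal sketch, not part of my demo script: the drive letter and the VHDX path are placeholders I made up, and the commands need an elevated prompt (Get-VHD also needs the Hyper-V PowerShell module on the host).

```powershell
# Inside the VM: a value of 0 means delete notifications (TRIM/UNMAP) are enabled.
fsutil behavior query DisableDeleteNotify

# Inside the VM: ask the file system to resend TRIM/UNMAP for the free space
# on a volume. The drive letter E is only an example.
Optimize-Volume -DriveLetter E -ReTrim -Verbose

# On the Hyper-V host: compare the current file size of a dynamically expanding
# VHDX with its maximum size. The path is hypothetical.
Get-VHD -Path 'C:\ClusterStorage\Volume1\DemoVM\Data.vhdx' |
    Select-Object Path, VhdType, FileSize, Size
```

Running the Get-VHD query before and after deleting data in the guest should show whether FileSize stops growing, which is the host-side half of what the SAN script shows at the array level.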

Scripting options for the DELL SC Series (Compellent)

Your storage array needs to support thin provisioning and TRIM/UNMAP with Windows Server Hyper-V. If so, all you need is the PowerShell library your storage vendor provides. For the DELL Compellent series that used to be the PowerShell Command Set (2008), which made them an early adopter of PowerShell automation in the industry. That evolved with the array capabilities and still works today with the older SC series models. In 2015, Dell Storage introduced the Enterprise Manager API (EM-API) and also the Dell Storage PowerShell SDK, which uses the EM-API. This works via an EM Data Collector server and no longer connects directly to the management IP of the controllers. This is the only way to work with the newer SC series models.

It’s a powerful tool to have and allows for automation and orchestration of your storage environment once you have wrapped your head around the PowerShell commands.

That does mean that I needed to replace my original PowerShell Command Set scripts. Depending on what those scripts do, this can be done quickly and easily or it might require some more effort.

Monitoring UNMAP/TRIM effect on a thin provisioned SAN with PowerShell

As a short demo, let me showcase the Command Set and the DELL Storage PowerShell SDK versions of a script to monitor the UNMAP/TRIM effect on a thin provisioned SAN with PowerShell.

Command Set version

Apart from the way you connect to the array, the difference is in the cmdlets. In Command Set, retrieving the storage info is done as follows:

$SanVolumeToMonitor = "MyDemoSANVolume"

#Get the size of the volume

$CompellentVolumeSize = (Get-SCVolume -Name $SanVolumeToMonitor).Size

#Get the actual disk space consumed in that volume

$CompellentVolumeRealDiskSpaceUsed = (Get-SCVolume -Name $SanVolumeToMonitor).TotalDiskSpaceConsumed

In the DELL Storage PowerShell SDK version it is not harder, just different than it used to be.

$SanVolumeToMonitor = "MyDemoSANVolume"

$Volume = Get-DellScVolume -StorageCenter $StorageCenter -Name $SanVolumeToMonitor

$VolumeStats = Get-DellScVolumeStorageUsage -Instance $Volume.InstanceID

#Get the size of the volume

$CompellentVolumeSize = ($VolumeStats).ConfiguredSpace

#Get the actual disk space consumed in that volume

$CompellentVolumeRealDiskSpaceUsed = ($VolumeStats).ActiveSpace

Which gives …

I hope this gave you some inspiration to get started automating your storage provisioning and governance. On premises or in the cloud, a GUI and a click have their place, but automation is the way to go. As a bonus, the complete script is below.

#region PowerShell to keep the PoSh window on top during demos

$signature = @'
[DllImport("user32.dll")]
public static extern bool SetWindowPos(
    IntPtr hWnd,
    IntPtr hWndInsertAfter,
    int X,
    int Y,
    int cx,
    int cy,
    uint uFlags);
'@

$type = Add-Type -MemberDefinition $signature -Name SetWindowPosition -Namespace SetWindowPos -Using System.Text -PassThru

$handle = (Get-Process -id $Global:PID).MainWindowHandle

$alwaysOnTop = New-Object -TypeName System.IntPtr -ArgumentList (-1)

$type::SetWindowPos($handle, $alwaysOnTop, 0, 0, 0, 0, 0x0003) | Out-null

#endregion

function WriteVirtualDiskVolSize () {

$Volume = Get-DellScVolume -Connection $Connection -StorageCenter $StorageCenter -Name $SanVolumeToMonitor

$VolumeStats = Get-DellScVolumeStorageUsage -Connection $Connection -Instance $Volume.InstanceID

#Get the size of the volume

$CompellentVolumeSize = ($VolumeStats).ConfiguredSpace

#Get the actual disk space consumed in that volume.

$CompellentVolumeRealDiskSpaceUsed = ($VolumeStats).ActiveSpace

Write-Host -Foregroundcolor Magenta "Didier Van Hoye - Microsoft MVP / Veeam Vanguard & Dell Techcenter Rockstar"

Write-Host -Foregroundcolor Magenta "Hyper-V, Clustering, Storage, Azure, RDMA, Networking"

Write-Host -Foregroundcolor Magenta "http://blog.workinghardinit.work"

Write-Host -Foregroundcolor Magenta "@workinghardinit"

Write-Host -Foregroundcolor Cyan "DELLEMC Storage Center model $SCModel version" $SCVersion.version

Write-Host -Foregroundcolor Cyan "Dell Storage PowerShell SDK" (Get-Module DellStorage.ApiCommandSet).version

Write-Host -ForegroundColor Yellow "
 _   _  _   _  __  __     _     ____
| | | || \ | ||  \/  |   / \   |  _ \
| | | ||  \| || |\/| |  / _ \  | |_) |
| |_| || |\  || |  | | / ___ \ |  __/
 \___/ |_| \_||_|  |_|/_/   \_\|_|
"

Write-Host "" -ForegroundColor Red

Write-Host "Size Of the LUN on SAN: $CompellentVolumeSize" -ForegroundColor Red

Write-Host "Space Actually Used on SAN: $CompellentVolumeRealDiskSpaceUsed" -ForegroundColor Green

#Wait a while before you run these queries again.

Start-Sleep -Milliseconds 1000

}

#If the Storage Center module isn't loaded, do so!

if (!(Get-Module DellStorage.ApiCommandSet)) {

import-module "C:\SysAdmin\Tools\DellStoragePowerShellSDK\DellStorage.ApiCommandSet.dll"

}

$DsmHostName = "MyDSMHost.domain.local"

$DsmUserName = "MyAdminName"

$DsmPwd = "MyPass"

$SCName = "MySCName"

# Convert the plain-text password to a SecureString (demo only; use Read-Host -AsSecureString to prompt instead)

$DsmPassword = (ConvertTo-SecureString -AsPlainText $DsmPwd -Force)

# Create the connection

$Connection = Connect-DellApiConnection -HostName $DsmHostName `
    -User $DsmUserName `
    -Password $DsmPassword

$StorageCenter = Get-DellStorageCenter -Connection $Connection -name $SCName

$SCVersion = $StorageCenter | Select-Object Version

$SCModel = (Get-DellScController -Connection $Connection -StorageCenter $StorageCenter -InstanceName "Top Controller").model.Name.toupper()

$SanVolumeToMonitor = "MyDemoSanVolume"

#Just let the script run in a loop indefinitely.

while ($true) {

Clear-Host

WriteVirtualDiskVolSize

}
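The script prints the configured and active space as the SDK returns them. For a demo it can also help to show how full the thin provisioned LUN actually is. The helper below is a small sketch of that idea. Get-ThinUsageSummary is a hypothetical function of my own, not part of the Dell Storage PowerShell SDK, and it assumes both values are available as plain byte counts. The SDK may represent them differently, so convert them first if needed.

```powershell
# Hypothetical helper, not part of the Dell Storage PowerShell SDK.
# Assumes both inputs are plain byte counts.
function Get-ThinUsageSummary {
    param(
        [Parameter(Mandatory)] [double] $ConfiguredBytes,
        [Parameter(Mandatory)] [double] $ActiveBytes
    )

    if ($ConfiguredBytes -le 0) {
        throw "ConfiguredBytes must be greater than zero."
    }

    # Return the sizes in GB and the share of the LUN that is actually consumed.
    [pscustomobject]@{
        ConfiguredGB = [math]::Round($ConfiguredBytes / 1GB, 2)
        ActiveGB     = [math]::Round($ActiveBytes / 1GB, 2)
        PercentUsed  = [math]::Round(($ActiveBytes / $ConfiguredBytes) * 100, 1)
    }
}

# Example with made-up numbers: a 500 GB LUN with 125 GB actually consumed.
Get-ThinUsageSummary -ConfiguredBytes 500GB -ActiveBytes 125GB
```

Called inside the monitoring function, the PercentUsed value gives the audience a single number to watch drop when data is deleted in the VM.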

![clip_image001_thumb[1] clip_image001_thumb[1]](https://blog.workinghardinit.work/wp-content/uploads/2018/08/clip_image001_thumb1_thumb.png)

![clip_image003_thumb[1] clip_image003_thumb[1]](https://blog.workinghardinit.work/wp-content/uploads/2018/08/clip_image003_thumb1_thumb.png)