Introduction

As an observer of the hypervisor market in 2024 and 2025, you have undoubtedly noted considerable commotion and dissent. I did not have to deal with it, as I adopted and specialized in Hyper-V from day one. Even better, I am pleased to see that many more people now have the opportunity to experience Hyper-V and appreciate its benefits.

While the management UI is not as sleek as, and is more fragmented than, that of some competitors, it offers all the necessary features for free. Additionally, PowerShell automation enables you to create any tooling you desire, tailored to your specific needs. Do that well, and you do not need System Center Virtual Machine Manager for added capabilities. Denying the technical capabilities and excellence of Hyper-V only diminishes the credibility and standing of those who do so in the community.

That has been my approach for many years, running mission-critical, real-time data-sensitive workloads on Hyper-V clusters. So yes, Microsoft could have managed the tooling experience a bit better, and that would have put them in an even better position to welcome converting customers. Despite that, adoption has been rising significantly over the last 18 months and not just in the SME market.

Commotion, fear, uncertainty, and doubt

The commotion in the hypervisor world has led people to look at other hypervisors to support their business, either partially or wholesale. The moment you run workloads on a hypervisor, you must be able to protect, manage, move, and restore these workloads when the need arises. Trust me, no matter how blessed you are, that moment comes to us all. How well you can handle it, on a scale from minimal to severe impact, depends on the nature of the issue and your preparedness to address it.

A more diverse hypervisor landscape among customers means that data protection vendors need to support those hypervisors. I think that most people will recognize that developing high-quality software, managing its lifecycle, and supporting it in the real world requires significant investment. So then comes the question: which ones to support? What percentage of customers will go for hypervisor x versus y or z? I leave that challenge to people like Anton Gostev and his team of experts. What I can say is that Hyper-V has taken a significant leap in adoption, as it is a mature and capable platform built and supported by Microsoft.

The second rise of Hyper-V

Over the past 18 months, I have observed a significant increase in the adoption of Hyper-V. And why not? It is a mature and capable platform built and supported by Microsoft. The latter makes moving to it a less stressful choice as the ecosystem and community are large and well-established. I believe that Hyper-V is one of the primary beneficiaries of the hypervisor turmoil. Adoption is experiencing a second, significant rise. For Veeam, this was not a problem. They have provided excellent Hyper-V support for a long time, and I have been a pleased customer, building some of the best and most performant backup fabrics on our chosen hardware.

But who are those customers adopting Hyper-V? Are they small and medium-sized enterprises (SMEs) or managed service providers? Or is Hyper-V making headway with big corporate enterprises as well? Well, neither Microsoft nor Veeam shares such data with me. So, what do I do? Weak to strong signal intelligence! I observe what companies are doing and what they are saying, in combination with what people ask me directly. That has me convinced that some larger corporations have made the move to Hyper-V. Some of the stronger signals came from Veeam.

Current and future Veeam releases

Let’s look at the more recent releases of Veeam Backup & Replication. With version 12.3, support for Windows Server 2025 arrived very soon after the general availability of that OS. Hyper-V, by the way, is getting all the improvements and new capabilities just as much as Azure Local. That indicates Microsoft’s interest in making Hyper-V an excellent option for any customer, regardless of how they choose to run it, be it on local storage, with shared storage, on Storage Spaces Direct (S2D), or Azure Local. That is a strong, positive signal compared to previous statements. Naturally, Hyper-V benefits from Veeam’s ongoing efforts to resolve issues, enhance features, and add capabilities, providing the best possible backup fabric for everyone. I will discuss that in later articles.

Now, the strong and very positive signal from Veeam regarding Hyper-V came with updates to Veeam Recovery Orchestrator. Firstly, Veeam Recovery Orchestrator 7.2 (released on February 18th, 2025) introduced support for Hyper-V environments. What does that tell me? The nature, size, and number of customers leveraging Hyper-V that need and are willing to pay for Veeam Recovery Orchestrator have grown to a point where Veeam is willing to invest in developing and supporting it. That is new! On the Product Update page, https://community.veeam.com/product-updates/veeam-recovery-orchestrator-7-2-9827, you can find more information. The one requirement that sticks out is the need for System Center Virtual Machine Manager. Look at these key considerations:

- System Center Virtual Machine Manager (SCVMM) 2022 & CSV storage registered in SCVMM is supported.

- Direct connections to Hyper-V hosts are not supported.

But not that much later, on July 9th, 2025, in Veeam Recovery Orchestrator 7.2.1 (see https://community.veeam.com/product-updates/veeam-recovery-orchestrator-7-2-1-10876), we find these significant enhancements:

- Support for Azure Local recovery target: You can now use Azure Local as a recovery target for both vSphere and Hyper-V workloads, expanding flexibility and cloud recovery options.

- Hyper-V direct-connected cluster support: Extended Hyper-V functionality enables support for direct-connected clusters, eliminating the need for SCVMM. This move simplifies deployment and management for Hyper-V environments.

- MFA integration for VRO UI: Multi-Factor Authentication (MFA) can now be enabled to secure logins to the VRO user interface, providing enhanced security and compliance. Microsoft Authenticator and Google Authenticator apps are supported.

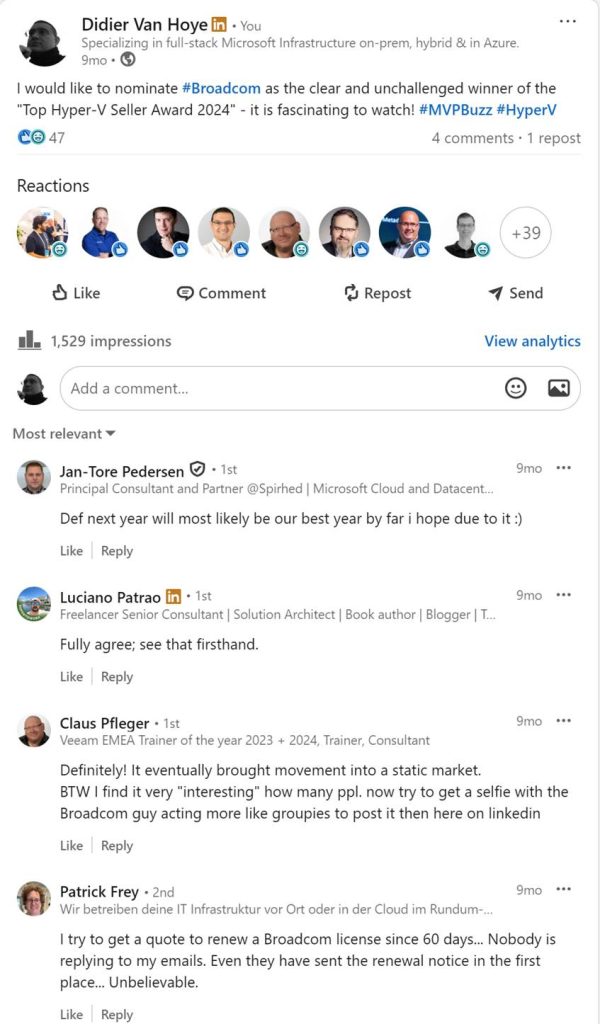

The first two enhancements are especially important, as they enable Veeam Recovery Orchestrator to support many more Hyper-V customers. Again, this is a strong signal that Hyper-V is making inroads, enough so for Veeam to invest. Ironically, we have Broadcom to thank for this, which is why, in November 2024, I nominated Broadcom as the clear and unchallenged winner of the “Top Hyper-V Seller Award 2024” (https://www.linkedin.com/posts/didiervanhoye_broadcom-mvpbuzz-hyperv-activity-7257391073910566912-bTTF/).

Conclusion

Hyper-V and Veeam are a potent combination that continues to evolve as market demands change. Twelve years ago, I was testing out Veeam Backup & Replication, and six months later, I became a Veeam customer. I am still convinced that for my needs and those of the environments I support, I have made a great choice.

The longevity of a technology, and how it evolves in response to customer and security needs, is a key factor in judging whether it was a great choice. In that respect, Hyper-V and Veeam have performed exceptionally well, striking multiple bullseye shots without missing a beat. And by missing out on the hypervisor drama, we have hit the bullseye once more!