Introduction

In "Shared nothing live migration with a virtual switch change and VLAN ID configuration" I published a sample script. The script works well, but there are two areas for improvement. The first one is covered in "Checkpoint references a non-existent virtual switch". This post is about the second one: I also need to check the virtual switch QoS mode during migrations. A couple of the virtual machines on the source nodes had an absolute minimum and/or maximum bandwidth set. On the target nodes, all the virtual switches are created with PowerShell, which defaults to weight mode for QoS. That is the more sensible option, albeit not always the easiest or most practical one for people to use.

Virtual switch QoS mode during migrations

First, a quick recap of what we are doing. The challenge was to shared nothing live migrate virtual machines to a host with different virtual switch names and VLAN IDs. We did so by adding dummy virtual switches, with the same names as the source virtual switches, to the target host. This made shared nothing live migration possible. On arrival of the virtual machine on the target host, we immediately connect the virtual network adapters to the final virtual switch and set the correct VLAN IDs. That works very well. You drop one or at most two pings, which is as good as it gets.
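To illustrate the dummy switch idea, here is a minimal sketch that works out which virtual switch names exist on the source host but not on the target host. `Get-MissingSwitchName` is a hypothetical helper, not part of the script or the Hyper-V module, and it only compares name lists, so it runs without Hyper-V.

```powershell
# Hypothetical helper: return the source switch names that have no
# same-named switch on the target host. Those are the dummy switches
# the target host needs for shared nothing live migration to proceed.
function Get-MissingSwitchName {
    param(
        [string[]] $SourceSwitchName,
        [string[]] $TargetSwitchName = @()
    )
    # -notcontains compares strings case-insensitively in PowerShell.
    $SourceSwitchName | Where-Object { $TargetSwitchName -notcontains $_ }
}
```

Against real hosts you could feed it `(Get-VMSwitch -ComputerName $SourceNode).Name` and `(Get-VMSwitch -ComputerName $TargetNode).Name`, then create each missing name on the target with `New-VMSwitch -ComputerName $TargetNode -Name <name> -SwitchType Private`. Using a private switch as the dummy is an assumption; what matters for the migration is that a switch with a matching name exists.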

This goes wrong under the following conditions:

- The source virtual switch has QoS mode absolute.

- A virtual network adapter connected to the source virtual switch has MinimumBandwidthAbsolute and/or MaximumBandwidth set.

- The target virtual switch has QoS mode weight.

This causes connectivity loss, as you cannot set absolute values on a virtual network adapter attached to a weight mode virtual switch. Connecting the virtual network adapter to the new virtual switch simply fails and you lose connectivity. Remember that the virtual machine is connected to a dummy virtual switch just to make the live migration work, so we need to swap it over immediately. Oddly enough, the VLAN ID does get set correctly. Let’s deal with this.
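The three failure conditions can be captured in a small predicate. This is a sketch, assuming the mode strings Hyper-V reports in `BandwidthReservationMode` (`Absolute`, `Weight`) and treating a missing or zero bandwidth value as "not configured". `Test-QoSSwitchConflict` is a hypothetical name.

```powershell
# Hypothetical predicate: $true when connecting the adapter to the target
# switch is expected to fail because absolute bandwidth values are set
# while moving from an Absolute mode switch to a Weight mode switch.
function Test-QoSSwitchConflict {
    param(
        [string] $SourceMode,
        [string] $TargetMode,
        # A $null value (no bandwidth management configured) converts to 0.
        [long] $MinimumBandwidthAbsolute = 0,
        [long] $MaximumBandwidth = 0
    )
    $hasAbsoluteValue = ($MinimumBandwidthAbsolute -gt 0) -or ($MaximumBandwidth -gt 0)
    return ($SourceMode -eq 'Absolute') -and ($TargetMode -eq 'Weight') -and $hasAbsoluteValue
}
```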

Steps to fix this issue

First of all, we adapt the script to check the QoS mode on the source virtual switches. If it is set to absolute, we know we need to check for any MinimumBandwidthAbsolute and MaximumBandwidth settings on the virtual network adapters connected to those virtual switches. These changes are part of the complete script below.
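The QoS mode check can be generalized to any number of source switches. This sketch uses a hypothetical `ConvertTo-SwitchQoSModeMap` function that turns switch objects into a name to mode lookup table. It assumes only that each input object has `Name` and `BandwidthReservationMode` properties, as the objects returned by `Get-VMSwitch` do, so plain test objects work too.

```powershell
# Hypothetical helper: build a hashtable of switch name -> QoS mode string.
function ConvertTo-SwitchQoSModeMap {
    param(
        [Parameter(ValueFromPipeline = $true)]
        [object[]] $InputSwitch
    )
    begin { $map = @{} }
    process {
        foreach ($s in $InputSwitch) {
            # String expansion works for the enum Hyper-V returns and for plain strings.
            $map[$s.Name] = "$($s.BandwidthReservationMode)"
        }
    }
    end { $map }
}
```

On a real source host that would be `$QoSModes = Get-VMSwitch -ComputerName $SourceNode | ConvertTo-SwitchQoSModeMap`, after which `$QoSModes[$OldVirtualSwitch02] -eq 'Absolute'` replaces a dedicated `if` block per switch.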

Secondly, we adapt the script to check every virtual network adapter for its bandwidth management settings. If we find a configured MinimumBandwidthAbsolute or MaximumBandwidth value, we set it to 0, which disables that bandwidth setting. This ensures that connecting the virtual network adapters to the new virtual switch with QoS mode weight succeeds. These changes are also part of the complete script below.
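The reset logic can be sketched as a hypothetical helper, `Get-BandwidthResetParameter`, that inspects an adapter's `BandwidthSetting` and returns only the `Set-VMNetworkAdapter` parameters that need resetting, as a hashtable ready for splatting. It assumes `BandwidthSetting` is `$null` when no bandwidth management is configured and treats 0 as "not set".

```powershell
# Hypothetical helper: return a hashtable of Set-VMNetworkAdapter parameters
# that reset configured absolute bandwidth values to 0. Empty = nothing to do.
function Get-BandwidthResetParameter {
    param([object] $BandwidthSetting)
    $reset = @{}
    if ($null -eq $BandwidthSetting) { return $reset }
    if ($BandwidthSetting.MaximumBandwidth -gt 0) { $reset['MaximumBandwidth'] = 0 }
    if ($BandwidthSetting.MinimumBandwidthAbsolute -gt 0) { $reset['MinimumBandwidthAbsolute'] = 0 }
    return $reset
}
```

With a real adapter object `$nic`, you would splat the result in one call: `$reset = Get-BandwidthResetParameter -BandwidthSetting $nic.BandwidthSetting; if ($reset.Count -gt 0) { Set-VMNetworkAdapter -VMNetworkAdapter $nic @reset }`. Returning an empty hashtable keeps adapters without absolute values out of the `Set-VMNetworkAdapter` calls.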

Finally, the complete script

```powershell
#The source Hyper-V host
$SourceNode = 'NODE-A'
#The CSV you want to storage migrate your VMs away from (wildcard pattern for -like)
$SourceRootPath = "C:\ClusterStorage\Volume1*"
#The target Hyper-V host
$TargetNode = 'ZULU'
#The storage path you want to storage migrate your VMs to
$TargetRootPath = "C:\ClusterStorage\Volume1"
#The old (dummy) virtual switches and the new virtual switch on the target host
$OldVirtualSwitch01 = 'vSwitch-VLAN500'
$OldVirtualSwitch02 = 'vSwitch-VLAN600'
$NewVirtualSwitch = 'ConvergedVirtualSwitch'
#The VLAN IDs to set for the adapters coming from each old virtual switch
$VlanId01 = 500
$VlanId02 = 600

#Record which source virtual switches use absolute QoS mode
$OldVirtualSwitch01QoSMode = $Null
$OldVirtualSwitch02QoSMode = $Null
if ((Get-VMSwitch -ComputerName $SourceNode -Name $OldVirtualSwitch01).BandwidthReservationMode -eq 'Absolute') {
    $OldVirtualSwitch01QoSMode = 'Absolute'
}
if ((Get-VMSwitch -ComputerName $SourceNode -Name $OldVirtualSwitch02).BandwidthReservationMode -eq 'Absolute') {
    $OldVirtualSwitch02QoSMode = 'Absolute'
}

#Grab all the VMs that have virtual disks on the source CSV - WARNING: for W2K12 you'll need to loop through all cluster nodes.
$AllVMsOnRootPath = Get-VM -ComputerName $SourceNode | Where-Object { $_.HardDrives.Path -like $SourceRootPath }

#We loop through all VMs we find on our $SourceRootPath
ForEach ($VM in $AllVMsOnRootPath) {
    #We generate the final VM destination path
    $TargetVMPath = $TargetRootPath + "\" + ($VM.Name).ToUpper()
    #Grab the VM name and show the VM ID and name
    $VMName = $VM.Name
    $VM.VMId
    $VMName

    #If the VM is still clustered, remove it from the cluster as shared nothing live migration will otherwise fail.
    if ($VM.IsClustered -eq $True) {
        Write-Host -ForegroundColor Magenta $VM.Name "is clustered and is being removed from cluster"
        Remove-ClusterGroup -VMId $VM.VMId -Force -RemoveResources
        #Re-query the VM instead of relying on the cached $VM object
        Do { Start-Sleep -Seconds 1 } While ((Get-VM -ComputerName $SourceNode -Id $VM.VMId).IsClustered -eq $True)
        Write-Host -ForegroundColor Yellow $VM.Name "has been removed from cluster"
    }

    #If the VM has checkpoints, notify the user of the script, as this will cause issues after switching to the new virtual
    #switch on the target node. Live migration between cluster nodes will fail if the checkpoints reference one or more
    #non-existing virtual switches. These must be removed before or after completing the shared nothing migration.
    #The script does this automatically after the migration, not before, as I want the VM untouched if the shared nothing
    #migration fails.
    $checkpoints = Get-VMCheckpoint -ComputerName $SourceNode -VMName $VMName
    if ($Null -ne $checkpoints) {
        Write-Host -ForegroundColor Yellow "This VM has checkpoints"
        Write-Host -ForegroundColor Yellow "This VM will be migrated to the new host"
        Write-Host -ForegroundColor Yellow "Only after a successful migration will ALL the checkpoints be removed"
    }

    #Do the actual shared nothing live migration of the VM. $TargetVMPath creates the default subfolder structure
    #for the virtual machine config, snapshots, smart paging & virtual hard disk files.
    Move-VM -Name $VMName -ComputerName $VM.ComputerName -IncludeStorage -DestinationStoragePath $TargetVMPath -DestinationHost $TargetNode
    $MovedVM = Get-VM -ComputerName $TargetNode -Name $VMName

    $vNICsOnOldvSwitch01 = Get-VMNetworkAdapter -ComputerName $TargetNode -VMName $MovedVM.VMName | Where-Object SwitchName -eq $OldVirtualSwitch01
    if ($Null -ne $vNICsOnOldvSwitch01) {
        foreach ($VMNetworkAdapter in $vNICsOnOldvSwitch01) {
            #Absolute values block the connection to a weight mode virtual switch, so reset them to 0 first
            if ($OldVirtualSwitch01QoSMode -eq 'Absolute') {
                if ($VMNetworkAdapter.BandwidthSetting.MaximumBandwidth -gt 0) {
                    Write-Host -ForegroundColor Cyan "Network adapter $($VMNetworkAdapter.Name) of VM $VMName MaximumBandwidth will be reset to 0."
                    Set-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -MaximumBandwidth 0
                }
                if ($VMNetworkAdapter.BandwidthSetting.MinimumBandwidthAbsolute -gt 0) {
                    Write-Host -ForegroundColor Cyan "Network adapter $($VMNetworkAdapter.Name) of VM $VMName MinimumBandwidthAbsolute will be reset to 0."
                    Set-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -MinimumBandwidthAbsolute 0
                }
            }
            Write-Host 'Moving to correct vSwitch'
            Connect-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -SwitchName $NewVirtualSwitch
            Write-Host "Setting VLAN $VlanId01"
            Set-VMNetworkAdapterVlan -VMNetworkAdapter $VMNetworkAdapter -Access -VlanId $VlanId01
        }
    }

    $vNICsOnOldvSwitch02 = Get-VMNetworkAdapter -ComputerName $TargetNode -VMName $MovedVM.VMName | Where-Object SwitchName -eq $OldVirtualSwitch02
    if ($Null -ne $vNICsOnOldvSwitch02) {
        foreach ($VMNetworkAdapter in $vNICsOnOldvSwitch02) {
            #Absolute values block the connection to a weight mode virtual switch, so reset them to 0 first
            if ($OldVirtualSwitch02QoSMode -eq 'Absolute') {
                if ($VMNetworkAdapter.BandwidthSetting.MaximumBandwidth -gt 0) {
                    Write-Host -ForegroundColor Cyan "Network adapter $($VMNetworkAdapter.Name) of VM $VMName MaximumBandwidth will be reset to 0."
                    Set-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -MaximumBandwidth 0
                }
                if ($VMNetworkAdapter.BandwidthSetting.MinimumBandwidthAbsolute -gt 0) {
                    Write-Host -ForegroundColor Cyan "Network adapter $($VMNetworkAdapter.Name) of VM $VMName MinimumBandwidthAbsolute will be reset to 0."
                    Set-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -MinimumBandwidthAbsolute 0
                }
            }
            Write-Host 'Moving to correct vSwitch'
            Connect-VMNetworkAdapter -VMNetworkAdapter $VMNetworkAdapter -SwitchName $NewVirtualSwitch
            Write-Host "Setting VLAN $VlanId02"
            Set-VMNetworkAdapterVlan -VMNetworkAdapter $VMNetworkAdapter -Access -VlanId $VlanId02
        }
    }

    #If the VM has checkpoints, this is when we remove them.
    $checkpoints = Get-VMCheckpoint -ComputerName $TargetNode -VMName $MovedVM.VMName
    if ($Null -ne $checkpoints) {
        Write-Host -ForegroundColor Yellow "This VM has checkpoints and they will ALL be removed"
        $checkpoints | Remove-VMCheckpoint
    }
}
```
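The script handles each old virtual switch with its own, nearly identical block. One way to remove that duplication, sketched here as an assumption rather than the author's code, is a lookup table from old switch name to VLAN ID plus a hypothetical `Get-NicTargetConfig` function that tells you where an adapter should end up.

```powershell
# Hypothetical lookup table: old (dummy) virtual switch name -> VLAN ID on the
# new virtual switch. Values mirror the variables at the top of the script.
$SwitchVlanMap = @{
    'vSwitch-VLAN500' = 500
    'vSwitch-VLAN600' = 600
}

# Hypothetical helper: return the target switch and VLAN ID for an adapter
# currently on $CurrentSwitchName, or $null when the adapter should be left alone.
function Get-NicTargetConfig {
    param(
        [string] $CurrentSwitchName,
        [hashtable] $Map,
        [string] $NewVirtualSwitch = 'ConvergedVirtualSwitch'
    )
    if (-not $Map.ContainsKey($CurrentSwitchName)) { return $null }
    [pscustomobject]@{
        SwitchName = $NewVirtualSwitch
        VlanId     = $Map[$CurrentSwitchName]
    }
}
```

Inside the VM loop, you would call it once per adapter returned by `Get-VMNetworkAdapter -ComputerName $TargetNode -VMName $VMName` and, when it returns a result, pass `SwitchName` to `Connect-VMNetworkAdapter` and `VlanId` to `Set-VMNetworkAdapterVlan -Access`. Adding a third old switch then only means adding one line to `$SwitchVlanMap`.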

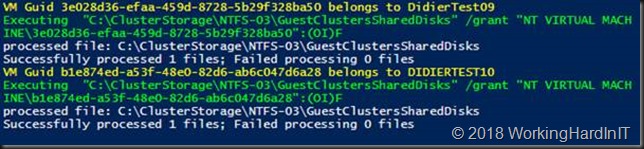

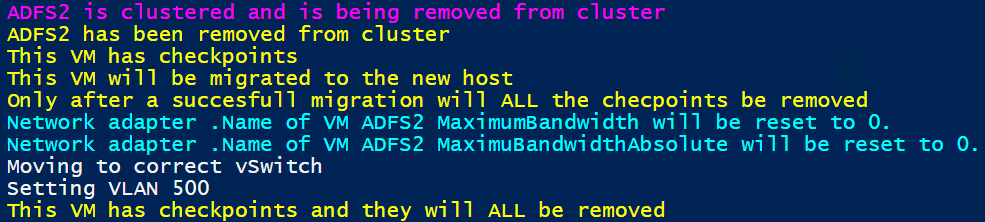

Below is the output of a VM where we had to change the switch name, enable a VLAN ID, deal with absolute QoS settings and remove checkpoints. All this without causing downtime. Nor did we change the original virtual machine in case the shared nothing migration fails.

Some observations

Using PowerShell is great here. You can only set minimum bandwidth weights via PowerShell. The GUI only handles absolute values and throws an error when you try to use it on a virtual switch configured for weight mode.

This means you can embed setting the weights in your script if you so desire. If you do, read up on how best to handle this. Trying to juggle the weight settings so they add up to 100 in a dynamic environment is a bit of a challenge. So use the default flow pool and keep the number of virtual network adapters with unique settings to a minimum.
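To get a feel for what weights mean, here is a simplified model, an assumption rather than Hyper-V's exact scheduling behavior: under full contention, each adapter gets the link speed times its weight divided by the sum of all weights. The hypothetical `Get-WeightedShareGbps` function also warns when the weights add up to more than 100.

```powershell
# Simplified model (assumption): share = link speed * weight / sum of weights.
function Get-WeightedShareGbps {
    [CmdletBinding()]
    param(
        # Adapter (or flow pool) name -> minimum bandwidth weight (0-100)
        [hashtable] $Weight,
        # Link speed in Gbps
        [double] $LinkGbps
    )
    $total = ($Weight.Values | Measure-Object -Sum).Sum
    if ($total -gt 100) {
        Write-Warning "Weights add up to $total, which is more than 100."
    }
    $shares = @{}
    foreach ($name in $Weight.Keys) {
        $share = 0
        if ($total -gt 0) { $share = $LinkGbps * $Weight[$name] / $total }
        $shares[$name] = $share
    }
    $shares
}
```

For example, weights of 50, 30 and 20 on a 25 Gbps link work out to 12.5, 7.5 and 5 Gbps. The default flow pool can be modeled as one more entry in the hashtable. On a real host, the weights themselves are set with `Set-VMNetworkAdapter -MinimumBandwidthWeight` and, for the default flow pool, `Set-VMSwitch -DefaultFlowMinimumBandwidthWeight`.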

Conclusion

To avoid downtime, we removed any absolute minimum and maximum bandwidth settings from the virtual network adapters. By doing so, we ensured that the swap to the new virtual switch right after the successful shared nothing live migration succeeds. If you want, you can set weights on the virtual network adapters afterward. But as the bandwidth on these new hosts is now a redundant 25 Gbps, the need was no longer there. As a result, we just left them without. This can always be configured later if it turns out to be needed.

Warning: this is a demo script. It lacks error handling and logging. It can also contain mistakes. But hey, you get it for free to adapt and use. Test and adapt it in a lab. You are responsible for what you do in your environments. Running scripts downloaded from the internet without any validation makes you a certified nut case. That is not my wrongdoing.

I hope this helps some of you. Thanks for reading.