There was a brief moment of “this can’t be good” the sys admin looked at the file size of the backup folders and compared it to the size reported for the files. Sure I had told him that Windows inbox deduplication rocked but this had to be too good to be true or deduplication had just eaten all the backup files and he was “toast”. It was neither. But that requires some explanation. The good news is that Windows Data Deduplication combined with a backup product that supports it like VEEAM will save you a ton of money on deduplication licenses some charge and storage costs.

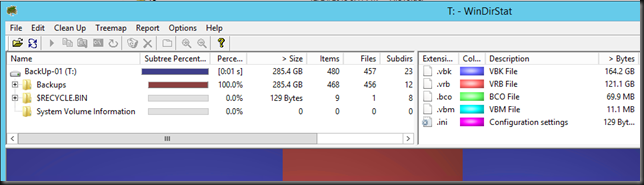

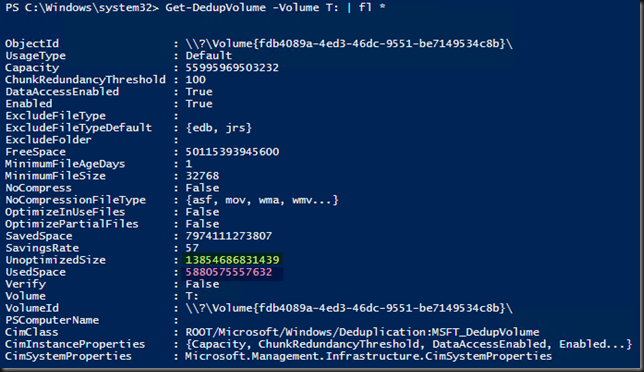

This is what he saw, and what caused the raised eye brow. 12.4TB reduced to 285GB.

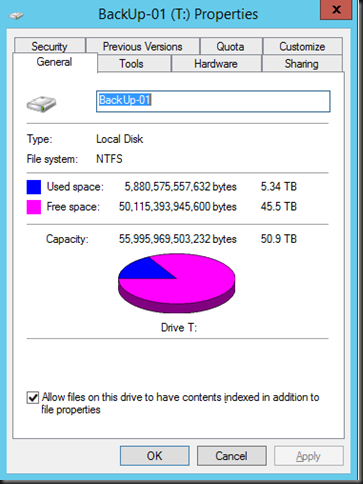

Deduplication can’t be that great, right? Did something go wrong? Checking the properties of ALL selected files themselves did not report anything else but compared to the volume info for used space something seems very wrong. That’s supposed to be 5.34 TB.

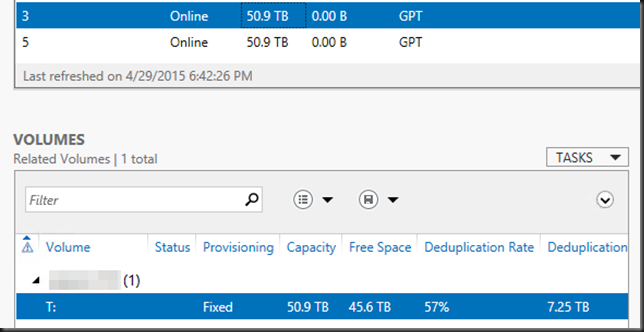

The volume properties report the effective spaces consumed on the volume, so that reflects the true deduplication results. You can confirm this with PowerShell

A savings rate of 57% and 5.34 TB of actually consumes space (5880575557632 bytes) and an unoptimized size of 12.4 TB. Just as server manager reports.

So what is explorer up to at the folder and file level? Nothing, it just can’t show you the complete picture. Windows Data Deduplication stores duplicated chunks into the System Volume Information folder. Windows explorer runs under your account and has no access to that folder and doesn’t report the size of all chunks in there. The only thing it does reports are the non duplicated bits that are left in the source folder. In our case where the backups reside. The result is, as said, raised eyebrows.

The same is true for any other tool actually, like WinDirStat in the blow screenshot.

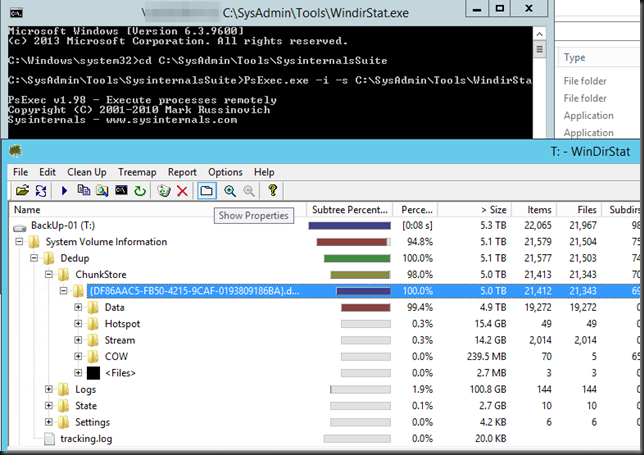

When we run this tools as system we get a different picture and you can navigate to the actual ChunkStore and learn more about the internals.