Introduction

Let’s take a look at setting up Discrete Device Assignment with a GPU. Windows Server 2016 introduces Discrete Device Assignment (DDA). This allows a PCI Express device that supports it to be passed directly through to a virtual machine.

The idea behind this is to gain extra performance. In our case we’ll use one of the four display adapters in our NVIDIA GRID K1 to assign to a VM via DDA. The other 3 can remain for use with RemoteFX. Perhaps we could even leverage DDA for GPUs that do not support RemoteFX to be used directly by a VM, we’ll see.

As we directly assign the hardware to a VM we need to install the drivers for that hardware inside of that VM, just like you need to do with real hardware.

I refer you to the starting blog of a series on DDA in Windows 2016:

- Discrete Device Assignment — Description and background

- Discrete Device Assignment — Machines and devices

- Discrete Device Assignment — GPUs

- Discrete Device Assignment — Guests and Linux

Here you can get a wealth of extra information. My experiments with this feature relied heavily on these blogs and on the GitHub script Microsoft provides to query a host for DDA capable devices. That was very educational with regard to finding out the PowerShell we needed to get DDA to work! Please see A 1st look at Discrete Device Assignment in Hyper-V to see the output of this script and how we identified that our NVIDIA GRID K1 card was a DDA capable candidate.

Requirements

There are some conditions the host system needs to meet to even be able to use DDA. The host needs to support Access Control Services (ACS), which enables pass through of PCI Express devices in a secure manner. The host also needs to support SLAT and Intel VT-d2 or AMD I/O MMU. This is dependent on UEFI, which is not a big issue. All my W2K12R2 cluster nodes & member servers run UEFI already anyway. All in all, these requirements are covered by modern hardware. The hardware you buy today for Windows Server 2012 R2 meets those requirements when you buy decent enterprise grade hardware such as the DELL PowerEdge R730 series. That’s the model I had available to test with. Nothing about these requirements is shocking or unusual.

A PCI Express device that is used for DDA cannot be used by the host in any way. You’ll see we actually dismount it from the host. It also cannot be shared amongst VMs. It’s used exclusively by the VM it’s assigned to. As you can imagine this is not a scenario for live migration and VM mobility. This is a major difference between DDA and SR-IOV or virtual fibre channel, where live migration is supported in very creative, different ways. Now I’m not saying Microsoft will never be able to combine DDA with live migration, but to the best of my knowledge it’s not available today.

The host requirements are also listed here: https://technet.microsoft.com/en-us/library/mt608570.aspx

- The processor must have either Intel’s Extended Page Table (EPT) or AMD’s Nested Page Table (NPT).

The chipset must have:

- Interrupt remapping: Intel’s VT-d with the Interrupt Remapping capability (VT-d2) or any version of AMD I/O Memory Management Unit (I/O MMU).

- DMA remapping: Intel’s VT-d with Queued Invalidations or any AMD I/O MMU.

- Access control services (ACS) on PCI Express root ports.

- The firmware tables must expose the I/O MMU to the Windows hypervisor. Note that this feature might be turned off in the UEFI or BIOS. For instructions, see the hardware documentation or contact your hardware manufacturer.

You get this technology on premises with Windows Server 2016 as the host and with virtual machines running Windows Server 2016, Windows 10 (1511 or higher) and Linux distros that support it. It’s also an offering on high end Azure VMs (IaaS). It supports both generation 1 and generation 2 virtual machines, albeit that generation 2 is x64 only, which might be important for certain client VMs. We dumped 32-bit operating systems over a decade ago, so to me this is a non-issue.

For this article I used a DELL PowerEdge R730 with an NVIDIA GRID K1 GPU, Windows Server 2016 TPv4 with the March 2016 CU, and Windows 10 Insider Build 14295.

Microsoft supports 2 types of devices at the moment:

- GPUs and coprocessors

- NVMe (Non-Volatile Memory express) SSD controllers

Other devices might work but you’re dependent on the hardware vendor for support. Maybe that’s OK for you, maybe it’s not.

Below I describe the steps to get DDA working. There’s also a rough video out on my Vimeo channel: Discrete Device Assignment with a GPU in Windows 2016 TPv4.

Setting up Discrete Device Assignment with a GPU

Preparing a Hyper-V host with a GPU for Discrete Device Assignment

First of all, you need a Windows Server 2016 host running Hyper-V. It needs to meet the hardware specifications discussed above, boot from UEFI with VT-d enabled, and you need a PCI Express GPU to work with that can be used for discrete device assignment.

It pays to install the most recent GPU driver, which for our NVIDIA GRID K1 was 362.13 at the time of writing.

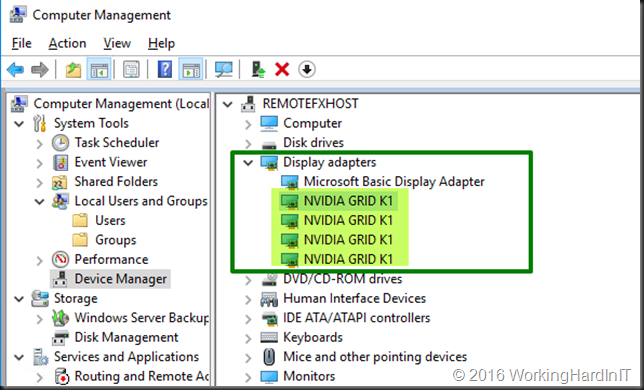

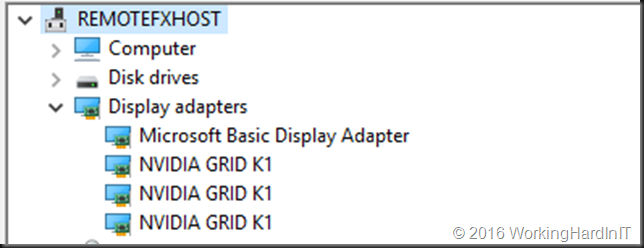

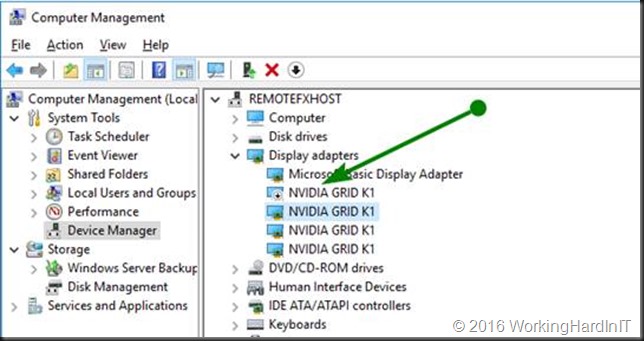

On the host, when your installation of the GPU and drivers is OK, you’ll see 4 NVIDIA GRID K1 display adapters in Device Manager.

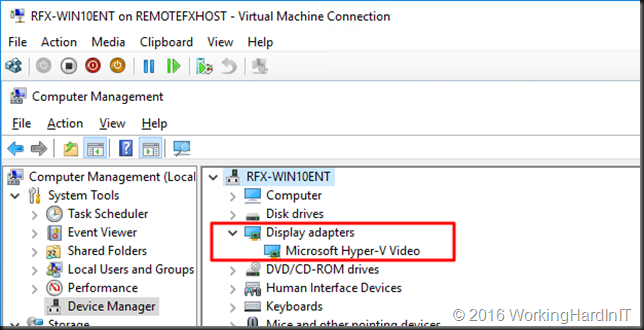

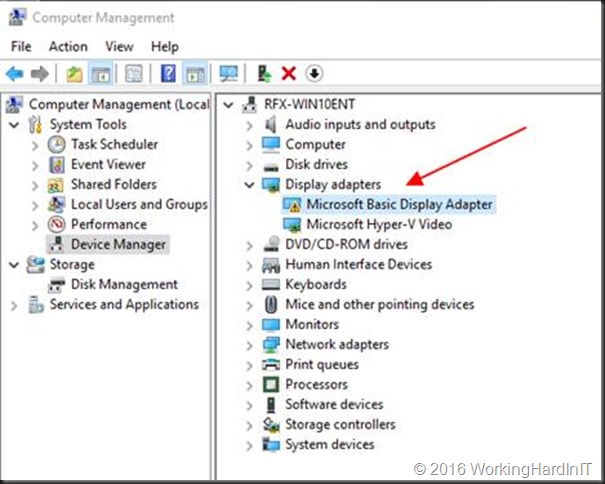

We create a generation 2 VM for this demo. In case you reuse a VM that already has a RemoteFX adapter in use, remove it. You want a VM that only has a Microsoft Hyper-V Video Adapter.

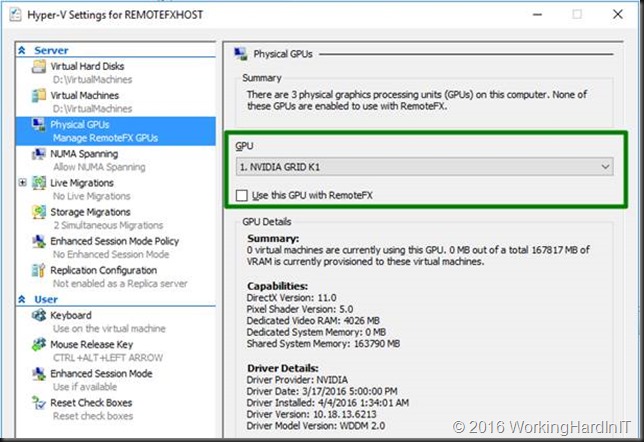

In Hyper-V Manager I also exclude the NVIDIA GRID K1 GPU I’ll configure for DDA from being used by RemoteFX. In this showcase we’ll use the first one.

OK, we’re all set to start with our DDA setup for an NVIDIA GRID K1 GPU!

Assign the PCI Express GPU to the VM

Prepping the GPU and host

As stated above, to have a GPU assigned to a VM we must make sure that the host no longer has use of it. We do this by dismounting the display adapter, which renders it unavailable to the host. Once that is done we can assign that device to a VM.

Let’s walk through this. Tip: run PoSh or the ISE as an administrator.

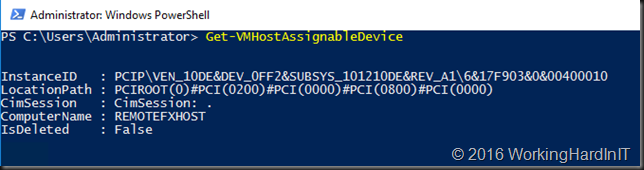

We run Get-VMHostAssignableDevice. This returns nothing as no devices have been made available for DDA yet.

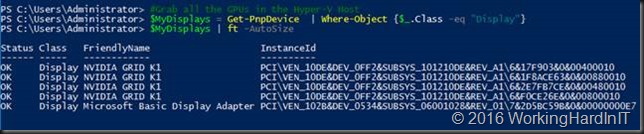

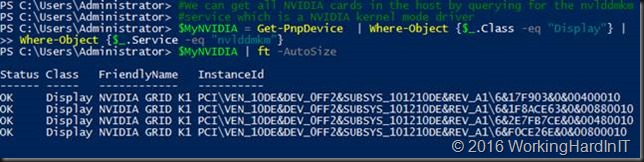

I now want to find my display adapters

#Grab all the GPUs in the Hyper-V Host

$MyDisplays = Get-PnpDevice | Where-Object {$_.Class -eq "Display"}

$MyDisplays | ft -AutoSize

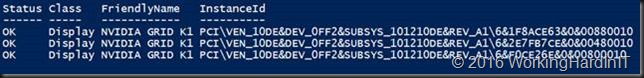

This returns

As you can see it lists all adapters. Let’s limit this to the NVIDIA ones alone.

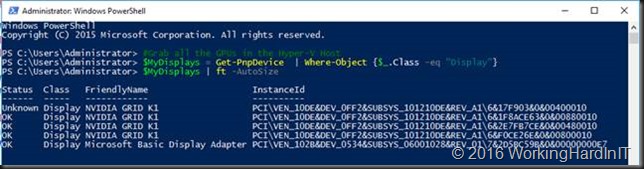

#We can get all NVIDIA cards in the host by querying for the nvlddmkm

#service, which is an NVIDIA kernel mode driver

$MyNVIDIA = Get-PnpDevice | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"}

$MyNVIDIA | ft -AutoSize

If you have multiple types of NVIDIA cards you might also want to filter those out based on the friendly name. In our case with only one GPU this doesn’t filter anything. What we really want to do is exclude any display adapter that has already been dismounted. For that we use the -PresentOnly parameter.

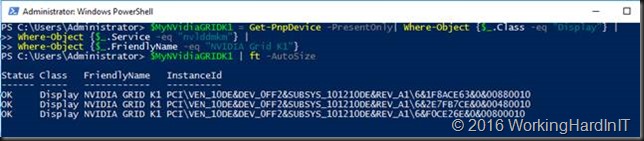

#We actually only need the NVIDIA GRID K1 cards, so let’s filter some more;

#there might be other NVIDIA GPUs. We might already have dismounted some of

#those GPUs before. For this exercise we want to work with the ones that are

#mounted; the parameter -PresentOnly will do just that.

$MyNVidiaGRIDK1 = Get-PnpDevice -PresentOnly | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"} |

Where-Object {$_.FriendlyName -eq "NVIDIA Grid K1"}

$MyNVidiaGRIDK1 | ft -AutoSize

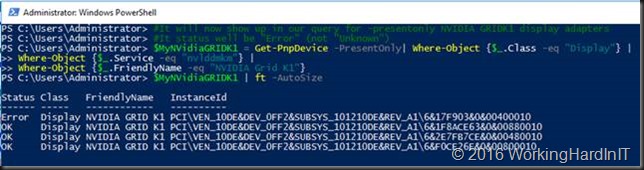

Extra info: when you have already used one of the display adapters for DDA, it shows up with status “Unknown”, as in the screenshot below.

We can filter out any already unmounted device by using the -PresentOnly parameter. As we could have more NVIDIA adaptors in the host, potentially different models, we’ll filter that out with the FriendlyName so we only get the NVIDIA GRID K1 display adapters.

In the example above you see 3 display adapters as 1 of the 4 on the GPU is already dismounted. The “Unknown” one isn’t returned anymore.

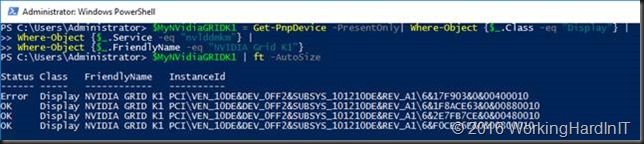

Anyway, when we run

$MyNVidiaGRIDK1 = Get-PnpDevice -PresentOnly | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"} |

Where-Object {$_.FriendlyName -eq "NVIDIA Grid K1"}

$MyNVidiaGRIDK1 | ft -AutoSize

We get an array with the display adapters relevant to us. I’ll use the first (which I excluded from use with RemoteFX). In a zero based array this means I disable that display adapter as follows:

Disable-PnpDevice -InstanceId $MyNVidiaGRIDK1[0].InstanceId -Confirm:$false

Your screen might flicker when you do this. This is actually like disabling it in device manager as you can see when you take a peek at it.

When you now run

$MyNVidiaGRIDK1 = Get-PnpDevice -PresentOnly | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"} |

Where-Object {$_.FriendlyName -eq "NVIDIA Grid K1"}

$MyNVidiaGRIDK1 | ft -AutoSize

Again you’ll see

The disabled adapter has “Error” as a status. This is the one we will dismount so that the host no longer has access to it. As the array is zero based, we grab the data about that display adapter at index 0.

#Grab the data (multi string value) for the display adapter

$DataOfGPUToDDismount = Get-PnpDeviceProperty DEVPKEY_Device_LocationPaths -InstanceId $MyNVidiaGRIDK1[0].InstanceId

$DataOfGPUToDDismount | ft -AutoSize

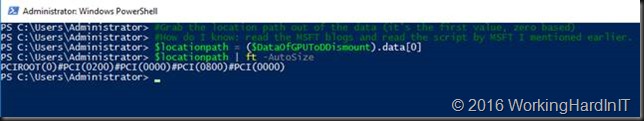

We grab the location path out of that data (it’s the first value, zero based, in the multi string value).

#Grab the location path out of the data (it’s the first value, zero based)

#How do I know: read the MSFT blogs and read the script by MSFT I mentioned earlier.

$locationpath = ($DataOfGPUToDDismount).data[0]

$locationpath | ft -AutoSize

This locationpath is what we need to dismount the display adapter.

#Use this location path to dismount the display adapter

Dismount-VmHostAssignableDevice -locationpath $locationpath -force
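The disable, look-up and dismount steps above can be wrapped in one small helper. This is only a sketch under assumptions: the function name `Dismount-GpuForDda` and its parameter are made up for illustration and are not part of any Microsoft module, and it assumes an elevated session on a Windows Server 2016 Hyper-V host.

```powershell
# Hypothetical helper (illustration only): disables a display adapter,
# looks up its PCIe location path and dismounts it from the host.
function Dismount-GpuForDda {
    [CmdletBinding()]
    param(
        # InstanceId of the display adapter, e.g. $MyNVidiaGRIDK1[0].InstanceId
        [Parameter(Mandatory)]
        [string]$InstanceId
    )

    # Step 1: disable the adapter, like disabling it in Device Manager.
    Disable-PnpDevice -InstanceId $InstanceId -Confirm:$false | Out-Null

    # Step 2: the location path is the first entry of the multi-string value.
    $property = Get-PnpDeviceProperty -KeyName DEVPKEY_Device_LocationPaths -InstanceId $InstanceId
    $locationPath = $property.Data[0]

    # Step 3: dismount it from the host so it becomes assignable via DDA.
    Dismount-VMHostAssignableDevice -LocationPath $locationPath -Force | Out-Null

    # Return the location path; Add-VMAssignableDevice needs it later.
    $locationPath
}
```

Used with the variables from this walkthrough, that would look like `$locationpath = Dismount-GpuForDda -InstanceId $MyNVidiaGRIDK1[0].InstanceId`.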

Once you dismount a display adapter it becomes available for DDA. When we now run

$MyNVidiaGRIDK1 = Get-PnpDevice -PresentOnly | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"} |

Where-Object {$_.FriendlyName -eq "NVIDIA Grid K1"}

$MyNVidiaGRIDK1 | ft -AutoSize

We get:

As you can see the dismounted display adapter is no longer present in display adapters when filtering with -presentonly. It’s also gone in device manager.

Yes, it’s gone in Device Manager. There are only 3 NVIDIA GRID K1 adapters left. Do note that the device is dismounted and as such unavailable to the host, but it is still fully functional and can be assigned to a VM. The remaining NVIDIA GRID K1 adapters can still be used with RemoteFX for VMs.

It’s not “lost” however. When we adapt our query to find the system devices that have “Dismounted” in the friendly name we can still get to it (needed to restore the GPU to the host when needed). This means that -PresentOnly has a different outcome depending on the class. The device is no longer available in the display class, but it is in the system class.

And we can also see it in the System devices node in Device Manager, where it is labeled as “PCI Express Graphics Processing Unit – Dismounted”.

We now run Get-VMHostAssignableDevice again and see that our dismounted adapter has become available to be assigned via DDA.

This means we are ready to assign the display adapter exclusively to our Windows 10 VM.

Assigning a GPU to a VM via DDA

You need to shut down the VM

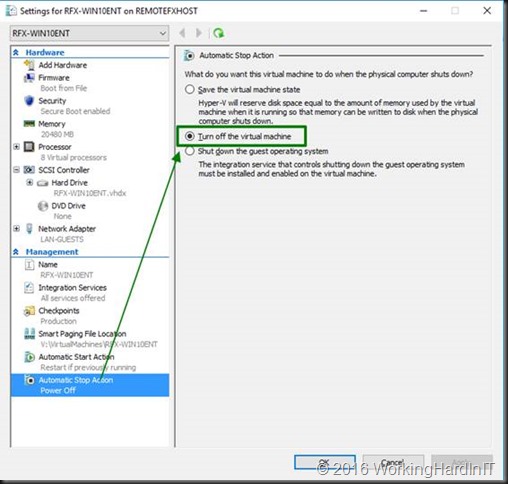

Change the automatic stop action for the VM to “turn off”

This is mandatory or you can’t assign hardware via DDA. It will throw an error if you forget this.
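The stop action can also be set from PowerShell instead of the GUI. A minimal sketch, assuming the Hyper-V module on the host; the helper name `Set-VMStopActionForDda` is hypothetical, while `Set-VM -AutomaticStopAction TurnOff` is the actual setting being changed.

```powershell
# Hypothetical helper (illustration only): makes sure the VM is off and
# sets its automatic stop action to TurnOff, as DDA requires.
function Set-VMStopActionForDda {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)]
        [string]$VMName
    )

    # Settings and assigned devices can only be changed while the VM is off.
    $vm = Get-VM -Name $VMName
    if ($vm.State -ne 'Off') {
        Stop-VM -Name $VMName
    }

    # Assigning a device fails unless the stop action is TurnOff.
    Set-VM -Name $VMName -AutomaticStopAction TurnOff

    # Show the result so the change can be verified.
    Get-VM -Name $VMName | Select-Object Name, State, AutomaticStopAction
}
```

For the VM in this article that would be `Set-VMStopActionForDda -VMName RFX-WIN10ENT`.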

I also set my VM configuration as described in https://blogs.technet.microsoft.com/virtualization/2015/11/23/discrete-device-assignment-gpus/

I give it up to 4GB of memory as that’s what this NVIDIA model seems to support. According to the blog the GPUs work better (or only work) if you set -GuestControlledCacheTypes to true.

“GPUs tend to work a lot faster if the processor can run in a mode where bits in video memory can be held in the processor’s cache for a while before they are written to memory, waiting for other writes to the same memory. This is called “write-combining.” In general, this isn’t enabled in Hyper-V VMs. If you want your GPU to work, you’ll probably need to enable it”

#Let’s set the memory resources on our generation 2 VM for the GPU

Set-VM RFX-WIN10ENT -GuestControlledCacheTypes $True -LowMemoryMappedIoSpace 2000MB -HighMemoryMappedIoSpace 4000MB

You can query these values with Get-VM RFX-WIN10ENT | fl *

We now assign the display adapter to the VM using that same $locationpath

Add-VMAssignableDevice -LocationPath $locationpath -VMName RFX-WIN10ENT
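Before booting the VM it can be reassuring to check what it now has configured. The sketch below is an assumption-laden convenience, not something from the Microsoft blogs: the helper name `Get-VMDdaSummary` is invented, and the VM property names are the ones I expect to see in the `Get-VM | fl *` output, so verify them on your build. `Get-VMAssignableDevice` is the cmdlet that lists the devices assigned to a VM.

```powershell
# Hypothetical helper (illustration only): summarizes the DDA-related
# settings of a VM and the location paths of its assigned devices.
function Get-VMDdaSummary {
    param(
        [Parameter(Mandatory)]
        [string]$VMName
    )

    $vm = Get-VM -Name $VMName

    # Devices currently assigned to the VM through DDA (may be none).
    $devices = @(Get-VMAssignableDevice -VMName $VMName)

    [pscustomobject]@{
        VMName                    = $vm.Name
        AutomaticStopAction       = $vm.AutomaticStopAction
        GuestControlledCacheTypes = $vm.GuestControlledCacheTypes
        LowMemoryMappedIoSpace    = $vm.LowMemoryMappedIoSpace
        HighMemoryMappedIoSpace   = $vm.HighMemoryMappedIoSpace
        AssignedLocationPaths     = @($devices | ForEach-Object { $_.LocationPath })
    }
}
```

Running `Get-VMDdaSummary -VMName RFX-WIN10ENT | fl` should then list the location path we just assigned.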

Boot the VM, login and go to device manager.

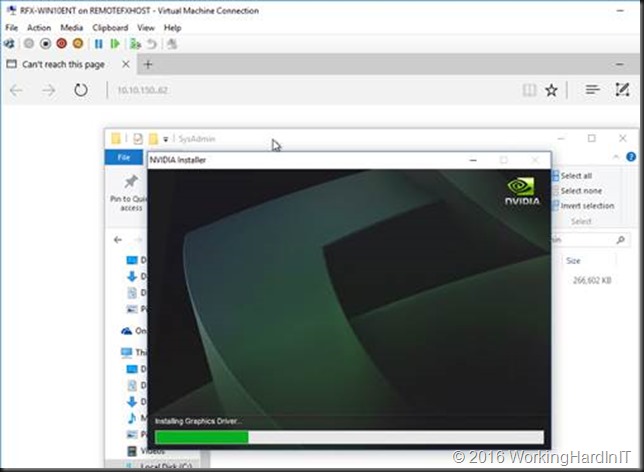

We now need to install the device driver for our NVIDIA GRID K1 GPU, basically the one we used on the host.

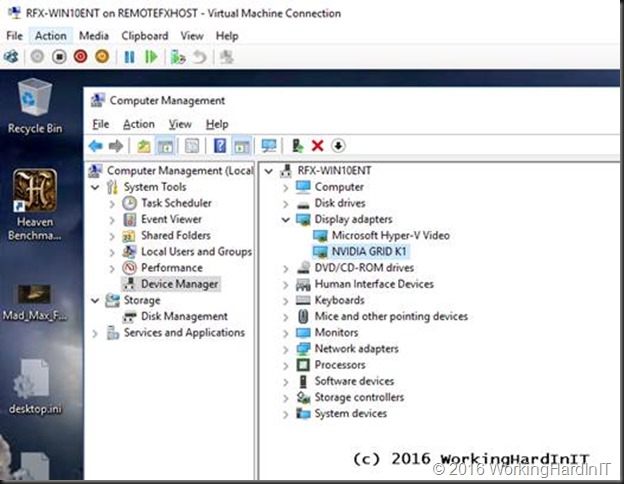

Once that’s done we can see our NVIDIA GRID K1 in the guest VM. Cool!

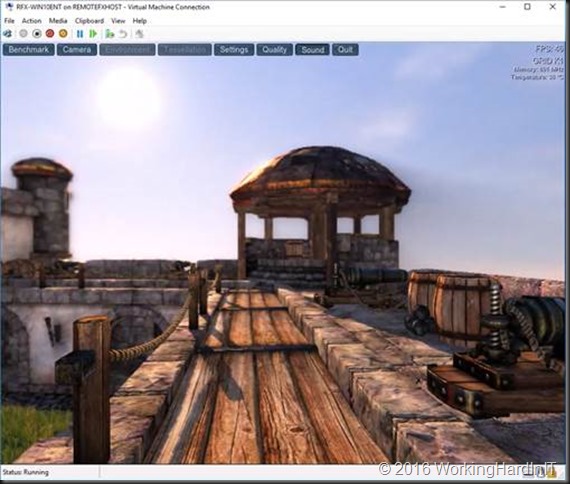

You’ll need a restart of the VM in relation to the hardware change. And the result after all that hard work is a very nice graphical experience compared to RemoteFX.

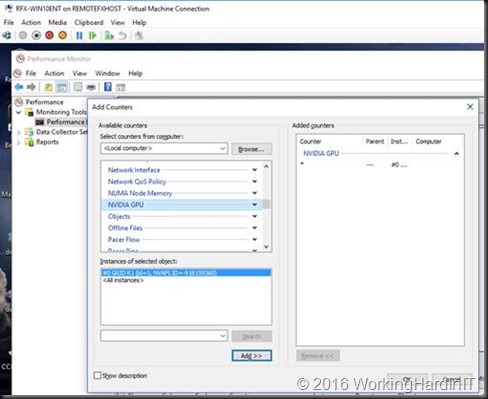

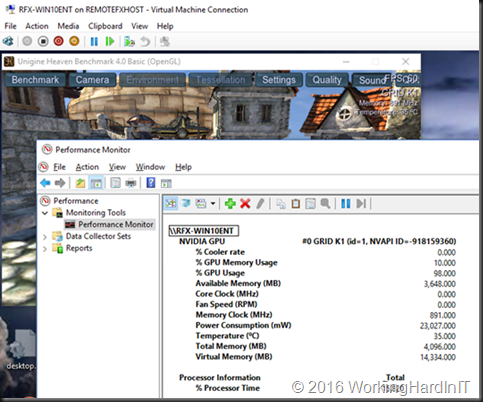

What, you don’t believe it’s using an NVIDIA GPU inside of a VM? Open up perfmon in the guest VM and add counters; you’ll find the NVIDIA GPU one and see you have a GRID K1 in there.

Start some GPU intensive process and see those counters rise.

Remove a GPU from the VM & return it to the host.

When you no longer need a GPU for DDA to a VM you can reverse the process to remove it from the VM and return it to the host.

Shut down the VM guest OS that’s currently using the NVIDIA GPU graphics adapter.

In an elevated PowerShell prompt or ISE we grab the locationpath for the dismounted display adapter as follows

#The wildcard avoids a mismatch between a hyphen and an en dash in the friendly name

$DisMountedDevice = Get-PnpDevice -PresentOnly |

Where-Object {$_.Class -eq "System" -AND $_.FriendlyName -like "PCI Express Graphics Processing Unit*Dismounted"}

$DisMountedDevice | ft -AutoSize

We only have one GPU that’s dismounted so that’s easy. When there are more display adapters unmounted this can be a bit more confusing. Some documentation might be in order to make sure you use the correct one.
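When several adapters are dismounted, listing each one next to its location path makes it easier to pick the right one. A sketch under assumptions: both helper names are invented for illustration, and the wildcard in the friendly name is there because I am not certain whether the real name contains a hyphen or an en dash.

```powershell
# Hypothetical helper (illustration only): lists every dismounted GPU
# together with its location path.
function Get-DismountedGpu {
    Get-PnpDevice -PresentOnly |
        Where-Object { $_.Class -eq 'System' -and $_.FriendlyName -like 'PCI Express Graphics Processing Unit*Dismounted' } |
        ForEach-Object {
            $paths = Get-PnpDeviceProperty -KeyName DEVPKEY_Device_LocationPaths -InstanceId $_.InstanceId
            [pscustomobject]@{
                FriendlyName = $_.FriendlyName
                InstanceId   = $_.InstanceId
                Status       = $_.Status
                LocationPath = $paths.Data[0]
            }
        }
}

# Hypothetical helper (illustration only): returns the names of the VMs
# that have the given location path assigned via DDA.
function Find-VMForLocationPath {
    param(
        [Parameter(Mandatory)]
        [string]$LocationPath
    )

    foreach ($vm in Get-VM) {
        $match = Get-VMAssignableDevice -VMName $vm.Name |
            Where-Object { $_.LocationPath -eq $LocationPath }
        if ($match) { $vm.Name }
    }
}
```

`Get-DismountedGpu | ft -AutoSize` gives the overview, and `Find-VMForLocationPath -LocationPath <path>` tells you which VM, if any, currently holds that adapter.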

We then grab the locationpath for this device, which is at index 0 as it is an array with one entry (zero based). So in this case we could even leave out the index.

$LocationPathOfDismountedDA = ($DisMountedDevice[0] | Get-PnpDeviceProperty DEVPKEY_Device_LocationPaths).data[0]

$LocationPathOfDismountedDA

Using that locationpath we remove the DDA GPU from the VM

#Remove the display adapter from the VM.

Remove-VMAssignableDevice -LocationPath $LocationPathOfDismountedDA -VMName RFX-WIN10ENT

We now mount the display adapter on the host using that same locationpath

#Mount the display adapter again.

Mount-VmHostAssignableDevice -locationpath $LocationPathOfDismountedDA

We grab the display adapter that’s now back as disabled in Device Manager, or in an “Error” status in the display class of the PnP devices.

#It will now show up in our query for -presentonly NVIDIA GRIDK1 display adapters

#Its status will be “Error” (not “Unknown”)

$MyNVidiaGRIDK1 = Get-PnpDevice -PresentOnly | Where-Object {$_.Class -eq "Display"} |

Where-Object {$_.Service -eq "nvlddmkm"} |

Where-Object {$_.FriendlyName -eq "NVIDIA Grid K1"}

$MyNVidiaGRIDK1 | ft -AutoSize

We grab that first entry to enable the display adapter (or do it in device manager)

#Enable the display adapter

Enable-PnpDevice -InstanceId $MyNVidiaGRIDK1[0].InstanceId -Confirm:$false

The GPU is now back and available to the host. When you run Get-VMHostAssignableDevice it won’t return this display adapter anymore.

We’ve enabled the display adapter and it’s ready for use by the host or RemoteFX again. Finally, we set the memory resources & configuration for the VM back to its defaults before I start it again (PS: these defaults are what the values are on a standard VM without ever having any DDA GPU installed. That’s where I got ‘m).

#Let’s set the memory resources on our VM for the GPU to the defaults

Set-VM RFX-WIN10ENT -GuestControlledCacheTypes $False -LowMemoryMappedIoSpace 256MB -HighMemoryMappedIoSpace 512MB
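For repeated lab use, the whole return trip could be sketched as one function. The name `Restore-GpuToHost` is hypothetical, and the way it finds the re-mounted adapter (by matching the location path rather than taking index 0) is my own variation on the steps above, not something the Microsoft blogs prescribe. It assumes the VM is already shut down and that the adapter shows up in the display class right after mounting.

```powershell
# Hypothetical helper (illustration only): removes a DDA GPU from a shut
# down VM, mounts and enables it on the host, and resets the VM settings.
function Restore-GpuToHost {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)]
        [string]$VMName,

        [Parameter(Mandatory)]
        [string]$LocationPath
    )

    # Detach the device from the VM, then mount it on the host again.
    Remove-VMAssignableDevice -LocationPath $LocationPath -VMName $VMName
    Mount-VMHostAssignableDevice -LocationPath $LocationPath

    # The adapter comes back disabled; find it by its location path.
    $adapter = Get-PnpDevice -PresentOnly |
        Where-Object { $_.Class -eq 'Display' } |
        Where-Object {
            $p = Get-PnpDeviceProperty -KeyName DEVPKEY_Device_LocationPaths -InstanceId $_.InstanceId
            $p.Data -contains $LocationPath
        }

    # Enable it again so the host (or RemoteFX) can use it.
    if ($adapter) {
        $adapter | ForEach-Object { Enable-PnpDevice -InstanceId $_.InstanceId -Confirm:$false }
    }

    # Reset the VM to the default values used in this article.
    Set-VM -Name $VMName -GuestControlledCacheTypes $false -LowMemoryMappedIoSpace 256MB -HighMemoryMappedIoSpace 512MB
}
```

With the variables from this section that would be `Restore-GpuToHost -VMName RFX-WIN10ENT -LocationPath $LocationPathOfDismountedDA`.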

Now tell me all this wasn’t pure fun!

“This is mandatory our you can’t assign hardware via DDA. It will throw an error if you forget this”

Are you actually saying that when DDA is used in a VM any reboot of the Host results in a brute “Power Off” of the VM ? Or can you set this back to shutdown or save after you have assigned the device…?

Nope, you cannot do that. It acts as hardware, both in the positive way (stellar performance for certain use cases) and in the negative way (you lose some capabilities you’ve become used to with virtualization). Now do note that this is TPv4, a v1 implementation. We’ll see where this lands in the future. DDA is only for select use cases & needs where the benefits outweigh the drawbacks, and as it breaks through the virtualization layer it is also only for trusted admin scenarios.

Haha, yes, understand. But suppose you add an NVMe this way and then reboot the host while heavy IO is going on… “Power Off” -> Really ??? 🙂

Even if it’s real HW, you don’t need to turn off or cut power to a real HW system either… Same goes for SR-IOV actually, so it just sounds like it’s still in the beta-testing stage for that matter… Put differently: DDA is totally useless if Power Off will be your only choice @RTM…

I would not call that totally useless 🙂 A desktop is not totally useless because it can’t save state when you have a brown out. And you also manage a desktop; for planned events you shut it down. The use case determines what’s most important.

Shutdown wasn’t an option. Byebye CAU in a VDI environment… Or would you go shut down each VM manually? I guess it will get better by the time it RTMs. I reckon MS understands that as well…

Depends on use case. Ideally it comes without any restrictions. Keep the feedback coming. MSFT reads this blog every now and then 🙂 and don’t forget about uservoice https://windowsserver.uservoice.com/forums/295047-general-feedback !

So do you think some of the newer graphics cards that will “support” this type of DDA will be able to expose segments of their hardware? let’s say, an ATI FirePro s7150. It has the capability to serve ~16 users, but today, only one VM can use the entire card.

It’s early days yet and I do hope more information both from MSFT and card vendors will become available in the next months.

I’m super close on this. I have the GPU assigned (a K620), but when I install the drivers and reboot, windows is ‘corrupt’. It won’t boot, and it’s not repairable. I can revert to snapshots pre-driver, but that’s about it. I’ve tried this in a Win 8 VM and a Win 10 VM. Both generation 2.

I have not seen that. Some issues with Fast Ring Windows 10 releases such as driver issues / errors but not corruption.

I think my issue is due to my video card. I’m testing this with a K620. I’m unclear if the K620 supports Access Control Services. I’m curious, your instructions use the -force flag on the Dismount-VmHostAssignableDevice command. Was the -force required with your GRID card as well? That card would absolutely support Access Control Services, so I’m wondering if the -force was included for the card you were using, or for the PCI Express architecture. Thanks again for the article, I’m scrambling to find a card that supports Access Control Services to test further. I’m using the 620 because it does not require 6-pin power (my other Quadro cards do).

Hi, I’m still trying to get the details from MSFT/NVIDIA, but without the force it doesn’t work and throws an error. You can always try that. It’s very unclear what’s exactly supported and what not and I’ve heard (nor read) contradicting statements by the vendors involved. Early days yet.

The error is:

The operation failed.

The manufacturer of this device has not supplied any directives for securing this device while exposing it to a virtual

machine. The device should only be exposed to trusted virtual machines.

This device is not supported when passed through to a virtual machine.

Hi, I’m wondering if you have any information or experience with using DDA combined with windows server remoteApps technology. I have set up a generation 2 Windows 10 VM with a NVIDIA Grid K2 assigned to it. Remote desktop sessions work perfectly, however my remoteApp sessions occasionally freeze with a dwm.exe appcrash. I’m wondering if this could be something caused by the discrete device assignment? Are remoteApps stable with DDA?

I also used a PowerEdge R730 and a Tesla K80. Everything goes fine following your guide to the letter, until installing the driver on the VM, where I get a Code 12 error “This device cannot find enough free resources that it can use. (Code 12)” in Device Manager (Problem Status : {Conflicting Address Range}

The specified address range conflicts with the address space.)

Any ideas what might be causing this, the driver is the latest version, and installed on the host without problems.

Hi, I have kinda the same problem, same error msg, but on an IBM x3650 M3 with a GTX 970 (the thing is, a GTX 960 works well..). Did you fix it in any way? Thx in advance =))

Same here with the R730 and a Tesla K80. Just finished up the above including installing Cuda and I get the same Code 12 error. Anyone figure out how to clear this error up?

Hi there,

i have the same problem with a HP DL 380p Gen.8:

I had the Problem in the HOST System too,

there I had to enable the “PCI Express 64-BIT BAR Support” in the BIOS. Then the card works in the HOST system.

But not in the VM.

Nice read. I’ve been looking around for articles about using pass through with non-Quadro cards, but haven’t been able to find much. Yours is actually the best I’ve read. By that I mean two NVIDIA retail GeForce series cards, one for the host, one for a pass through to a VM. From what I’ve read I don’t see anything to stop it working, so long as the guest card is 700 series or above, since the earlier cards don’t have support. Is that a fair assessment?

Hi. I have an error when Dismount-VmHostAssignableDevice: “The current configuration does not allow for OS control of the PCI Express bus. Please check your BIOS or UEFI settings.” What check in BIOS? Or maybe try Uninstall in Device manager?

Hello, did you find a solution to this problem?

I have same problem on my HP Z600 running Windows Server 2016.

I assigned a K2 GPU to a VM but now I am not able to boot the VM anymore…

I will get an error that a device is assigned to the VM and therefore it cannot be booted.

Cluster of 3x Dell R720 on Windows Server 2016, VM is limited to a single Node which has the K2 card (the other two node don’t have graphics cards yet).

Sure you didn’t assign it to 2 VMs by mistake? If both are shut down you can do it …

It looks like it just won’t work when the VM is marked as highly available. When I remove this flag and store it on a local HDD of a cluster node it works.

Do you know if HP m710x with Intel P580 support DDA?

No I don’t. I’ve only used the NVIDIA GRID/Tesla series so far. Ask your HP rep?

Tried to add an NVIDIA TESLA M10 (GRID) card (4xGPU) to 4 different VMs. It worked flawlessly but after that I could not get back all the GPUs when I tried to remove them from the VMs. After Remove-VMAssignableDevice the GPU disappeared from the VM Device Manager but I could not mount it back at the host. When listing it shows the “System PCI Express Graphics Processing Unit – Dismounted” line with the “Unknown” status. The GPU disappeared from the VM but cannot be mounted and enabled as per your instructions. GPU disappeared? What could possibly have caused this?

I have not run into that issue yet. Sorry.

This is great work and amazing. I have tried with NVIDIA Quadro K2200 and able to use OpenGL for apps I need.

One thing I noticed, Desktop Display is attached to Microsoft Hyper V Video and dxdiag shows it as primary video adapter. I am struggling to find a way if HYper V Video could be disabled and VM can be forced to use NVIDIA instead for all Display processing as primary video adapter. Thoughts?

Well, my personal take on this it’s not removable and it will function as it does on high end systems with an on board GPU and a specialty GPU. It used the high power one only when needed to save energy – always good, very much so on laptops. But that’s maybe not a primary concern. If your app is not being served by the GPU you want it to be serviced by you can try and dive into the settings in the control panel / software of the card, NVIDIA allows for this. Look if this helps you achieve what you need.

My vm is far from stable with a gpu through dda. (Msi r9 285 Gaming 2Gb). Yes it does work, performance is great, sometimes the vm locks up and gives a gpu driver issue. I dont get errors that get logged, just reboots or black/blue screens. Sometimes the vm crashes and comes online during the connection time of a remote connection. (Uptime reset).

I dont know if it is a problem with Ryzen. (1600x) 16Gb gigabyte ab350 gaming 3.

Launching HWiNFO64 within the VM completely locks up the host and the VMs. Outside the VM, no problems.

Still great guide, the only one I could find.

Vendors / MSFT need to state support – working versus supported and this is new territory.

I disabled ULPS this morning to prevent the GPU from idling. The VM did stay online for over 4 hours, but still at some point it goes down. Here are all the error codes of the bluescreens -> http://imgur.com/a/vNWuf

It seems like a driver issue to me.

when reading “Remove a GPU from the VM & return it to the host.” there is a typo.

Where-Object {$_.Class -eq “System” -AND $_.FriendlyName -like “PCI Express Graphics Processing Unit – Dismounted”}

the –, should be –

I got stuck when trying to return the gpu back to the main os, this helped

I see your website formats small -‘s as big ones

hmm now it doesnt, anyway, the –, should be –

(guess i made a typo myself first)

ok something weird is going on..

We are running the same setup with Dell 730 and Grid K1, all the setup as you listed works fine and the VM works with the DDA but after a day or so the grid inside the VM reports “Windows has stopped this device because it has reported problems. (Code 43)”

I have read that NVidia only support Grid k2 and above with DDA, so I am wondering if that’s the reason the driver is crashing?

We are running 22.21.13.7012 driver version

Have you seen this occur in your setup

It’s a lab setup only nowadays. The K1 is getting a bit old and there are no production installs I work with using that today. Some drivers do have known issues. Perhaps try R367 (370.16), the latest update of the branch that still supports K1/K2 with Windows Server 2016.

Thanks for your quick reply,

Yes it is an older card, we actually purchased this card some time ago for use with a Windows 2012 R2 RDS session host not knowing that it wouldn’t work with remotefx through session host.

We are now hoping to make use of it in server 2016 remotefx but I don’t think this works with a session host either, so are now testing out DDA.

We installed version 370.12 which seems to install driver version 22.21.13.7012 listed in Device manager.

I will test this newer version and let you know the results

Thanks again.

Did a quick check:

RemoteFX & DDA with 22.21.13.7012 works and after upgrading to 23.21.13.7016 it still does. Didn’t run it for longer, naturally. I have seen error 43 with a RemoteFX VM a few times, but that’s immediate and I have never found a good solution other than replacing the VM with a clean one. Good luck.

Hello, could you write more about how to configure the BIOS? Whether or not to enable SR-IOV, and what else will be needed.

Need help setting up the BIOS Motherboard “SuperMicro X10DRG-Q”, GPU nVIDIA K80

I assigned the video card TESLA k80, it is defined in the virtual machine, but when I look dxdiag I see an error

Hi,

I have attached a Grid K1 card via DDA to a Windows 10 VM and it shows up fine and installs drivers OK in the VM but the VM still seems to be using the Microsoft Hyper-V adapter for video and rendering (Tested with Heaven Benchmark). GPU Z does not pick up any adapter. When I try to access the Nvidia Control panel I get a message saying “You are not currently using a display attached to an Nvidia GPU” Host is Windows Server 2016 Standard with all updates.

If anyone has any ideas that would help a lot, thanks.

Hi Everybody,

Someone can help me out here? 🙂

I have a “problem” with the VM console after implementing DDA.

When installed the drivers on the HyperV host and configured DDA on the host and assigned a GPU to the VM that part works fine.

After installing the drivers on the VM to install the GPU the drivers gets installed just fine.

But after installing and a reboot of the VM I cannot manage the VM through the hyper-V console and the screen goes black.

RDP on the VM works fine.

What am I doing wrong here?

My setup is:

Server 2016 Datacenter

Hyper-V

HP Proliant DL380.

NVIDIA Tesla M10

128 GB

Profile: Virtualisation Optimisation.

I have tested version 4.7 NVIDIA GRID (on host and VM) and 6.2 NVIDIA Virtual GPU Software (on host and VM).

Kind regards

Does the GRID K1 need an NVIDIA vGPU license? I’m considering purchasing a K1 on eBay but am concerned that once I install it in my server the functionality of the card will be limited w/o a license. Is their licensing “honor” based? My intent is to use this card in a DDA configuration. If the functionality is limited I will likely need to return it. Please advise. Thanks!

Nah, that’s an old card pre-licensing era afaik.

Thanks! Looks like I have this installed per the steps in this thread – a BIG THANK YOU! My guest VM (Win 10) sees the K1 assigned to it but is not detected on the 3D apps I’ve tried. Any thoughts on this?

I was reading some other posts on nvidia’s gridforums and the nvidia reps said to stick with the R367 release of drivers (369.49); which I’ve done on both the host and guest VM (I also tried the latest 370.x drivers). Anyway, I launch the CUDA-Z utility from the guest and no CUDA devices found. Cinebench sees the K1 but a OpenGL benchmark test results in 11fps (probably b/c it’s OpenGL and not CUDA). I also launch Blender 2.79b and 2.8 and it does not see any CUDA devices. Any thoughts on what I’m missing here?

I’m afraid no CUDA support is there with DDA.

Thanks for the reply. I did get CUDA to work by simply spinning up a new guest… must have been something corrupt with my initial VM. I also use the latest R367 drivers w/ no issue (in case anyone else is wondering).

Good to know. Depending on what you read CUDA works with passthrough or is for shared GPU. The 1st is correct it seems. Thx.

Great post, thank you for the info. My situation is similar but slightly different. I’m running a Dell PE T30 (it was a black Friday spur of the moment buy last year) that I’m running windows 10 w/Hyper-V enabled. There are two guests, another Windows 10 which is what I use routinely for my day-to-day life, and a Windows Server 2016 Essentials. This all used to be running on a PE2950 fully loaded running Hyper-V 2012 R2 core…

When moving to the T30 (more as an experiment) I was blown away at how much the little GPU on the T30 improved my Windows 10 remote desktop experience. My only issue: not enough horsepower. It only has two cores and I’m running BlueIris video software, file/print service and something called PlayOn for TV recording. This overwhelmed the CPU.

So this year I picked up a T130 with Windows Server 2016 Standard, with four cores and 8 threads. But the T130 does not have a GPU, so I purchased a video card and put it in. I fired it up and, no GPU for the Hyper-V guests. I had to add the Remote Desktop role to the 2016 server to let Hyper-V use it, and then, yup, I needed an additional license at an additional fee, which I don’t really want to pay if I don’t have to… So my question:

– Is there an EASY way around this so I can use WS2016S as the host and the GPU for both guests but not have to buy a license? I say easy because DDA sounds like it would meet this need (for one guest?), but it also seems like more work than I’d prefer to embark on.

OR

– Do I just use Windows 10 as my host and live with the limitations? It sounds like the only one I care about is virtualizing GPUs using RemoteFX. But I’m also confused about this, since Windows 10 on the T30 is using the GPU to make my remote experience better. So I know I’m missing some concept here…

Thanks for the help – Ed.

I cannot dismount my GRID K1 as per your instructions.

My setup is as follows:

Motherboard: Supermicro X10DAi (SR-IOV enabled)

Processor: Intel Xeon E5-2650 v3

Memory: 128GB DDR4

GPU: Nvidia Grid K1

When I try to dismount the card from the host I get the following:

Dismount-VmHostAssignableDevice : The operation failed.

The current configuration does not allow for OS control of the PCI Express bus. Please check your BIOS or UEFI settings.

At line:1 char:1

+ Dismount-VmHostAssignableDevice -force -locationpath $locationpath

+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

+ CategoryInfo : InvalidArgument: (:) [Dismount-VMHostAssignableDevice], VirtualizationException

+ FullyQualifiedErrorId : InvalidParameter,Microsoft.HyperV.PowerShell.Commands.DismountVMHostAssignableDevice

This is either a BIOS (UEFI) configuration issue that can be corrected or a BIOS (UEFI) that does not fully support OS control of the bus. Check the BIOS settings, but normally the vendors should specify which gear is compatible with DDA. If it is not, a BIOS upgrade might introduce support. But this is a game of dotting the i’s and crossing the t’s before getting the hardware. Opportunistic lab builds with assembled gear might work, but no guarantees are given.

OK, I now have 2 NVIDIA GRID K1 cards installed in an HP DL380p server. 6 GPUs are showing healthy, 2 are showing error code 43. I have tried every variation of driver, BIOS and firmware, and am at my wits’ end. I know these cards are not faulty.

Hi, thanks for the post.

I followed all the steps in the post, and I get an error when starting the Windows 10 Generation 2 VM. I get the following message:

[Content]

‘VMWEBSCAN’ failed to start.

Virtual Pci Express Port (Instance ID 2614F85C-75E4-498F-BD71-721996234446): Failed to Power on with Error ‘A hypervisor feature is not available to the user.’.

[Expanded Information]

‘VMWEBSCAN’ failed to start. (Virtual machine ID B63B2531-4B4D-4863-8E3C-D8A36DC3E7AD)

‘VMWEBSCAN’ Virtual Pci Express Port (Instance ID 2614F85C-75E4-498F-BD71-721996234446): Failed to Power on with Error ‘A hypervisor feature is not available to the user.’ (0xC035001E). (Virtual machine ID B63B2531-4B4D-4863-8E3C-D8A36DC3E7AD)

I am using a PowerEdge R710 gen2 and an NVIDIA Quadro P2000 that is supposed to support DDA.

Well, make sure you have the latest BIOS. But it is old hardware, and support for DDA is very specific to models, hardware, Windows versions, etc. The range of supported hardware is small. Verify everything: CPU model, chipset, SR-IOV, VT-d/AMD-Vi, MSI/MSI-X, 64-bit PCI BAR, IRQ remapping. I would not even try with Windows Server 2019. That is only for the Tesla models; not even GRID is supported. Due to the age of the server and the required BIOS support, I’m afraid this might never work, and even if it does, it can break at any time. It is trial and error. You might get lucky, but it will never be supported and it might break at every update.

Pingback: How-to install a Ubuntu 18.04 LTS guest/virtual machine (VM) running on Windows Server 2019 Hyper-V using Discrete Device Assignment (DDA) attaching a NVIDIA Tesla V100 32Gb on Dell Poweredge R740 – Blog

Any idea if this’ll work on an iGPU such as Intel’s UHD? I can’t find anything about it on the net.

Can you add multiple GPUs to the VM?

As long as the OS can handle it, sure: multiple GPUs, multiple NVMe devices …
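A minimal sketch of what assigning several devices to one VM could look like, using the same DDA cmdlets as the post. The VM name and both location paths are hypothetical placeholders, the MMIO sizes are only illustrative and must be sized for your own devices, and the sketch assumes the devices have already been disabled on the host and that the host actually supports DDA.

```powershell
# Hypothetical values: replace with your own VM name and device location paths.
$vmName = 'DDA-VM01'
$locationPaths = @(
    'PCIROOT(0)#PCI(0300)#PCI(0000)',   # placeholder path for the first device
    'PCIROOT(0)#PCI(0301)#PCI(0000)'    # placeholder path for the second device
)

# The VM has to be off while devices are assigned.
Stop-VM -Name $vmName -Force

# Illustrative MMIO sizes only; several GPUs usually need more high MMIO space.
Set-VM -Name $vmName -GuestControlledCacheTypes $true `
    -LowMemoryMappedIoSpace 3Gb -HighMemoryMappedIoSpace 33280Mb

foreach ($path in $locationPaths) {
    # Take the device away from the host, then hand it to the VM.
    Dismount-VMHostAssignableDevice -Force -LocationPath $path
    Add-VMAssignableDevice -LocationPath $path -VMName $vmName
}

# List the devices that are now assigned to the VM.
Get-VMAssignableDevice -VMName $vmName
```

Each device still needs its own driver inside the guest, just as with a single assigned GPU.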

Hi

I need your advice.

We are planning to create a Hyper-V cluster based on two HP DL380 servers. Both servers will have an NVIDIA GPU card inside.

The question is whether it’s possible to create a Hyper-V cluster based on those 2 nodes, with most VMs highly available and one VM on each node without high availability but with DDA to the GPU.

So, if I understand this thread and the comments correctly, I have to store VMs on shared storage as usual, but VMs with DDA have to be stored on a local drive of the node. And I have to unflag HA for VMs with DDA. That’s all.

Am I right?

Thanks in advance

You can also put them on shared storage, but they cannot live migrate. The auto-stop action has to be set to shutdown. Whether you can use local storage depends on the storage array. On S2D, having storage other than the OS outside of the virtual disks from the storage pool is not recommended. MSFT wants to improve this for DDA, but when or if that will be available in vNext is unknown. Having DDA VMs on shared storage also causes some extra work and planning if you want them to work on another node. Also see https://www.starwindsoftware.com/blog/windows-2016-makes-a-100-in-box-high-performance-vdi-solution-a-realistic-option

“Now do note that the DDA devices on other hosts, and as such also on other S2D clusters, have other IDs, and the VMs will need some intervention (removal, cleanup & adding of the GPU) to make them work. This can be prepared and automated, ready to go when needed. When you leverage NVMe disks inside a VM, the data on there will not be replicated this way. You’ll need to deal with that in another way if needed, such as a replica of the NVMe in a guest and an NVMe disk on a physical node in the standby S2D cluster. This would need some careful planning and testing. It is possible to store data on an NVMe disk and later assign that disk to a VM. You can even do Storage Replica between virtual machines; one does have to make sure the speed & bandwidth to do so are there. What is feasible and will materialize in real life remains to be seen, as what I’m discussing here are already niche scenarios. But the beauty of testing & labs is that one can experiment a bit. Homo ludens, playing is how we learn and understand.”
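The “removal, cleanup & adding of the GPU” intervention could be prepared roughly as follows. This is only a sketch under assumptions: the VM name and the location path of the GPU on the new node are hypothetical placeholders, the VM is assumed to be off and already registered on that node, and the GPU there is assumed to be disabled on the host. `TurnOff` is the stop action Microsoft’s DDA guidance uses; the point is that a DDA VM cannot save its state.

```powershell
# Hypothetical values: replace with your own VM name and the GPU path on the new node.
$vmName = 'DDA-VM01'
$newLocationPath = 'PCIROOT(0)#PCI(0300)#PCI(0000)'   # placeholder path

# A VM with an assigned device cannot save state, so avoid the Save stop action.
Set-VM -Name $vmName -AutomaticStopAction TurnOff

# Remove the stale assignments that still point at the previous host's devices.
foreach ($device in Get-VMAssignableDevice -VMName $vmName) {
    Remove-VMAssignableDevice -LocationPath $device.LocationPath -VMName $vmName
}

# Dismount the GPU from this host and assign it to the VM.
Dismount-VMHostAssignableDevice -Force -LocationPath $newLocationPath
Add-VMAssignableDevice -LocationPath $newLocationPath -VMName $vmName

Start-VM -Name $vmName
```

Keeping a script like this per node, with that node’s own location path filled in, is one way to have the intervention ready to go when needed.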

Many thanks for your reply. Very useful.

And what about GPU virtualization (GPU-PV)? The idea is to install a Windows 10 VM and use the GPU card in it. We’ll install a CAD system on this VM, and users will access it via RDP.

Will it work fine?

Hyper-V only has RemoteFX, which is disabled by default because, being older technology, it has some security risks. Then there is DDA. GPU-PV is not available, and while MSFT has plans for or is working on improvements, I know of no roadmap or timeline details for this.

Pingback: How to Customize Hyper-V VMs using PowerShell

Hi,

I am trying to use DDA on my Dell T30 with a built-in i7-6700K.

Unfortunately, I get the following error when I try to dismount my desired device. Any idea? Is the system not able to use DDA?

Dismount-VMHostAssignableDevice : The operation failed.

The current configuration does not allow for OS control of the PCI Express bus. Please check your BIOS or UEFI settings.

At line:1 char:1

+ Dismount-VMHostAssignableDevice -LocationPath “PCIROOT(0)#PCI(1400)#U …

+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

+ CategoryInfo : InvalidArgument: (:) [Dismount-VMHostAssignableDevice], VirtualizationException

+ FullyQualifiedErrorId : InvalidParameter,Microsoft.HyperV.PowerShell.Commands.DismountVMHostAssignableDevice

Kind regards

Jens

I have only used DDA with certified OEM solutions. It is impossible for me to find out which combinations of MoBo/BIOS/GPU cards will work and are supported.

I have a Dell T30 with an i7-6700K and have enabled all virtualization settings in the BIOS.

When I try to dismount the card from the host I get the following:

Dismount-VmHostAssignableDevice : The operation failed.

The current configuration does not allow for OS control of the PCI

Has anyone got this running on a Dell T30?
