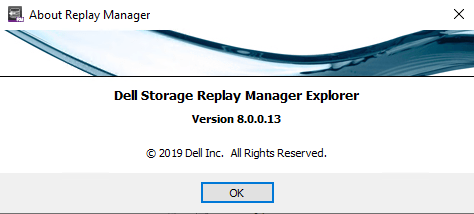

DELL Released Replay Manager 8.0

On September 4th, 2019, DELL released Replay Manager 8.0 for Microsoft Servers. This brings us official Windows Server 2019 support. You can download it at https://www.dell.com/support/home/us/en/04/product-support/product/storage-sc7020/drivers and you can find the Dell Replay Manager Version 8.0 Administrator’s Guide and release notes at https://www.dell.com/support/home/us/en/04/product-support/product/storage-sc7020/docs

I have Replay Manager 8.0 up and running in the lab and in production. The upgrade was fast and easy, and everything kept working as expected. The good news is that the Replay Manager 8 service is compatible with the Replay Manager 7.8 Manager and vice versa. This means there was no rush to upgrade everything at once. We could do smoke testing at a relaxed pace before we upgraded all hosts.

Replay Manager 8.0 adds official support for Windows Server 2019 and Exchange Server 2019. I had already been testing Windows Server 2019 with Replay Manager 7.8 for many months, taking snapshots every 30 minutes with very few issues. But now we have official support. Replay Manager 8.0 also introduces support for SCOS 7.4.

No improvements with Hyper-V backups

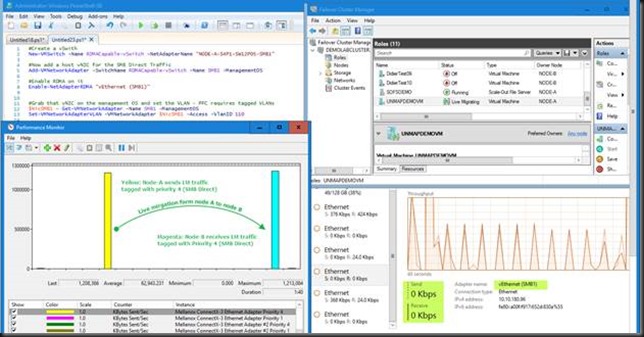

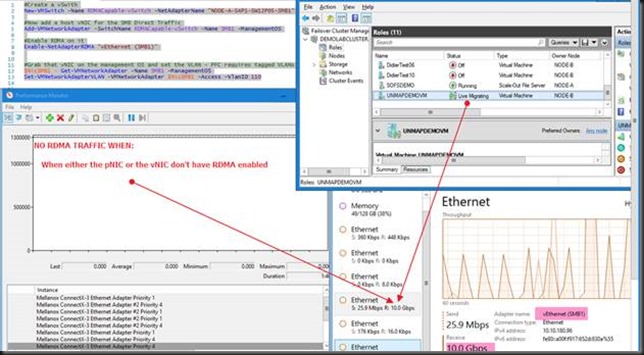

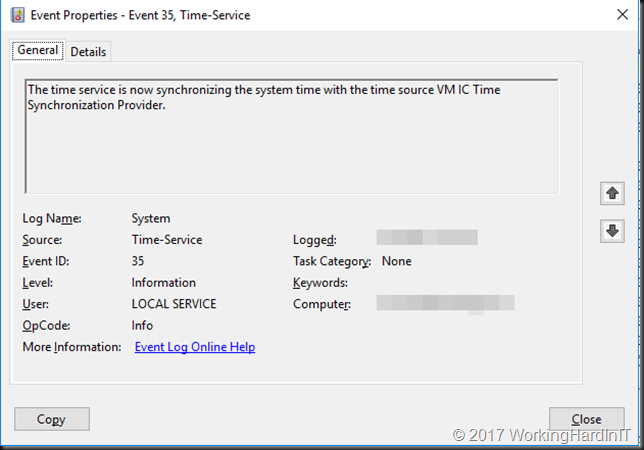

Now, we don’t have SCOS 7.4 running yet. It will take another few weeks for it to reach general availability. But for now, with both Windows Server 2016 and 2019 hosts, we noticed the following disappointing behaviour with Hyper-V workloads. Replay Manager 8 still acts as a Windows Server 2012 R2 requestor (backup software) and hence isn’t as fast and effective as it could be. I do not expect SCOS 7.4 to make a difference here. If you leverage the hardware VSS provider with backup software that does support the Windows Server 2016/2019 backup mechanisms for Hyper-V, this is not an issue. For that I mostly leverage Veeam Backup & Replication.

Unless I am missing something here, it is a missed opportunity that, after so many years, the Replay Manager requestor still does not support the native Hyper-V backup capabilities of Windows Server 2016/2019. And that is without even mentioning that backups of individual Hyper-V VMs need modernization in Replay Manager to deal with VM mobility. See https://blog.workinghardinit.work/2017/06/02/testing-compellent-replay-manager-7-8/. I also won’t go into Live Volumes again, as I did then. Since I leverage Replay Manager as a secondary backup method, not a primary one, I can live with this. But it could be so much better. I really need a chat with the PM for Replay Manager. Maybe at Dell Technologies World 2020, if I can find a sponsor for the long-haul flight.