Introduction

I’ve already used NVMe disks on a modest scale for code build servers, SQL Server deployments (physical or virtual) and basically any workload where the benefits of better storage performance outweigh the loss of high availability (clustering, live migration). Workstation use is a good example: I can run a pretty nice lab on my workstation and not feel miserable due to disk IO contention. Let’s see what NVMe Storage for Backup Targets can do!

For the price you pay and the problems they solve, the performance benefits of NVMe are a great deal. Just run Windows Server 2016 with nested Hyper-V on an NVMe disk as a developer with a dozen VMs for AD, IIS, middleware and SQL Server. You’ll see what it means. Anything less than 8 cores, DDR4 and a modern motherboard need not apply by the way.

We’re looking forward to NVMe deployments where highly available storage is possible (shared or shared nothing) for virtualized workloads. We’re seeing the first examples of this in certain Storage Spaces Direct deployments with Windows Server 2016. I’m pretty sure the industry will push NVMe usage to new heights in such scenarios in the coming years with NVMe over Fabrics.

Recently we’ve been looking at NVMe disks as a high performance backup tier in our backup storage targets. Yup, read on. Sometimes I get this crazy idea that becomes an itch I need to scratch or, better, test out in the lab.

NVMe Storage for Backup Targets

When needed you can build a pretty solid backup target with cheap, “high capacity” SATA SSDs as well. The thing is that you’ll be limited by the capabilities of SATA itself. You also need decent controllers to mitigate those limitations, which adds to the cost. SATA isn’t exactly the best choice for high throughput, concurrent workloads either. You can move up to SAS in order to go beyond the limits of SATA for SSD but the cost goes up accordingly.

When it comes to cost versus performance, that’s where PCIe shines brighter than anything we have today. Sure, it’s not yet feasible to use it for large data volumes but we’re not looking at this for the bulk of our VMs or data. We’re looking at a use case where we need stellar performance in a reasonable volume we can drop into a server.

Some people will shout in a visceral reaction (*) that I’m nuts spending that amount of money on backup storage. Well no, I’m not. You have to look at the needs of the use case and the economics of achieving a solution. For a company that needs to back up a number of stateful virtual machines every 10 minutes and wants to keep 12-24 or so restore points around, NVMe disks can deliver a very cost effective solution. You’re probably running those VMs on highly available, shared tier 1 storage already, the cost of which is a multiple of the cost of a couple of NVMe disks.

Let’s look at an example. Say we’re leveraging Scale-Out Repositories with Veeam Backup and Replication and we have 3 to 4 repositories. Dropping 1 or 2 NVMe disks into every node can deliver 6 to 8 TB of stellar performance to your existing setup. In many of my deployments we get all the other resources in those nodes cost effectively because we typically recycle our Hyper-V hosts. So cores, memory and bandwidth are plentiful without huge investments in new dedicated servers. If you do buy some of the high density kit, the cost of memory and the CPU cores won’t kill the project.

So am I nuts for trying or not? Heck no, we’ll learn a lot and I’m sure prices will drop and capacities will rise without sacrificing performance.
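To put the "every 10 minutes, 12-24 restore points" figure in perspective, here is a back-of-the-envelope sketch in Python. The function name is my own and it simply assumes evenly spaced restore points, so treat the result as an approximate upper bound on how far back the oldest point reaches.

```python
from datetime import timedelta


def retention_window(interval_minutes: int, restore_points: int) -> timedelta:
    """Approximate time span covered by evenly spaced restore points."""
    return timedelta(minutes=interval_minutes * restore_points)


# The interval and restore point counts come from the use case described above.
for points in (12, 24):
    print(f"{points} restore points -> {retention_window(10, points)}")
```

In other words, this tier only has to hold a few hours of very frequent restore points, which is why a modest amount of very fast storage can be enough.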

Really, the price isn’t that bad. Just look on Amazon for the cheapest pricing of Intel 750 series NVMe disks of 1.2 TB and come back.

Today you won’t be buying 20 of them anyway to put in a JBOD as those don’t exist yet. You’ll put 1 or 2 in one or more backup target servers to provide high performance backup storage.

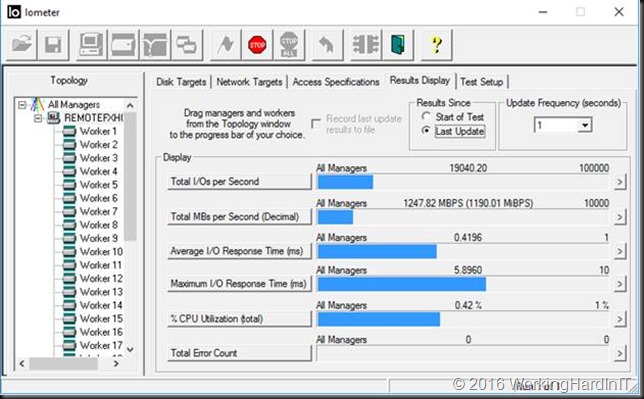

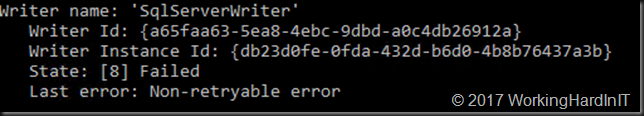

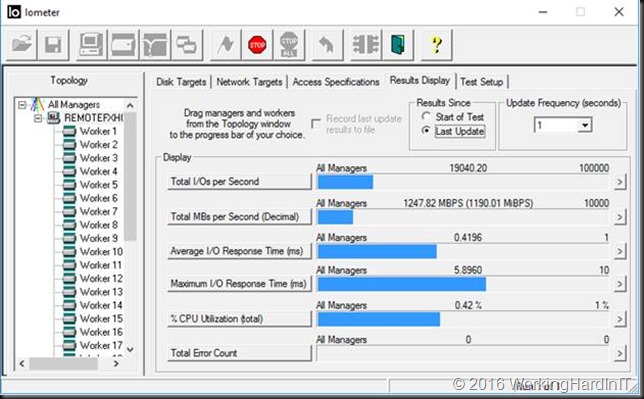

Testing 64K 100% sequential writes with 8 worker nodes enabled … not too shabby
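If you want a quick feel for this access pattern on your own disk, the following is a rough Python sketch of 64K sequential writes from 8 concurrent workers. It is not the tool behind the screenshots, the function names and the small default size per worker are my own assumptions, and because it writes through the operating system's file cache the numbers are not comparable with a dedicated benchmark tool.

```python
import os
import tempfile
import time
from concurrent.futures import ThreadPoolExecutor

BLOCK_SIZE = 64 * 1024  # 64K blocks, matching the test above


def sequential_write(path: str, total_bytes: int) -> int:
    """Write total_bytes to path in 64K blocks, front to back; return bytes written."""
    block = os.urandom(BLOCK_SIZE)
    written = 0
    with open(path, "wb", buffering=0) as f:
        while written < total_bytes:
            written += f.write(block)
        os.fsync(f.fileno())  # flush to the device before the worker reports done
    return written


def run_test(target_dir: str, workers: int = 8, bytes_per_worker: int = 8 * 1024 * 1024):
    """Run the workers concurrently, one file each; return (total bytes, seconds)."""
    paths = [os.path.join(target_dir, f"worker{i}.bin") for i in range(workers)]
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        totals = list(pool.map(lambda p: sequential_write(p, bytes_per_worker), paths))
    elapsed = time.perf_counter() - start
    for p in paths:
        os.remove(p)
    return sum(totals), elapsed


if __name__ == "__main__":
    # Point the directory at the disk you want to test; a temp directory is used here.
    with tempfile.TemporaryDirectory() as d:
        total, secs = run_test(d)
        print(f"{total / 2**20:.0f} MiB in {secs:.2f} s -> {total / 2**20 / secs:.0f} MiB/s")
```

Keep `bytes_per_worker` a multiple of 64K, and raise it well beyond the default for anything resembling a meaningful run.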

NVMe disks have stellar IOPS and throughput at low latencies. If you ever wear them out they are cheap enough to swap out for a new one. They absolutely rock under concurrent use, with multiple sessions and heavy workloads. Their massive IO queues make them shine as server storage in many to one scenarios. So backing up many different Hyper-V nodes (clustered or not) concurrently and continuously throughout the day is a use case where they should rock. Just search for some of the reviews out there for details.

Do you need bigger sized NVMe disks and a bit more “enterprise grade” comfort? Look at the Intel 3700 series or equivalents. Simplistically these are the same family but the 750 series disk has been tuned to do better for workstation workloads. But even then most people won’t get to see their true capabilities. Anyway the 3700 are more expensive and the 2TB size mark might be what pushes you to buy them. Compared to some OEM enterprise grade SAS SSDs you’re still getting a pretty good deal. In any case many workstations cannot even make the Intel 750 series break out in a single drop of sweat. We can push them a bit more in server workloads.

If you need redundancy with local NVMe storage you have some options. You can make local NVMe disks redundant today via Storage Spaces if you want, or mitigate the risk by using 2 disks and having two backup jobs protect the same VMs to different targets.

The Intel 750 NVMe disk installed in a Dell R730 dual socket server

Booting the Dell R730, which provides sufficient resources to evaluate the capabilities of an NVMe disk.

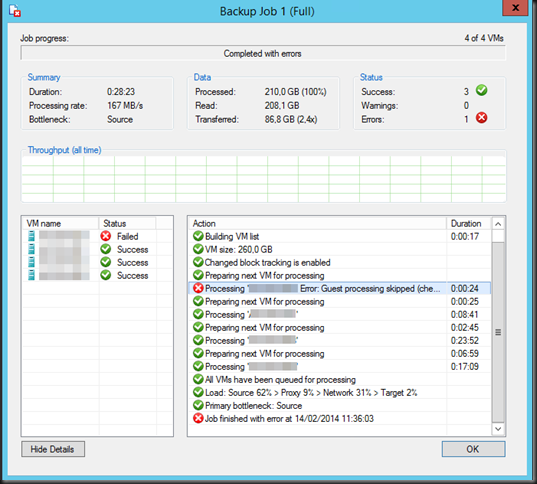

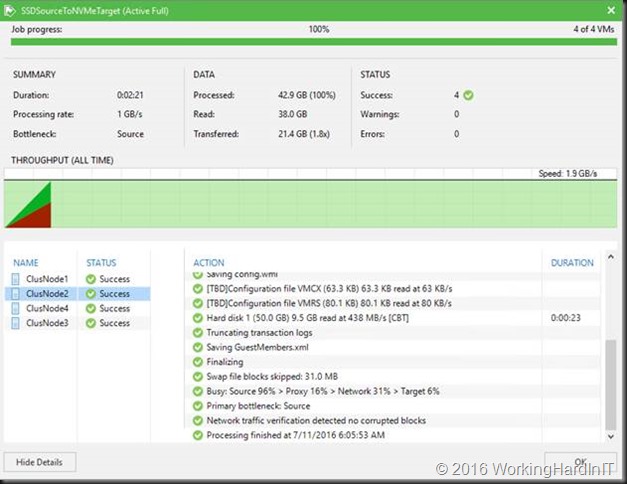

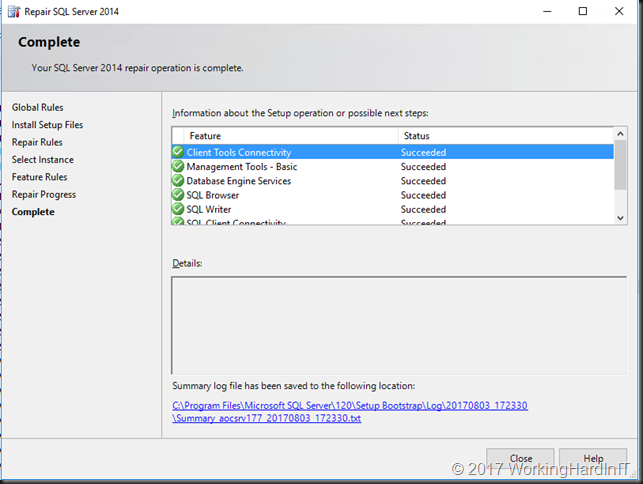

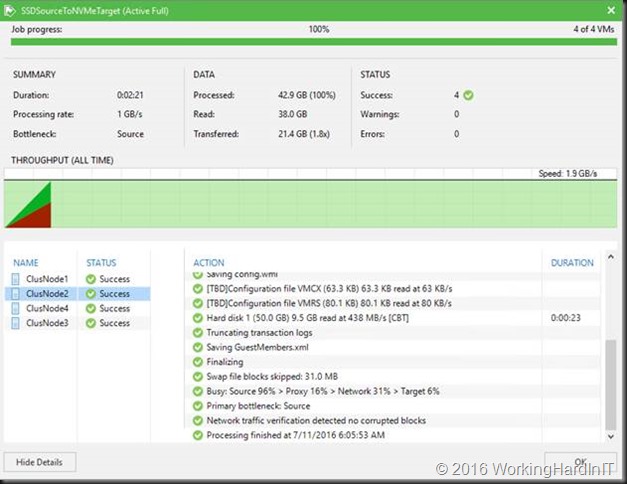

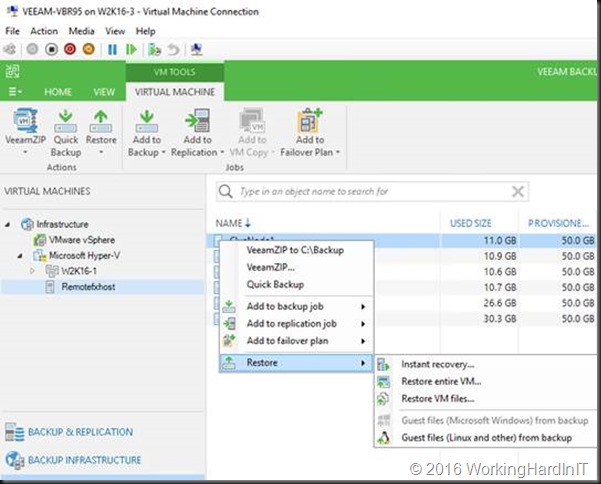

I cannot share too much info on this yet but look at the screenshot below. The VMs run on Storage Spaces (pure SSD) and the backup target is the Intel 750 1.2 TB NVMe disk.

When the delta in the VMs is low, the amount of data you’ll need to back up with Veeam and Windows Server 2016 CBT is minimal, so backup target performance is not that big a deal. But when you have bigger deltas and multiple backup jobs running simultaneously, it becomes a point that requires attention.

Look at the above screenshot of some tests backing up VMs on Storage Spaces (Windows Server 2016) ReFS v3 source storage to NVMe with ReFS v3 target storage. Continuously protecting a company’s gold doesn’t have to cost you a king’s ransom in diamonds. We’re running Windows Server 2016 TPv5 and Veeam Backup & Replication 9.5 Beta. I hope to discuss the capabilities of Windows Server 2016, ReFS and Veeam Backup and Replication 9.5 in later posts.
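To see why bigger deltas turn the target into the thing to watch, here is a small Python estimate of the sustained write rate a target needs to land all deltas before the next backup cycle starts. The delta sizes are made-up illustration values, the function name is mine, and the sketch ignores compression, deduplication and any source side limits.

```python
def required_throughput_mb_s(vm_count: int, delta_gb_per_vm: float, window_minutes: float) -> float:
    """Sustained write rate (MB/s) needed to land all deltas within the window."""
    total_mb = vm_count * delta_gb_per_vm * 1000  # decimal units: 1 GB = 1000 MB
    return total_mb / (window_minutes * 60)


# Hypothetical deltas per VM, in GB, for 20 VMs backed up every 10 minutes.
for delta_gb in (0.5, 2.0, 5.0):
    rate = required_throughput_mb_s(vm_count=20, delta_gb_per_vm=delta_gb, window_minutes=10)
    print(f"{delta_gb:>4} GB per VM -> {rate:,.0f} MB/s sustained")
```

The required rate grows linearly with the delta, and all of it arrives as concurrent streams from multiple jobs.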

What will that cost me?

So let’s say you need 2 TB of backup storage in your backup target for your “always on” mission critical, stateful virtual machines. For under 1600 € you can have that in Intel NVMe 750 Series. Today this really is not the technology to build a 300TB backup capacity solution with, but when used for the right reasons in the right place with the right use cases this is a good solution.
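As a quick sanity check on those numbers, a few lines of Python give the price per GB and the cost of equipping several backup targets. The 1600 € is the "under" figure from above, so the results are upper bounds, and decimal units (1 TB = 1000 GB) are assumed.

```python
def cost_per_gb(price_eur: float, capacity_tb: float) -> float:
    """Price per GB in euro, using decimal units (1 TB = 1000 GB)."""
    return price_eur / (capacity_tb * 1000)


price_eur, capacity_tb = 1600, 2  # upper bound on price for 2 TB of NVMe
print(f"at most {cost_per_gb(price_eur, capacity_tb):.2f} EUR per GB")
print(f"3 backup target servers: at most {3 * price_eur} EUR")
```

That matches the "under 4800 €" for three backup target servers mentioned in the footnote (*).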

Now, this isn’t the cheapest per GB, far from it, but it is the absolute best offering when it comes to fantastic throughput even, or better, especially when hitting that target storage with multiple concurrent backups from multiple sources. That’s where it shines beyond anything we have today. The real challenge there will be for the other resources to keep up as well as for the operating system and backup software to be capable of delivering what the NVMe disk(s) can handle. Compared to the OEM prices for their enterprise SAS SSDs this is still reasonable.

We’ll compare this to “standard” SSD with controllers and see where this gets us. You can learn whether this works for you at relatively low cost, gain experience (i.e. find the bottlenecks in the rest of your stack) and deliver a great result for the workloads you’re testing it with. Good backup software lets you fine tune the backups and even throttle backups based on latency of the source storage so you don’t have to worry about it killing the performance of your primary workloads.

Disclaimer: Don’t run off to your boss telling her or him I told you to implement NVMe backup storage targets. Only do so if you have a use case for this and are willing to try it out. Heck, I bought one on my own dime so I could try it out and see if we can leverage this. If not, I have a great use case for the disk in my workstation for all those Hyper-V virtual machines.
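The idea behind latency based throttling can be sketched in a few lines of Python. To be clear, this is a conceptual illustration and not how any particular backup product implements it; the function name, the returned labels and the two thresholds are hypothetical.

```python
def throttle_decision(latency_ms: float, hold_at_ms: float = 20.0, throttle_at_ms: float = 30.0) -> str:
    """Decide how hard backups may push, based on measured source storage latency."""
    if latency_ms >= throttle_at_ms:
        return "throttle running tasks"  # source storage is suffering, back off
    if latency_ms >= hold_at_ms:
        return "hold new tasks"  # keep going, but do not add more load
    return "full speed"


# Hypothetical latency samples from the source storage, in milliseconds.
for sample in (5.0, 22.0, 41.0):
    print(f"{sample:5.1f} ms -> {throttle_decision(sample)}")
```

The point is that the backup load reacts to what the primary workload experiences, so a very fast target cannot drag the source storage down unnoticed.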

For those 20 ultra-special stateful virtual machines in an “Always-On” environment … this might be the current solution. And please think beyond backups, think recovery of those virtual machines!

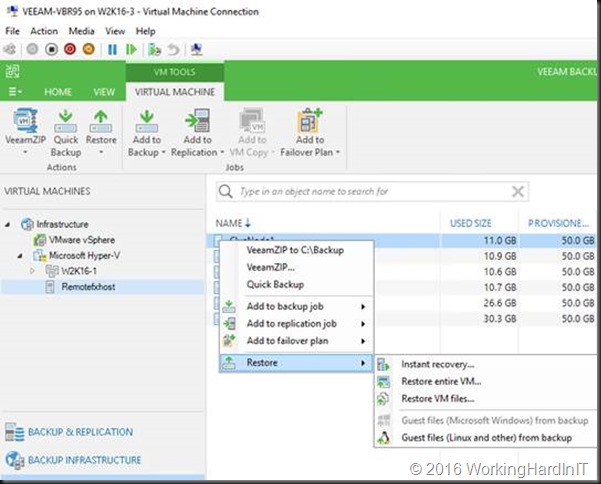

It’s kind of cool to use Veeam’s Instant VM recovery when the backup resides on an NVMe.

The future

Today, even with the NVMe over Fabrics 1.0 specification published recently, we don’t yet have “NVMe JBODs” or fabrics we can buy as commodity components, but I’m rather sure those will come soon. These are interesting times and I’ll keep a keen eye on the evolutions around NVMe.

Until then I’ll leverage commodity SSDs for landing the short term backups of VMs. When speed & frequency of those backups become crucial I’ll add one or more NVMe disks to the mix.

I can send long term backups to other backup targets, either via different jobs that run at night and/or via copies.

On top of all this the availability of 7.5 and 15 TB 3D NAND disks is about to change the way we look at high capacity disk based storage solutions. Those capacities in small form factors provide tremendous opportunities to deliver high capacity and performance in small building blocks, making the power & cooling economics significantly better. Needing half a rack or a full rack of 3 or 6TB HDDs to get both capacity & IOPS doesn’t seem that attractive anymore when you compare the TCO over 5 years with 2 disk bays full of 7.5 or 15TB SSDs. In the future, with the rise of high capacity SSDs and dropping prices, we might soon find that ever bigger SSDs deliver the bulk of our storage & NVMe is reserved for the truly demanding workloads.

Slowly but surely we can put most businesses in my country in one or half a rack without compromising on anything or needing to buy vendor lock-in converged solutions to make it happen. The scenario where we deliver on premises where it makes the most sense and move to the public cloud where it matters the most is more and more cost effective for those that can’t make data center zero happen yet. Combine that with a software defined approach and you’re looking good.

(*) I had a discussion about using NVMe for certain backup loads with some data center architects recently and they were convinced it was too expensive, too early and needed a consulting engagement leading to a POC to determine if this was a good idea. That would involve project & administrative costs, time and materials etc. Well, we just bought a couple of NVMe disks on our own budget to test out the idea and concept. It works and is affordable for the right use cases. Just make sure you don’t put an NVMe disk in an anemic budget server where all other resources will be the bottlenecks. Also make sure you have the intra host bandwidth to deliver the throughput. Last but not least, it’s pretty silly to have super performant backup targets when your backup source storage can’t deliver the data fast enough. Use common sense and you’ll be alright. It doesn’t need to cost you 10K to find out if buying 800 or 1600 € of NVMe storage will work for you. If it seems to work, we can drop 2TB worth of NVMe storage in 3 backup target servers for under 4800 €. Using that in production for 6 months will teach us more than an expensive POC anyway.

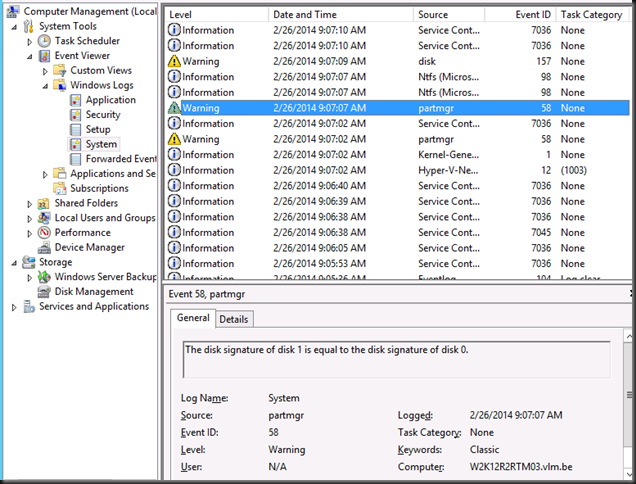
