The PowerEdge VRTX

I went to have a chat with Dell at TechEd 2013 Europe in Madrid. The VRTX was launched during the Dell Enterprise Forum in early June 2013. This concept packs a punch and I encourage you to go look at the VRTX (pronounced “Vertex”) in more detail here. It’s a very quiet setup that can be hooked up to standard power, and it’s pretty energy efficient when you consider the power of the VRTX. The entire setup delivers a lot at an attractive price point.


It can serve perfectly for Remote Office / Branch Office (ROBO) deployments, but it has many more use cases as it’s very versatile. In my humble opinion Dell’s latest form factor could be used for some very nice scale out scenarios. It’s near perfect as a Windows Server 2012 Scale Out File Server (SOFS) building block. While smaller ones can be built using 1Gbps, the future just needs 10Gbps networking.

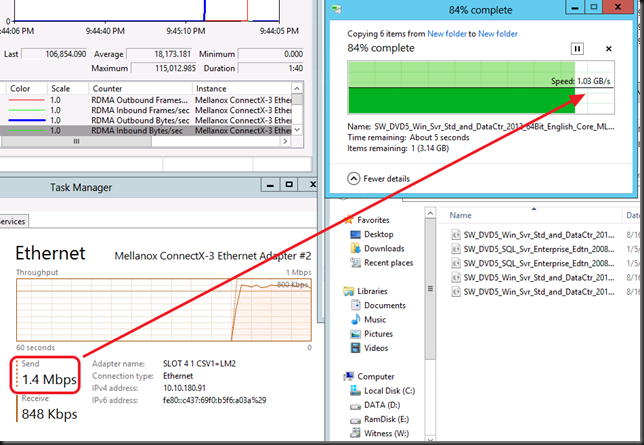

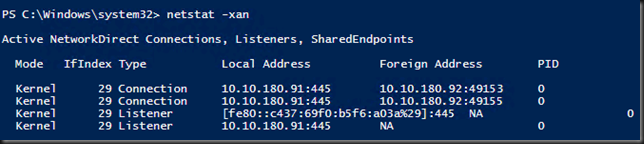

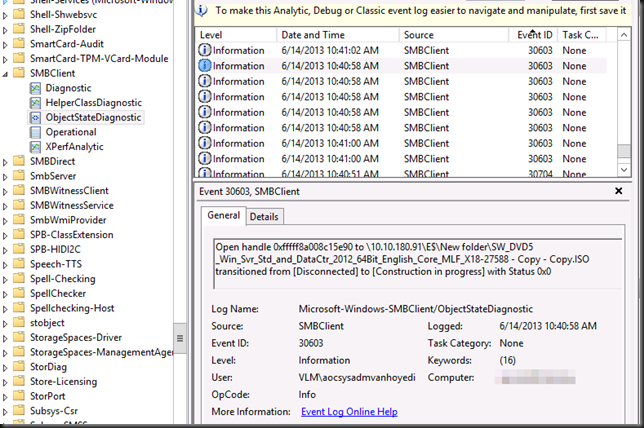

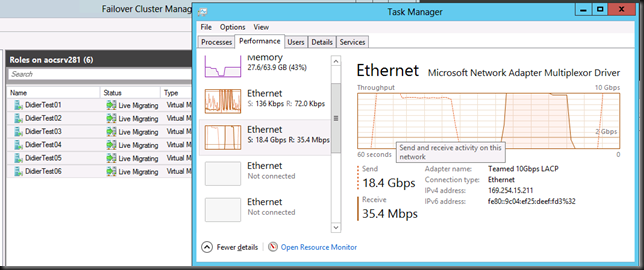

10Gbps, RDMA (iWARP, RoCE)

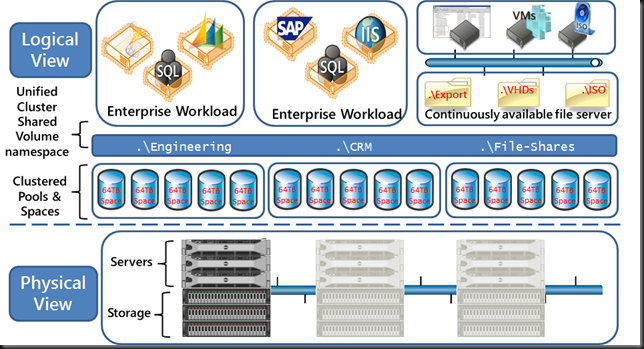

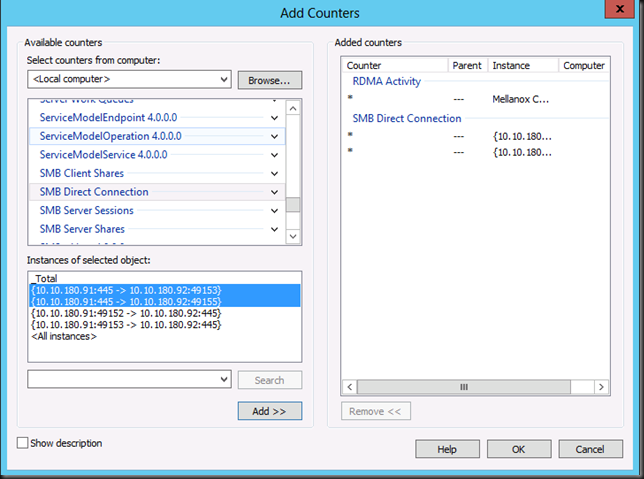

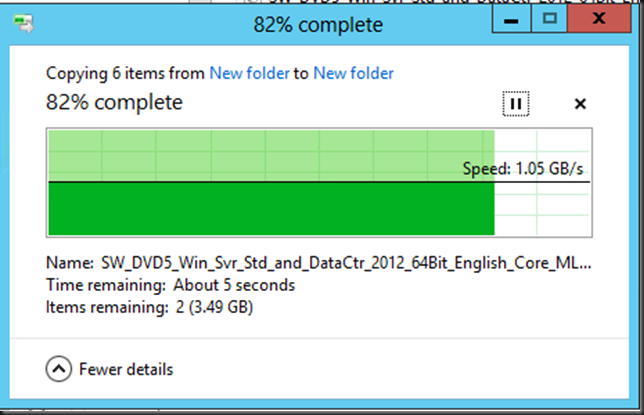

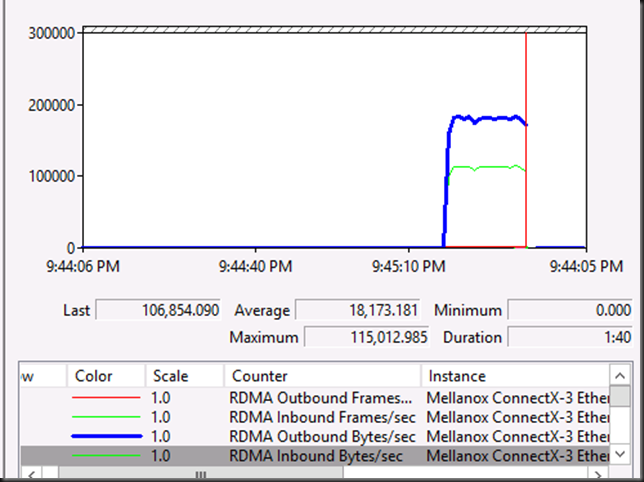

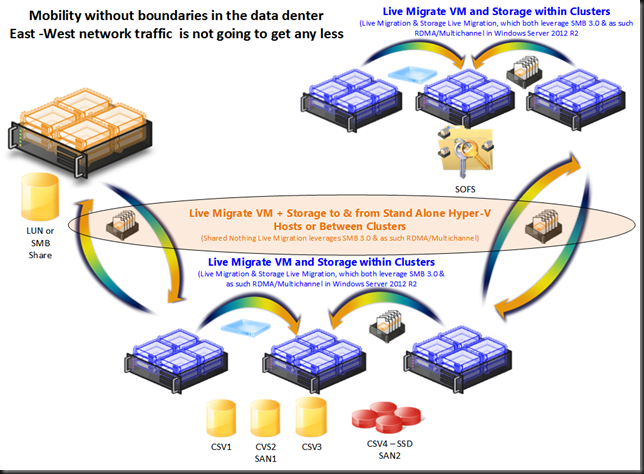

That’s the first thing I missed, and the first thing I was told would arrive very soon. So I’m very happy with that. With sufficient 10Gbps ports to the servers and iWARP or RoCE RDMA capable NICs (they’re cheap enough compared to ordinary 10Gbps cards not to have to leave that capability out) we have all we need for this to function as a powerful building block for the Scale Out File Server model with Windows Server 2012 (R2), where the CSV network becomes the storage network leveraging redirected IO. For this concept look at this picture from a presentation a year ago. SMB 3.0 Multichannel and RDMA make this possible.

While back then I drew the SOFS building blocks out of R720 & MD1200 hardware, the VRTX could fit in there perfectly!

Storage Options

Today Dell uses their implementation of Clustered PCI RAID for shared storage, which has been supported since Windows Server 2012. This is great. For the moment it’s a non-redundant setup, but a redundant one is in the works I’m told. Nice, but think positive: redirected IO (block level) over SMB 3.0 would save our storage IO even today. It would be a very wise and great addition to the capabilities of this building block to add the option of, and support for, Storage Spaces. This would make the Scale Out File Server concept shine with the VRTX.

Why? Well, it would give us the following benefits in the storage layer, and the VHDX format in Hyper-V can take advantage of them:

- Deduplication

- Thin Provisioning

- Management Delegation

- UNMAP

- Write Cache

- Full benefits of ReFS on Storage Spaces for data protection

- Automatic Data Tiering with commodity SSDs (ever cheaper & bigger) and SAS disks, perhaps even the Near Line ones (less power & cooling, great capacity)

- Potential for JBOD redundancy

Look at that feature set, people: in box, delivered by Windows. Sweet! Combine this with 10Gbps networking and Dell not only has a SOFS building block in their portfolio, it also offers significant storage features in this package. I for one would like them to do so and not miss out on this opportunity to offer even more capabilities in an attractively priced package. Dell could be the very first OEM to grab this new market opportunity by supporting the scale out approach and outmaneuver their competitors.

Anything Else?

Combine such a building block as described above with their unmatched logistical force for distribution and support, and this will be a hit and a prime choice for Windows shops. They already have the 10Gbps networking gear & features (DCB) in the PowerConnect 81XX & Force10 S4810 switches. It could be an unbeatable price / capabilities / feature combo that would sell very well.

If we go for SOFS we might need more storage in a single building block with a 4 node cluster. Extensibility might be nice for this. And "more" is not just about capacity: I still need to work out the IOPS the available configurations can give us.
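
As a starting point for that IOPS homework, here is a minimal back-of-envelope sketch. It uses the common rule of thumb that every front-end write costs several back-end I/Os (the "write penalty", often quoted as 2 for mirroring and 4 for single parity). The function name, the disk count and the per-disk IOPS figure are all illustrative assumptions of mine, not Dell specifications or measured VRTX numbers.

```python
# Back-of-envelope IOPS estimate for one storage building block.
# Every number used here is an illustrative assumption, not a vendor spec.

def effective_iops(disks, iops_per_disk, read_fraction, write_penalty):
    """Front-end IOPS a disk group can sustain for a given read/write mix.

    Each front-end write is assumed to cost `write_penalty` back-end I/Os
    (commonly quoted values: 2 for mirroring, 4 for single parity).
    """
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    raw = disks * iops_per_disk              # total back-end IOPS available
    write_fraction = 1.0 - read_fraction
    return raw / (read_fraction + write_fraction * write_penalty)


if __name__ == "__main__":
    # Hypothetical configuration: 24 disks at 150 IOPS each, 70% reads, mirrored.
    print(round(effective_iops(24, 150, 0.7, 2)))
```

This ignores caches, tiering and controller limits, so treat the result as a rough lower-effort sanity check rather than a sizing tool; real numbers have to come from testing the actual configurations.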