If you’re running a Dell Compellent SAN you’re probably familiar with Replay Manager. It’s Compellent’s solution for taking VSS-based (and as such application-consistent) snapshots.

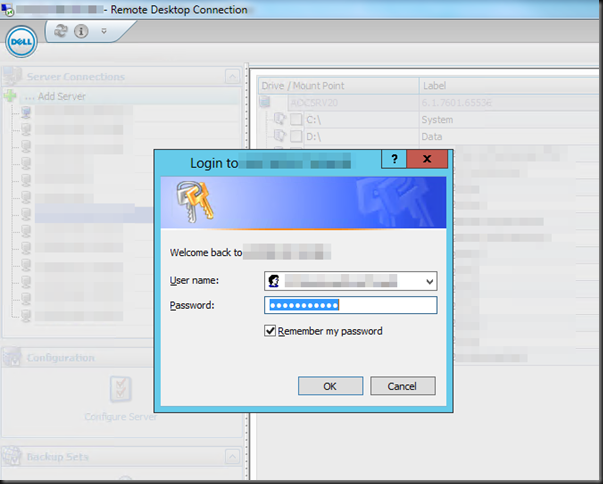

When you’re running Replay Manager you might run into the following issue when trying to access a host.

Every time you access a host for the first time after opening Replay Manager, you’ll be prompted for your password, even if you selected “Remember my password”. You don’t need to retype it, so that’s fine, but you do need to click through the prompt.

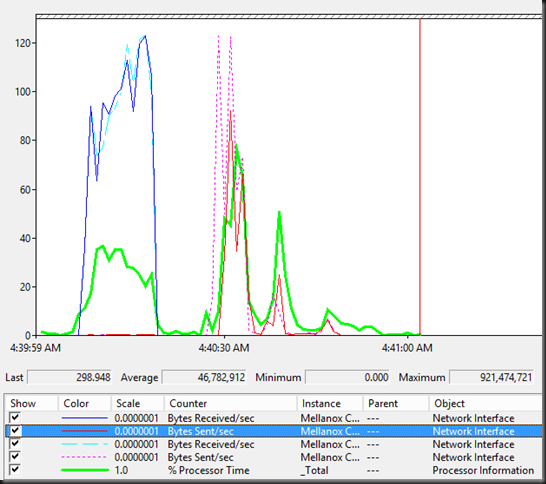

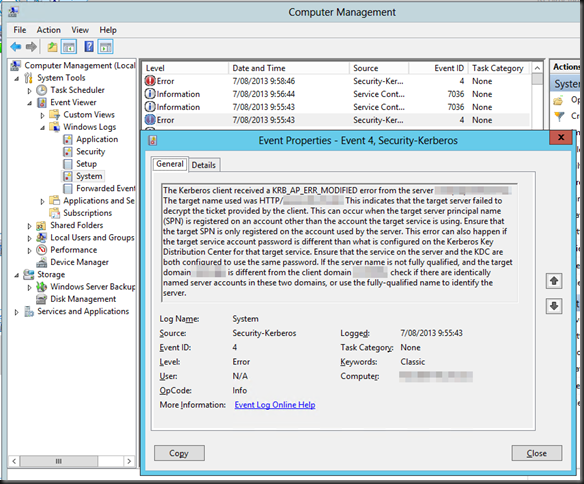

In the System log you’ll see the error below.

Log Name: System

Source: Microsoft-Windows-Security-Kerberos

Date: 7/08/2013 9:55:43

Event ID: 4

Task Category: None

Level: Error

Keywords: Classic

User: N/A

Computer: replayserver.test.lab

Description:

The Kerberos client received a KRB_AP_ERR_MODIFIED error from the server replaymanagerservice. The target name used was HTTP/myhost.test.lab. This indicates that the target server failed to decrypt the ticket provided by the client. This can occur when the target server principal name (SPN) is registered on an account other than the account the target service is using. Ensure that the target SPN is only registered on the account used by the server. This error can also happen if the target service account password is different than what is configured on the Kerberos Key Distribution Center for that target service. Ensure that the service on the server and the KDC are both configured to use the same password. If the server name is not fully qualified, and the target domain (TEST.LAB) is different from the client domain (TEST.LAB), check if there are identically named server accounts in these two domains, or use the fully-qualified name to identify the server.

Well, this is a rather well-known issue in the Microsoft world. Take a look at “IIS 7+ Kerberos authentication failure: KRB_AP_ERR_MODIFIED” and browse to the possible causes & solutions. You’ll find this situation right in there. So what we do is execute the following command to register the correct SPN for the host or hosts on the Replay Manager service account:

SetSPN -a HTTP/myhost.test.lab TEST\replaymanagerservice

Note that you need to run this from an elevated command prompt, using an account with sufficient permissions in AD. You’ll no longer have to click through the username/password prompt, and you’ll get rid of that error.
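If you have more than a handful of hosts, you might not want to type that command over and over. Here’s a minimal sketch in Python that builds one SetSPN line per host. The function name `build_setspn_commands` and the second host name are made up for illustration, and the script only prints the command lines. It doesn’t run them or touch AD, so you can review the output before pasting it into your elevated prompt.

```python
def build_setspn_commands(hosts, account, service="HTTP"):
    """Return one 'SetSPN -a' command line per host, without executing anything."""
    commands = []
    for host in hosts:
        host = host.strip()
        if not host:
            continue  # skip blank entries, e.g. empty lines in a host list
        commands.append(f"SetSPN -a {service}/{host} {account}")
    return commands


if __name__ == "__main__":
    # Hypothetical host list; replace with your own Replay Manager hosts.
    hosts = ["myhost.test.lab", "myhost2.test.lab"]
    # Assumed service account in DOMAIN\user form, as in the example in this post.
    for line in build_setspn_commands(hosts, r"TEST\replaymanagerservice"):
        print(line)
```

Printing instead of executing is deliberate here: registering an SPN on the wrong account is exactly what causes KRB_AP_ERR_MODIFIED in the first place, so it’s worth a look before you run anything.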

You can verify that the SPN for your hosts exists on your Replay Manager service account by running:

SetSPN -l TEST\replaymanagerservice
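With many hosts, checking that list by eye gets tedious. As a rough sketch, you could save the listing to a text file and compare it against the SPNs you expect. The function name `missing_spns` is hypothetical, and the sample text below is an illustrative stand-in typed by hand, not captured output, so the exact header line on your system may differ. The check only assumes that each registered SPN sits on its own line.

```python
def missing_spns(listing_text, expected_spns):
    """Return the expected SPNs that do not appear as a line in the listing text."""
    # Compare case-insensitively and ignore surrounding whitespace on each line.
    registered = {
        line.strip().lower()
        for line in listing_text.splitlines()
        if line.strip()
    }
    return [spn for spn in expected_spns if spn.lower() not in registered]


if __name__ == "__main__":
    # Illustrative stand-in for a saved listing (assumption, not real output).
    sample = (
        "Registered ServicePrincipalNames for the service account:\n"
        "\tHTTP/myhost.test.lab\n"
    )
    expected = ["HTTP/myhost.test.lab", "HTTP/myhost2.test.lab"]
    for spn in missing_spns(sample, expected):
        print("Missing:", spn)
```

Anything reported as missing is a host that will most likely keep prompting you until its SPN is registered.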

If this is the biggest issue you’ll ever have with a hardware snapshot service & hardware provider, you know you’ve got a good solution.