What is it?

One of the cool new features that takes scalability in Windows Server 2012 R2 Hyper-V to a new level is virtual Receive Side Scaling (vRSS). Receive Side Scaling (RSS) over SR-IOV has been supported since Windows Server 2012, but it’s best suited for specialized environments that require the best possible speeds at the lowest possible latencies. While SR-IOV is great for performance, it’s not as flexible. For example, you can’t team SR-IOV NICs on the host, so if you need redundancy you’ll need to do guest NIC Teaming.

vRSS is supported on the VM network path (vNIC, vSwitch, pNIC) and allows VMs to scale better under heavier network loads. The lack of RSS support in the guest means that there is only one logical CPU (core 0) that has to deal with all the network interrupts. vRSS avoids this bottleneck by spreading network traffic among multiple VM processors, which is great news for data-copy-heavy environments.
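To make the idea of spreading traffic over multiple processors a bit more concrete, here is a minimal Python sketch of how RSS-style distribution works in principle: each flow is hashed, and the hash selects a CPU through an indirection table. This is an illustration only, not how Hyper-V implements it. Real RSS uses a Toeplitz hash; `zlib.crc32` is just a stand-in here, and the function names, table size, addresses and ports are all made up for the example.

```python
import zlib
from collections import Counter

def flow_hash(src_ip, src_port, dst_ip, dst_port):
    """Hash a flow's 4-tuple. CRC32 is a stand-in for the real Toeplitz hash."""
    key = f"{src_ip}:{src_port}->{dst_ip}:{dst_port}".encode()
    return zlib.crc32(key)

def build_indirection_table(cpus, size=128):
    """Fill a fixed-size table by cycling through the available CPUs."""
    return [cpus[i % len(cpus)] for i in range(size)]

def pick_cpu(table, h):
    """The low-order part of the hash indexes the indirection table."""
    return table[h % len(table)]

def distribute(flows, cpus):
    """Count how many flows land on each CPU."""
    table = build_indirection_table(cpus)
    return Counter(pick_cpu(table, flow_hash(*f)) for f in flows)

# Hypothetical workload: 64 TCP flows from one client to one server port.
flows = [("10.0.0.1", 50000 + i, "10.0.0.2", 445) for i in range(64)]

without_rss = distribute(flows, cpus=[0])          # everything lands on core 0
with_vrss = distribute(flows, cpus=[0, 1, 2, 3])   # flows spread over 4 vCPUs

print("without RSS:", dict(without_rss))
print("with vRSS:  ", dict(sorted(with_vrss.items())))
```

Note that a given flow always hashes to the same CPU, so packets within one connection stay in order. That is also why a single connection can't be spread across cores this way.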

What do you need?

Nothing special. It works with any NIC that supports VMQ, and that’s about all 10Gbps NICs you can buy or possess, so no investment is needed. It’s basically the DVMQ capability of a VMQ-capable host NIC that allows vRSS to be exposed inside the VM over the vSwitch. To take advantage of vRSS, VMs must be configured to use multiple cores and they must support RSS, so turn it on in the vNIC configuration in the guest OS. And don’t try to use a home PC 1Gbps card.

vRSS is enabled automatically when the VM uses RSS on the VM network path. The other good news is that this works over NIC Teaming, so you don’t have to do in-guest NIC Teaming.

What does it look like?

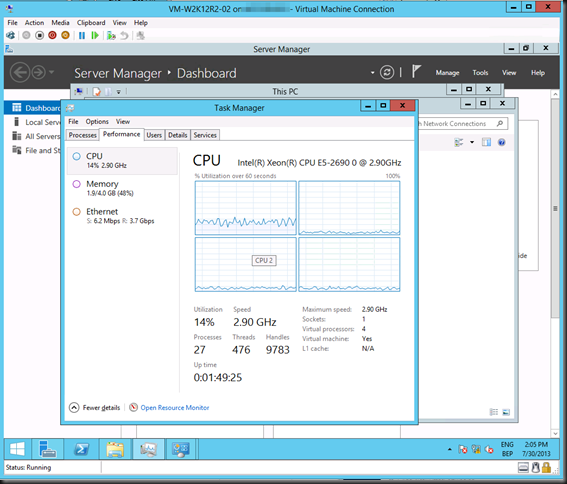

Now, without SR-IOV it was a serious challenge to push that 10Gbps vNIC to its limit, because all the interrupt handling was dealt with by a single CPU core. Here’s what a VM’s processor looks like under a sustained network load without vRSS. Not too shabby, but we want more.

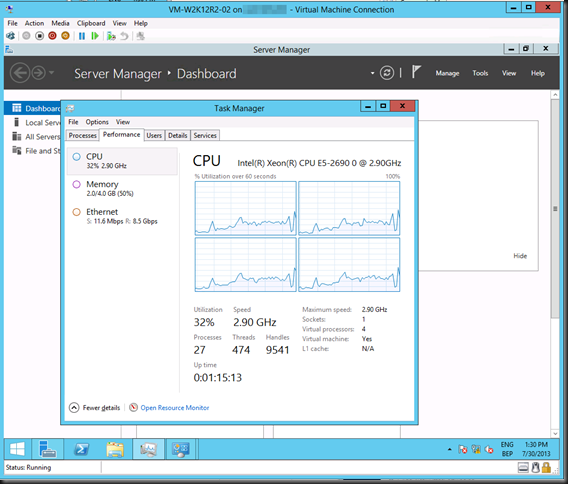

As you can see, the incoming network traffic has to be dealt with by good old vCore 0. While DVMQ allows multiple processors on the host to deal with the interrupts for the VMs, it still means that you have a single core per VM. That one core is possibly a limiting factor (if you can get the network throughput and storage IO, that is). vRSS deals with this limitation. Look at the throughput we got copying lots of data to the VM below, leveraging vRSS. Yeah, that’s 8.5Gbps inside of a VM. Sweet. I’m sure I can get to 10Gbps …


Awesome article, it really helps someone like me, a Hyper-V newbie, understand the networking side of it. But I do have one question. What were you using to stress the network to test? I have tried following your steps and I have been copying an ISO around to test, and I’m not seeing anything like you have. I am seeing roughly 1Gb, and even that speed varies quite a bit. I do have a 10Gb network card in a ProLiant server. I have RSS enabled, SR-IOV, jumbo frames, etc. Any suggestions to maybe point me in the right direction? Sorry if I didn’t provide enough details.

Hi there, thanks for your kind words. Take a look here: http://gallery.technet.microsoft.com/NTttcp-Version-528-Now-f8b12769. It’s a tool that has been around a while but got updated to handle 10Gbps as well. Read up on the parameters and experiment a bit. If you do file copies you’ll need fast storage to get anywhere, or you can try to play with a RAM disk to get decent speed in the absence of a flash-only SAN 🙂

Thanks for reading

Hi Didier,

Thanks for the article.

I was commenting on Hans Vredevoort’s blog (http://www.hyper-v.nu/archives/hvredevoort/2012/06/building-a-converged-fabric-with-windows-server-2012-powershell/#comment-31361) about the Converged Fabric implementation, and specifically about the 1 LP / ~5 Gbit/s limit when dealing with virtual switches. In fact, without RSS, 1 LP is engaged when processing data. With RSS, many LPs can be involved and we can reach the desired throughput (10 Gbit/s or more). With the Converged Fabric architecture introduced with Windows Server 2012, we can create many virtual NICs on the management host and have a role for each virtual NIC. The catch is that the maximum throughput is limited (~5 Gbit/s) because RSS is not supported on these virtual NICs or on the virtual switch on top (this limits Live Migration to 5 Gbit/s if we dedicate a virtual NIC to LM). My question was: is this still the case on Windows Server 2012 R2? (I didn’t have the chance to test that and report the answer.)

As it says in the title, “Virtual Receive Side Scaling (vRSS) In Windows Server 2012 R2 Hyper-V”: it’s R2, and vRSS is for VMs only, not for the vNICs leveraged on the management partition.

Thanks. Not very smart of Microsoft, since they are both virtual NICs and this would make the Converged Fabric implementation very attractive.

Thanks Didier !

They’ve made great progress and I’m convinced they are not finished yet.

I agree, the progress alone is worth the patience !!