Move from RAID to AHCI

The good news is that you do not need to reinstall Windows to move from RAID to AHCI. You just need to perform the steps in the correct order, and then it is pretty straightforward.

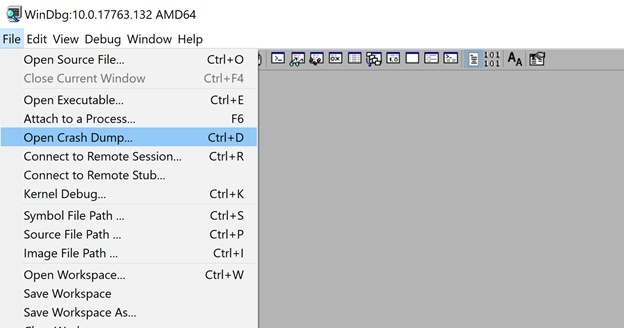

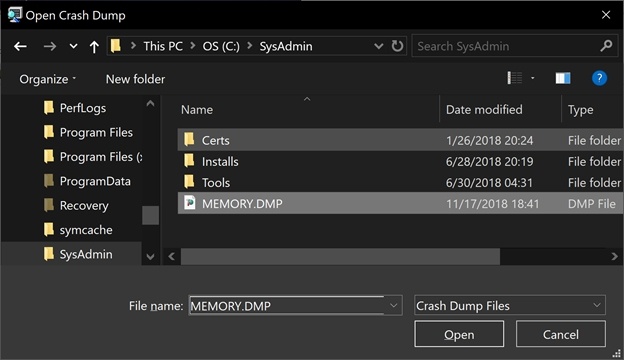

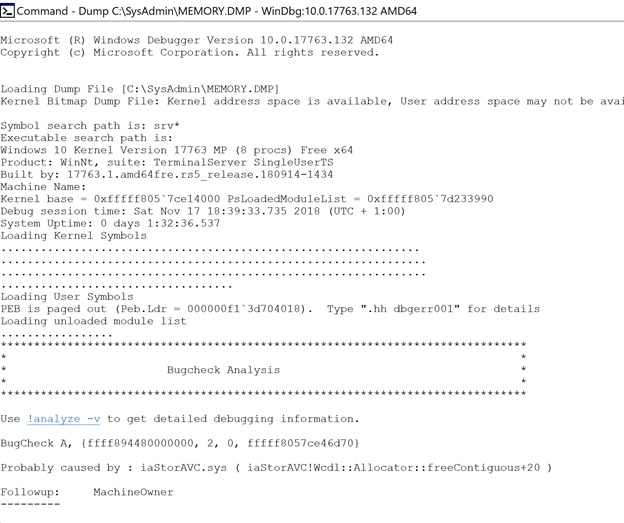

As you might recall from my previous blog, DELL XPS Laptops and seemingly random Blue Screens of Death, the reason for moving to AHCI is that our laptop blue screens on the iaStorAVC.sys driver of Intel Rapid Storage Technology (RST) when in SATA RAID mode. If an update is not available or doesn’t help, we can move to SATA AHCI mode and avoid further BSODs caused by the Intel RST driver.

The process for moving from RAID to AHCI (and back again if you so desire) is described very well here: https://gist.github.com/chenxiaolong/4beec93c464639a19ad82eeccc828c63. Note that this can also be done via

Step by step RAID to AHCI

Moving from RAID to AHCI is surprisingly easy. All that’s needed is a successful boot into Safe Mode after switching from RAID to AHCI in the BIOS settings. It is important that the first boot after making the change in the BIOS is into Safe Mode. This process was tested with an XPS 13 9360 and a lab Latitude E6220. The process, step by step, is as follows.

- To set the default boot mode to Safe Mode, use msconfig.exe or open an admin cmd/PowerShell window and run:

bcdedit /set "{current}" safeboot minimal

- Reboot and hit F2 to enter the BIOS.

- Change the SATA mode to AHCI.

- Save and reboot.

- After Windows successfully boots into Safe Mode, disable Safe Mode with msconfig.exe or open an admin cmd/PowerShell window and run:

bcdedit /deletevalue "{current}" safeboot

- Reboot again.

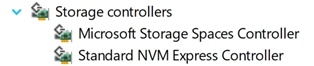

- Log in and verify in Device Manager that you now have the Standard NVM Express Controller device under Storage Controllers.
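If you would rather script the two bcdedit steps than type them, the following is a minimal sketch, assuming Python is installed on the laptop. The helper names (`enable_safe_boot_cmd`, `disable_safe_boot_cmd`, `run`) are made up for this illustration and are not part of any tool. It defaults to a dry run that only prints the commands, and it cannot do the BIOS change for you: that part stays manual.

```python
import subprocess

# "{current}" is the bcdedit identifier for the boot entry of the running Windows install.
BOOT_ENTRY = "{current}"


def enable_safe_boot_cmd():
    """Command for the first step: make Safe Mode (minimal) the default boot mode."""
    return ["bcdedit", "/set", BOOT_ENTRY, "safeboot", "minimal"]


def disable_safe_boot_cmd():
    """Command for the later step: remove the safeboot value so Windows boots normally."""
    return ["bcdedit", "/deletevalue", BOOT_ENTRY, "safeboot"]


def run(cmd, dry_run=True):
    """Print the command and, unless dry_run is set, execute it.

    Executing requires Windows and an elevated (admin) prompt.
    Returns the process exit code, or 0 for a dry run.
    """
    print(" ".join(cmd))
    if dry_run:
        return 0
    return subprocess.run(cmd, check=True).returncode


if __name__ == "__main__":
    # Dry run only: show both commands without changing the boot configuration.
    run(enable_safe_boot_cmd())
    run(disable_safe_boot_cmd())
```

To actually apply a step, call `run(enable_safe_boot_cmd(), dry_run=False)` before the BIOS change and `run(disable_safe_boot_cmd(), dry_run=False)` once Windows has booted into Safe Mode, both from an elevated prompt. Because the arguments are passed as a list without a shell, no quoting around `{current}` is needed here.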

The performance with my Toshiba KXG50ZNV512G NVMe disk is still OK. Not as good as it could be, but that’s reality for you. It’s worth noting that DELL does not officially support NVMe drivers other than the inbox Windows one for AHCI mode. I’m not saying you cannot try to install them, but then you would be out of support. As noted above, these laptops come with RAID mode enabled by default. AHCI is supported and sometimes even needed (dual boot with Linux, for example).

Anyway, things work and perform well. They are also stable, and that is of utmost importance to me. If at a later date you want to move to RAID again, read the next blog: Moving from AHCI to RAID