Introduction

Yes, Windows Server 2012 R2. Me, the most vocal proponent of keeping your environments up to date, to the point that I barely have a Windows Server 2022 under my care anymore.

So what gives? Some people spun up a brand-new Windows Server 2025 Hyper-V cluster and migrated a truckload of their virtual machines over. I love modern infrastructure, so this all sounded very good until they reached out with a little issue. About a dozen of their virtual machines did not boot properly and landed in the recovery console instead. My first question was: what is the OS on those virtual machines? When the answer was Windows Server 2012 R2, and maybe some Windows Server 2016, I had heard all I needed to know to help “fix” this. The real solution is not running those old out-of-support OS versions anymore, but we can “fix” it so your apps keep running while you upgrade or migrate.

Symptoms

Their older but business-critical Windows Server 2012 R2 VMs (Generation 2, UEFI VMs, no less) did not boot on their shiny new Hyper-V cluster. The migration itself went smoothly, they said, but when they started the virtual machines, the apps did not come up. So they checked the consoles of those virtual machines and saw STOP 0x0000007B: INACCESSIBLE_BOOT_DEVICE errors and recovery consoles. Rebooting did not help at all. This was a solid, reproducible crash loop, exactly at the point where the bootloader hands off to the kernel and the OS should find its disk. If you’ve been in the game for a while, you know that this usually spells one thing: a fundamental storage or bus driver issue. But why now?

The ACPI Identity Crisis

Windows Server 2012 (R2) and Windows Server 2016 are not supported on Windows Server 2025 Hyper-V. Upgrade or migrate before you move them.

This wasn’t just some random corruption. We were looking at a fundamental compatibility issue. To understand why, you need to understand how Hyper-V and the Guest OS communicate during the boot process.

Server 2012 R2 came out in 2013. Hyper-V 2025 is the latest and greatest at the time of writing. In the decade between those releases, the “hardware signatures” (Hardware IDs, or HWIDs) that Hyper-V presents to a virtual machine have evolved.

In Gen 2 VMs, Windows relies heavily on the ACPI (Advanced Configuration and Power Interface) tables to find its critical components, especially the virtual machine bus (VMBus) and the storage controllers that attach to it.

When 2012 R2 boots, the kernel says, “Okay, ACPI, show me my storage bus.”

The Hyper-V 2025 host says, “Here is your storage bus, its ID is MSFT1000.”

The 2012 R2 kernel looks in its driver database and goes, “MSFT1000? I have no idea who that is. I’m looking for VMBus or nothing.”

Boom. It can’t see the bus, it can’t load the disk driver, and it can’t find its own boot disk, so it suffers an Inaccessible Boot Device crash. The guest has no clue what to do.

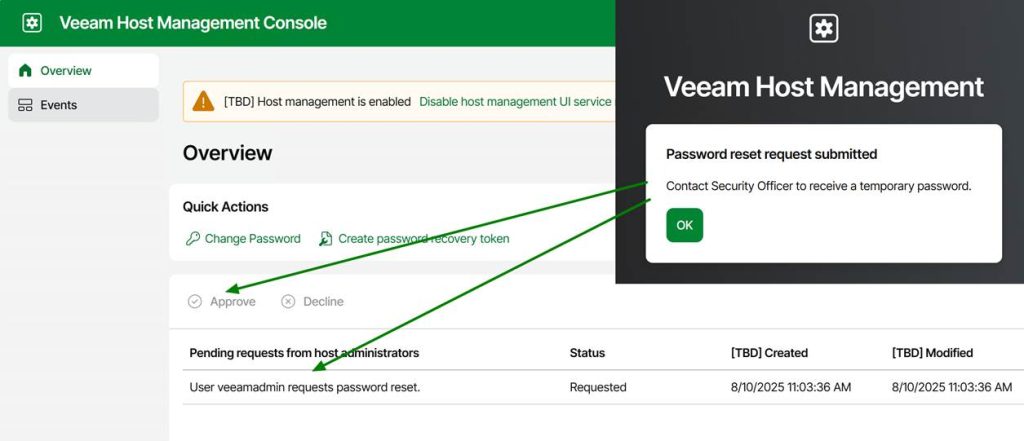
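To see which side of this mismatch a guest is on before you migrate it, one option is to ask the running VM which ACPI device keys it already knows about. This is a minimal sketch, not a definitive test: it assumes an elevated command prompt inside the guest while it still runs on the old host, it assumes the key names used in this article, and it needs to be saved as a .cmd file because of the `%%k` loop variable.

```batch
@echo off
:: Sketch: report which ACPI device keys this guest already has.
:: Assumption: key names are the ones discussed in this article.
set ACPIKEY=HKLM\SYSTEM\CurrentControlSet\Enum\ACPI
for %%k in (VMBus MSFT1000 Hyper_V_Gen_Counter_V1 MSFT1002) do (
    reg query "%ACPIKEY%\%%k" >nul 2>&1 && (echo %%k: present) || (echo %%k: missing)
)
```

If VMBus shows as present and MSFT1000 as missing, the guest is a likely candidate for the crash described here once it lands on a Hyper-V 2025 host.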

The “fix” is in some offline registry editing

Since the VM was in a crash loop and couldn’t boot to Windows, we had to perform some offline registry surgery. Luckily for them, the virtual machines could boot into their recovery environments, so they did not have to boot from an ISO to reach a command prompt and access the offline system hive.

We used a combination of reg load to “mount” the system registry from the VM’s disk onto our repair environment, and then some strategic reg copy commands to “spoof” the IDs.

Step by step

- Mount the hive

reg load HKLM\TempHive c:\Windows\system32\config\SYSTEM

(This assumes c: is where the VM’s Windows volume is mounted.)

- Map the VMBus to MSFT1000

reg copy HKLM\TempHive\ControlSet001\Enum\ACPI\VMBus HKLM\TempHive\ControlSet001\Enum\ACPI\MSFT1000 /s

This is the core fix. We are telling the 2012 R2 system: “Look, if you ever see a device calling itself MSFT1000, don’t ignore it. Duplicate every single setting, driver binding, and service permission you have for ‘VMBus’ and apply it to this new ‘MSFT1000’ identity.” This essentially links the modern host’s ID to the older OS’s native VMBus driver stack.

- Map the Generation Counter to MSFT1002

reg copy HKLM\TempHive\ControlSet001\Enum\ACPI\Hyper_V_Gen_Counter_V1 HKLM\TempHive\ControlSet001\Enum\ACPI\MSFT1002 /s

This maps the older Hyper_V_Gen_Counter_V1 identity (used for snapshots and consistency) to its modern equivalent on the 2025 host, MSFT1002. This is crucial for making sure integration services load properly.

After these commands, we had to run reg unload HKLM\TempHive to save our changes. We rebooted (remove the ISO first if you did boot from one), and… Bingo. The Server 2012 R2 boot screen appeared, and the login prompt followed shortly after.
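Before unloading the hive, it can be worth confirming that the commands touched the control set the guest actually boots from and that the new keys exist. A small sketch, assuming the hive is still loaded as HKLM\TempHive; ControlSet001 below is an assumption, so replace it with whatever Select\Current reports.

```batch
rem Which control set does the guest boot from?
rem Current is shown as a hex DWORD, for example 0x1 for ControlSet001.
reg query HKLM\TempHive\Select /v Current

rem Confirm that the cloned keys exist in that control set.
reg query HKLM\TempHive\ControlSet001\Enum\ACPI\MSFT1000
reg query HKLM\TempHive\ControlSet001\Enum\ACPI\MSFT1002
```

If either of the last two queries reports that the key cannot be found, the corresponding reg copy did not land where the guest will look for it.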

This works because Server 2012 R2 has the necessary VMBus and storage drivers; it just doesn’t know they are compatible with the hardware IDs reported by Hyper-V 2025. This registry trick just creates that necessary driver-to-hardware binding.

But remember that this is an “unsupported” hack! While this gets the VM booting, moving 2012 R2 to newer hosts often means features might be degraded. Microsoft deprecated official support for 2012 R2 guests on modern hosts a while ago. Windows Server 2016 RTM without modern patching will suffer from the same issue, by the way.

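Below is a complete script to fix a virtual machine with this issue. Save it as a .cmd file and run it from the command prompt in your recovery environment. Do not paste it line by line into CMD.exe: the %%d-style loop variables only work inside a batch file.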

@echo off
echo.
echo ============================================================
echo Hyper-V 2025 ACPI Fix for Windows Server 2012 R2 / 2016 RTM
echo - Adds MSFT1000 (VMBus) and MSFT1002 (GenCounter)
echo - Auto-detects ControlSet
echo - Creates SYSTEM hive backup
echo ============================================================
echo.

:: --- Step 1: Detect Windows drive ---
:: X: is skipped because it is normally the recovery environment's own
:: RAM disk, which also contains \Windows\System32\Config\SYSTEM.
:: The first matching drive wins.
echo Detecting Windows installation drive...
set WINDRV=
for %%d in (C D E F G H I J K L M N O P Q R S T U V W Y Z) do (
    if not defined WINDRV if exist "%%d:\Windows\System32\Config\SYSTEM" set WINDRV=%%d:
)
if "%WINDRV%"=="" (
    echo ERROR: Could not find Windows installation drive.
    echo Aborting.
    exit /b 1
)
echo Windows installation found on %WINDRV%
echo.

:: --- Step 2: Backup SYSTEM hive ---
echo Creating SYSTEM hive backup...
copy "%WINDRV%\Windows\System32\Config\SYSTEM" "%WINDRV%\Windows\System32\Config\SYSTEM.bak"
if errorlevel 1 (
    echo ERROR: Backup failed. Aborting.
    exit /b 1
)
echo Backup created: SYSTEM.bak
echo.

:: --- Step 3: Load SYSTEM hive ---
echo Loading SYSTEM hive into HKLM\TempHive...
reg load HKLM\TempHive "%WINDRV%\Windows\System32\Config\SYSTEM"
if errorlevel 1 (
    echo ERROR: Failed to load SYSTEM hive. Aborting.
    exit /b 1
)
echo Hive loaded.
echo.

:: --- Step 4: Detect active ControlSet ---
:: reg query prints the DWORD as hex (for example 0x1), so set /a is used
:: to convert it to a plain number before building the key name.
echo Detecting active ControlSet...
set CSNUM=
for /f "tokens=3" %%a in ('reg query HKLM\TempHive\Select /v Current') do set /a CSNUM=%%a
if "%CSNUM%"=="" (
    echo ERROR: Could not determine active ControlSet.
    reg unload HKLM\TempHive
    exit /b 1
)
set CS=ControlSet00%CSNUM%
echo Active ControlSet: %CS%
echo.

:: --- Step 5: Apply ACPI fixes ---
:: No ">" in the echo lines: cmd would treat it as output redirection.
echo Applying ACPI fixes...
set FAILED=
echo - Cloning VMBus to MSFT1000
reg copy HKLM\TempHive\%CS%\Enum\ACPI\VMBus HKLM\TempHive\%CS%\Enum\ACPI\MSFT1000 /s /f || set FAILED=1
echo - Cloning Hyper_V_Gen_Counter_V1 to MSFT1002
reg copy HKLM\TempHive\%CS%\Enum\ACPI\Hyper_V_Gen_Counter_V1 HKLM\TempHive\%CS%\Enum\ACPI\MSFT1002 /s /f || set FAILED=1
echo.

:: --- Step 6: Unload hive ---
echo Unloading SYSTEM hive...
reg unload HKLM\TempHive
echo Hive unloaded.
echo.

echo ============================================================
if defined FAILED (
    echo WARNING: One or more reg copy commands failed.
    echo Review the output above before rebooting the VM.
) else (
    echo FIX COMPLETE
    echo You may now reboot the VM.
)
echo ============================================================
echo.
pause
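Since the script leaves a SYSTEM.bak next to the hive, undoing the change is a matter of copying that file back from the recovery environment. The following is only a sketch of such a rollback, not part of the original script: the drive letter in WINDRV is an assumption you must set to the volume the fix script reported, the SYSTEM.patched name is made up for illustration, and the hive must not be loaded (run reg unload HKLM\TempHive first if it is).

```batch
@echo off
:: Rollback sketch: restore the SYSTEM hive backup made in Step 2.
:: Assumption: WINDRV points at the guest's Windows volume.
set WINDRV=C:
set CFG=%WINDRV%\Windows\System32\Config
if not exist "%CFG%\SYSTEM.bak" (
    echo ERROR: No SYSTEM.bak found in %CFG%.
    exit /b 1
)
:: Keep the patched hive under a hypothetical name for later comparison.
copy /Y "%CFG%\SYSTEM" "%CFG%\SYSTEM.patched"
copy /Y "%CFG%\SYSTEM.bak" "%CFG%\SYSTEM"
if errorlevel 1 (
    echo ERROR: Restore failed.
    exit /b 1
)
echo SYSTEM hive restored from SYSTEM.bak.
```

Keep in mind that running the fix script a second time overwrites SYSTEM.bak with the already patched hive, so copy the first backup somewhere safe if you expect to need it.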

Better to do this proactively; I have a PowerShell solution on GitHub that also includes the above .cmd script. The Invoke-TestAndFixHyperV2025ReadinessForLegacyVMs.ps1 script can handle virtual machines that are online, before you move them to Hyper-V 2025. https://github.com/WorkingHardInIT/Invoke-TestAndFixHyperV2025ReadinessForLegacyVMs

But I cannot migrate or upgrade yet!

I call bullshit on that claim in 99% of cases. And if it is not bullshit, you really need to get your act together and work on fixing your apps and vendors so you never get into such a mess again.

Conclusion

Tech debt. You know, that thing every IT manager and department has been preventing or solving for the last 30 years? It is still very much around, despite all that ITIL, risk, and change management, or maybe even because of all that talk and very little action.

Sometimes, saving the day isn’t about deploying the latest and greatest tech; it’s about diving into the deepest, darkest corners of the OS and tricking it into working just one more time. There are no guarantees, and this is a ticking time bomb.

I bought these people some time. Now they need to get working! I also kindly suggested they should read their backup vendors’ support statements 😉.