Imagine you were a storage vendor until a few years ago: raking in the big money with profit margins unseen by any other hardware in the past decade, and living it up along Las Vegas Boulevard like there is no tomorrow. To describe your days only a continuous “WEEEEEEEEEEEEEE” will suffice.

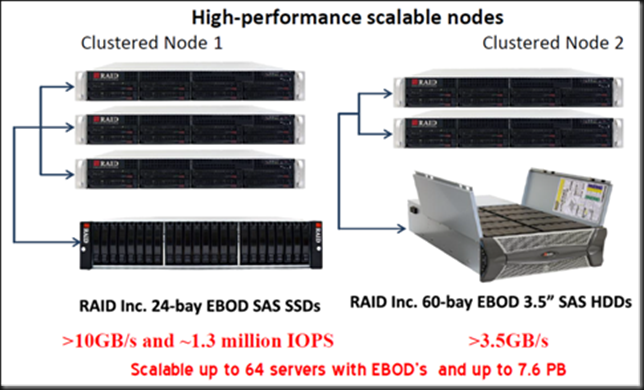

Trying to make it through the economic recession with fewer Ferraris has been tough enough. Then in August 2012 Windows Server 2012 RTMs and introduces Storage Spaces, SMB 3.0 and Hyper-V Replica. You dismiss those as toy solutions while demos of a few hundred thousand to over a million IOPS on the cheap, with a couple of Windows boxes and some alternative storage configurations, pop up left and right. Not even a year later Windows Server 2012 R2 is unveiled and guess what? The picture below is what your future dreams as a storage vendor could start to look like more and more every day, while an ice cold voice sends shivers down your spine.

“And I looked, and behold a pale horse: and his name that sat on him was Death, and Hell followed with him.”

OK, the theatrics above got your attention, I hope. If Microsoft keeps up this pace, traditional OEM storage vendors will need to improve their value offerings. My advice to all OEMs is to embrace SMB 3.0 & Storage Spaces. If you’re not going to help deliver it to your customers, someone else will. Sure, it might eat at the profit margins of some of your current offerings. But perhaps those are too expensive for what they need to deliver, and people only buy them because there are no alternatives. Or perhaps they just don’t buy anything because the economics are out of whack. Well, alternatives have arrived, and more than that: this also paves the path for projects that were previously economically unfeasible. So that’s a whole new market to explore. Will the OEM vendors act & do what’s right? I hope so. They have the distribution & support channels already in place. It’s not a threat, it’s an opportunity! Change is upon us.

What do we have in front of us today?

- Read Cache? We got it, it’s called CSV Cache.

- Write cache? We got it, shared SSDs in Storage Spaces.

- Storage Tiering? We got it in Storage Spaces

- Extremely great data protection even against bit rot and on the fly repairs of corrupt data without missing a beat. Let me introduce you to ReFS in combination with Storage Spaces now available for clustering & CSVs.

- Affordable storage both in capacity and performance … again, meet Storage Spaces.

- UNMAP to the storage level. Storage Spaces has this already in Windows Server 2012.

- Controllers? Are there still SAN vendors not using SAS for storage connectivity between disk bays and controllers?

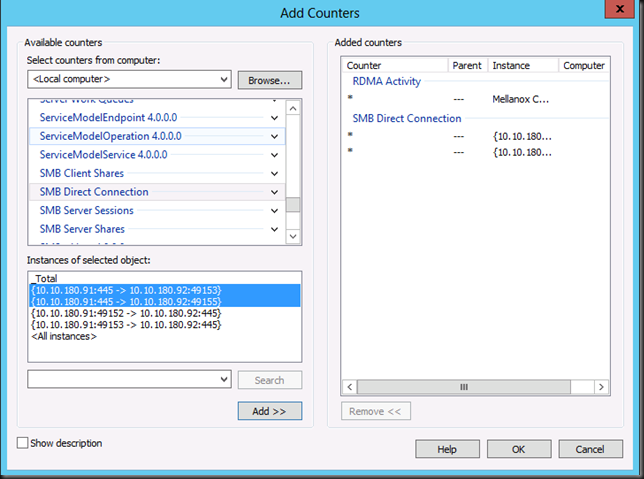

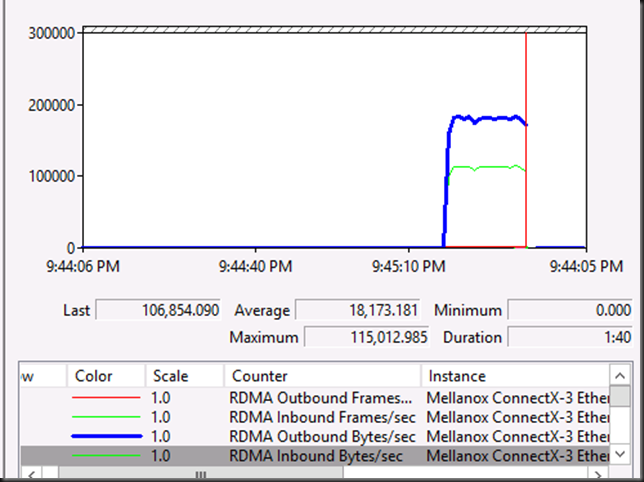

- Host connectivity? RDMA, baby. iWARP, RoCE, InfiniBand. That PCIe 3.0 slot better move on to 4.0 if it doesn’t want to melt under the IOPS …

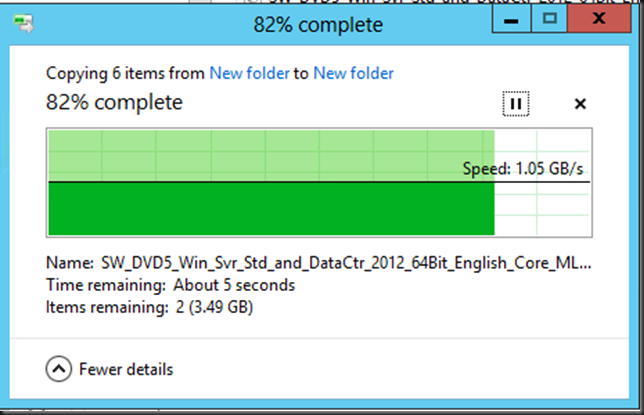

- Storage fabric? Hello 10Gbps (and better) at a fraction of the cost of ridiculously expensive Fibre Channel switches and at amazingly better performance.

- Easy to provision and manage storage? SMB 3.0 shares.

- Scale up & scale out? SMB 3.0 SOFS & the CSV network.

- Protection against disk bay failure? Yes, Storage Spaces has this & it’s not space inefficient either. Heck, some SAN vendors don’t even offer this.

- Delegation capabilities of storage administration? Check!

- Easy in-guest clustering? Yes, via SMB 3.0 but now also shared VHDX! That’s a biggie, people!

- Hyper-V Replica = free, effective and easy

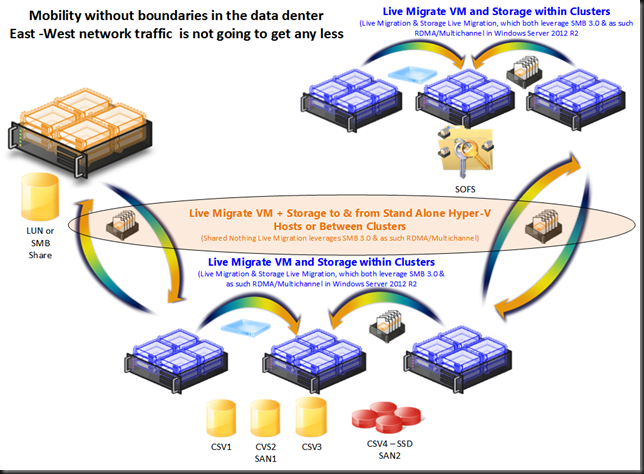

- Total VM mobility in the data center, so SAN based solutions become less important. We’ve broken out of the storage silos …
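The quip about the PCIe slot in the host connectivity bullet can be checked with some back-of-the-envelope arithmetic. The sketch below is only that: it assumes an x8 slot and dual-port adapters (neither is stated above), uses the published per-lane transfer rates and 128b/130b line encoding for PCIe 3.0 and 4.0, and ignores protocol overhead. The function names are made up for illustration.

```python
# Rough check: can a PCIe slot feed a NIC at line rate?
# Assumptions (not from the post): x8 slot, dual-port cards, no protocol overhead.

# Per-lane transfer rate in GT/s for each PCIe generation.
TRANSFER_RATE_GT_S = {3: 8.0, 4: 16.0}

# PCIe 3.0 and 4.0 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gbps(generation: int, lanes: int) -> float:
    """Usable bandwidth of a slot in Gbit/s, per direction, after line encoding."""
    return TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY * lanes


def slot_can_feed(generation: int, lanes: int, port_gbps: float, ports: int) -> bool:
    """True if the slot has at least as much bandwidth as the NIC ports combined."""
    return pcie_bandwidth_gbps(generation, lanes) >= port_gbps * ports


if __name__ == "__main__":
    for gen in (3, 4):
        bw = pcie_bandwidth_gbps(gen, lanes=8)
        ok = slot_can_feed(gen, lanes=8, port_gbps=40, ports=2)
        print(f"PCIe {gen}.0 x8: {bw:.2f} Gbit/s, feeds dual-port 40Gbps: {ok}")
```

Under these assumptions a single 40Gbps port fits in a PCIe 3.0 x8 slot, but two ports at line rate do not, which is roughly the point of the remark.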

You can’t seriously mean the “Windoze Server” can replace a custom designed SAN?

Let’s say that it’s true and it isn’t as optimized as a dedicated storage appliance. So what? Add another 10 commodity SSD units at the cost of one OEM SSD and make your storage fly. Windows Server 2012 can handle the IOPS, the CPU cycles and the memory demands in both capacity and speed, together with network performance that scales beyond what most people need. I’ve talked about this before in Some Thoughts Buying State Of The Art Storage Solutions Anno 2012. The hardware is a commodity today. What if Windows can and does do the software part? That will wake a storage vendor up in the morning!

Whilst not perfect yet, all Microsoft has to do is develop Hyper-V Replica further. Together with snapshotting & replication capabilities in Storage Spaces, this would make for a very cost-effective and complete solution for backups & disaster recovery. Cheaper & cheaper 10Gbps makes this feasible. SAN vendors today have another bonus left, ODX. How long will that last? ASICs, you say? Cool gear, but at what cost when parallelism & x64 8 core CPUs are the standard and very cheap? My bet is that Microsoft will not stop here but come back to throw some dirt on a part of the classic storage world’s coffin in vNext. Listen, I know about the fancy replication mechanisms, but in a virtualized data center the mobility of VMs over the network is a fact. 10Gbps, 40Gbps, RDMA & Multichannel in SMB 3.0 put this in our hands. Next to that, application level replication is gaining more and more traction and many apps are providing high availability in a “shared nothing” fashion (SQL/Exchange with their database availability groups, Hyper-V, DFS-R, …). The need for the storage to provide replication is diminishing for many scenarios. Alternatives are here. Less visible than Microsoft, but there are others who know there are better economies to storage: http://blog.backblaze.com/2011/07/20/petabytes-on-a-budget-v2-0revealing-more-secrets/.

The days when storage vendors offered 85% discounts on hopelessly overpriced storage and still made a killing and a Las Vegas trip are ending. Partners and resellers who just grab 8% of that (and hence benefit from overselling as much as possible) will learn, just like with servers and switches, that they can’t keep milking that cash cow forever. They need to add true and tangible value. I’ve said it before: too many VARs have left out the VA for too long now. Hint: the more they state they are not box movers, the bigger the risk that they are. True advisors are discussing solutions & designs. We need that money to invest in our dynamic “cloud” like data centers, where the ROI is better. Trust me, no one will starve to death because of this; we’ll all still make a living. SANs are not dead. But their role & position is changing. The storage market is in flux right now and I’m very interested in what will happen over the next years.

Am I a consultant trying to sell Windows Server 2012 R2 & System Center? No, I’m a customer. The kind you can’t sell to that easily. It’s my money & livelihood on the line and I demand Windows Server 2012 (R2) solutions that get me the best bang for the buck. Will you deliver them and make money by adding value, or do you want to stay in the denial phase? Ladies & Gentlemen storage vendors, this is your wake-up call. If you really want to know for whom the bell is tolling, it tolls for thee. There will be a reckoning, and either you’ll embrace these new technologies to serve your customers or they’ll get their needs served elsewhere. Banking on customers to be and remain clueless is risky. The interest in Storage Spaces is out there and it’s growing fast. I know several people actively working on solutions & projects.

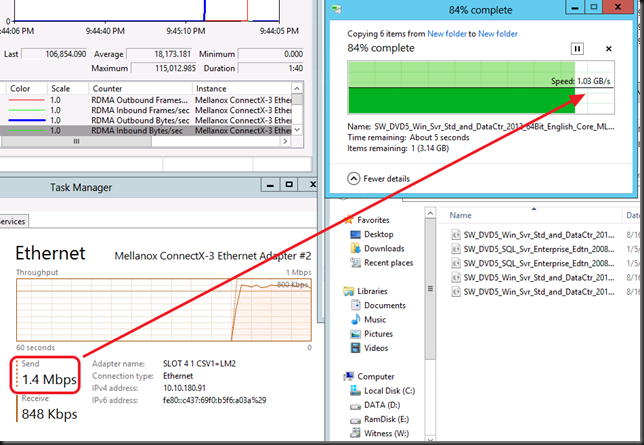

You like what you see? Sure, IOPS are not the end game and are a bit of a “simplistic” way to look at storage performance, but that goes for all marketing spin from all vendors.

Can anyone ruin this party? Yes, Microsoft themselves perhaps, if they focus too much on delivering this technology only to the hosting and cloud providers. If on the other hand they make sure there are feasible, realistic and easy channels to get it into the hands of “on premise” customers all over the globe, it will work. Established OEMs could be that channel, but by the looks of it they’re in denial and might cling to the past, hoping things won’t change. That would be a big mistake, as embracing this trend will open up new opportunities, not just threaten existing models. The Asia Pacific is just one region that is full of eager businesses with no vested interests in keeping the status quo. Perhaps this is something to consider? And for the record, I do buy and use SANs (high-end, mid-market, or simple shared storage). Why? It depends on the needs & the budget. Storage Spaces can help balance those even better.

Is this too risky? No, start small and gain experience with it. It won’t break the bank but might deliver great benefits. And if not … there are a lot of storage options out there, don’t worry. So go on.