Introduction

The goal was to make sure the KempTechnologies LoadMaster Application Delivery Controller was capable of handling the traffic to all load-balanced virtual machines in a high-volume data and compute environment. Needless to say, the solution had to be highly available.

A highly redundant Application Delivery Controller Setup with KempTechnologies

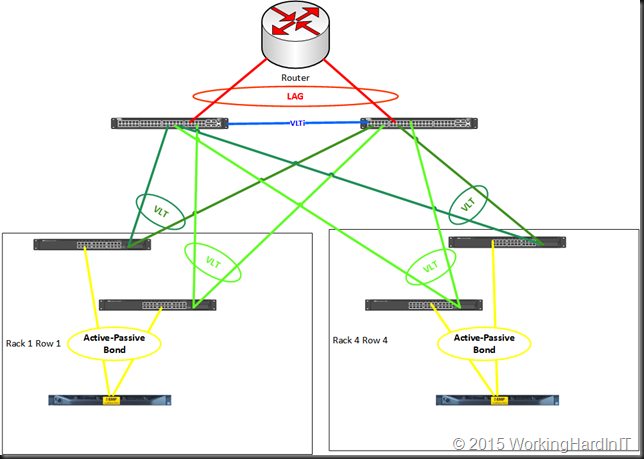

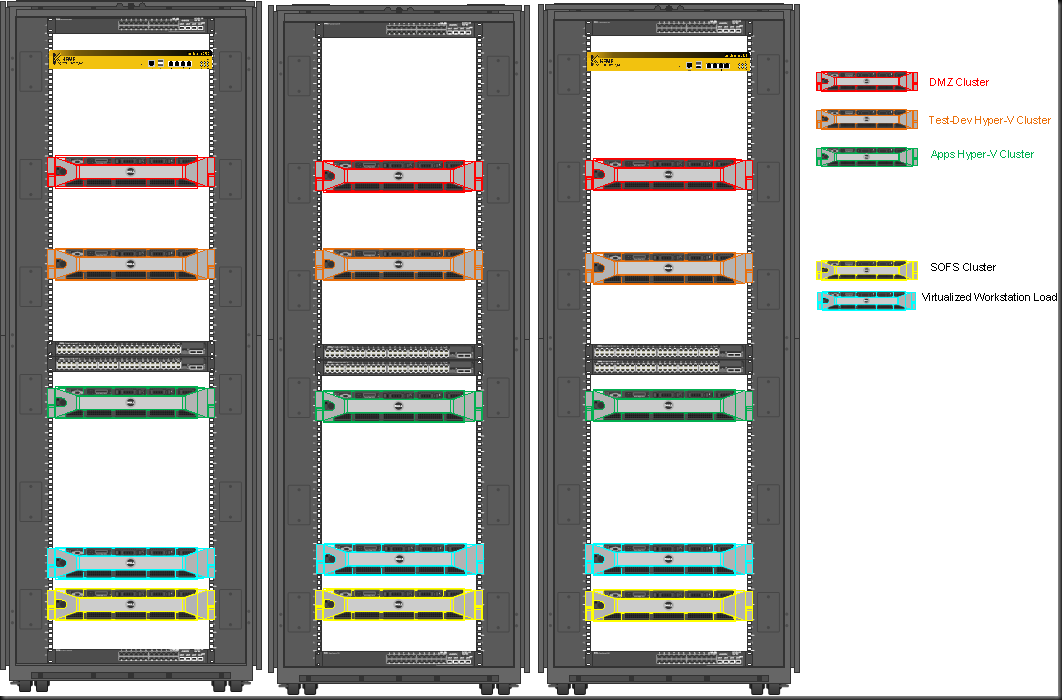

The environment offers rack and row as failure units in power, networking and compute. Hyper-V cluster nodes are spread across racks in different rows. Networking is highly to continuously available, allowing for planned and unplanned maintenance as well as the failure of switches. All racks have redundant PDUs that are remotely managed over Ethernet. There is a separate out-of-band network with remote access.

The 2 Kemp LoadMasters are each mounted in a different row and a different rack to spread the risk and maintain high availability. Eth0 & eth2 are in an active-passive bond for a redundant management interface; eth1 is used to provide a secondary backup link for HA. These use the switch-independent redundant switches of the rack, which also uplink (VLT) to the Force10 switches (themselves spread across racks and rows). The two 10 Gbps ports are in an active-passive bond to trunked ports of the two redundant, switch-independent 10 Gbps switches in the rack. So we also have protection against port or cable failures.

A tip: with DELL switches, use TRUNK for the port mode, not GENERAL.

This design gives us a lot of capabilities. We have redundant networking for all networks. We have an active-passive LoadMaster pair, which means:

- Failover when the active one fails

- Firmware upgrades that do not interrupt service

- The rack is the failure domain. As each rack is in a different row we also mitigate “localized” issues (power, maintenance affecting the rack, …)
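The failure-domain rule in the list above can be checked mechanically. The following is a minimal Python sketch, not anything the LoadMaster itself provides: the `Placement` type, the node names, and the row and rack labels are all hypothetical, and it assumes rack labels are only unique within a row. It simply reports any pair of HA nodes that share a row or a rack.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Placement:
    """Hypothetical record of where one HA node is mounted."""
    node: str
    row: str
    rack: str


def shared_failure_domains(placements):
    """Return (node_a, node_b, domain) for every pair sharing a row or a rack."""
    conflicts = []
    for a, b in combinations(placements, 2):
        if a.row == b.row:
            conflicts.append((a.node, b.node, "row"))
            # Assumption: rack labels are only unique within a row,
            # so racks are compared only when the rows already match.
            if a.rack == b.rack:
                conflicts.append((a.node, b.node, "rack"))
    return conflicts


# Hypothetical placement of the two LoadMasters: different row, different rack.
pair = [
    Placement("lm-a", row="A", rack="03"),
    Placement("lm-b", row="C", rack="07"),
]
print(shared_failure_domains(pair))  # prints []
```

An empty result means no single rack or row failure can take out both nodes of the pair.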

Combine this with the fact that these are bare metal LoadMasters (DELL R320 with iDRAC, see "Remote Access to the KEMP R320 LoadMaster (DELL) via DRAC Adds Value") and we have out-of-band management even when we have network issues. The racks are provisioned with PDUs that are managed over Ethernet, so we can even cut the power remotely if needed to resolve issues.

Conclusion

The results are very good and we get “zero ping loss” failover between the LoadMaster Nodes during testing.
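For readers who want to repeat this kind of test, here is a minimal Python sketch of a loss counter. It is an assumption-laden stand-in, not the method used for the result above: instead of ICMP ping it uses a TCP connect as the probe (ICMP needs raw sockets and elevated privileges), and the function names `tcp_probe` and `count_lost_probes` are made up for illustration.

```python
import socket
import time


def tcp_probe(host, port, timeout=1.0):
    """Return a zero-argument callable reporting whether a TCP connect succeeds."""
    def probe():
        try:
            with socket.create_connection((host, port), timeout=timeout):
                return True
        except OSError:
            # Covers refused connections, timeouts and unreachable networks.
            return False
    return probe


def count_lost_probes(probe, attempts, interval=0.0):
    """Call probe() `attempts` times and return how many probes failed."""
    lost = 0
    for _ in range(attempts):
        if not probe():
            lost += 1
        if interval:
            time.sleep(interval)
    return lost
```

One could start something like `count_lost_probes(tcp_probe("192.0.2.10", 443), attempts=100, interval=0.2)` against a virtual service (the address and port here are placeholders), trigger a failover while it runs, and check whether the returned count stays at zero.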

We have a solid, redundant Application Delivery Controller deployment that does not break the switch-independent TOR setup that exists in all racks/rows. It's active-passive at the controller level and active-passive at the network (bonding) level. If that is an issue, the TOR switches should be configured as an MLAG, which would enable LACP for the bonded interfaces. At the LoadMaster level, the nodes could be configured as a cluster to get an active-active setup, if the restrictions this imposes are not a concern in your environment.

Important Note:

Some high-end switches, such as the Force10 series with VLT, support attaching single-homed devices (devices not attached to both members of a VLT). While VLT and MLAG are very similar, MLAGs come with their own needs & restrictions. Not all switches that support MLAG can handle single-homed devices. The obvious solution is not to attach single-homed devices, but that is not always a possibility with certain devices. That means other solutions are needed. One is adding switches, which could significantly raise the number of switches needed and defeat the economics of affordable redundant TOR networking (cost of switches, power, rack space, operations, …). Another is leveraging MSTP and configuring a dedicated MSTP network for a VLAN, which also might not always be possible or feasible, as those single-homed devices might very well need to be in the same VLANs as the dual-homed ones. Stacking would also solve the above issue, as the MLAG restrictions do not apply. I do not like stacking, however, as it breaks the switch-independent redundant network design, even during planned maintenance, because a firmware upgrade brings down the entire stack.

One thing that is missing is the ability to fail over when the network fails. There is no concept of a “protected” network. Such a feature could help mitigate cases where a virtual service is down due to network issues: the LoadMaster could fail over to see whether the other node has more success. For certain scenarios this could prevent long periods of downtime.
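To make the idea concrete, here is a purely hypothetical Python sketch of what such a decision rule might look like. It is not a LoadMaster feature or API: the function name, its parameters, and the hold-down timer are all assumptions made for illustration. The standby takes over only if it can reach strictly more of the "protected" services than the active node, and only after a hold-down period, to avoid flapping.

```python
def should_fail_over(active_reachable, standby_reachable, protected,
                     seconds_since_last_failover, hold_down=60.0):
    """Decide whether the standby node should take over (hypothetical rule).

    active_reachable / standby_reachable: sets of service names each node
    can currently reach. protected: the set of services whose reachability
    counts. All names and the hold-down default are assumptions.
    """
    if seconds_since_last_failover < hold_down:
        return False  # too soon after the previous failover; avoid flapping
    active_score = len(protected & active_reachable)
    standby_score = len(protected & standby_reachable)
    # Fail over only when the standby is strictly better off.
    return standby_score > active_score
```

Requiring a strict improvement means that a network failure affecting both nodes equally does not trigger a pointless failover.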