This question came up recently, once again, and deserves a little blog post. If you want to see the benefits of ODX inside a virtual machine, you’ll need to connect your virtual disks to a vSCSI controller or use one of the other supported options: iSCSI, vFC, an SMB 3 file share or a pass-through disk. But unless you have a really good reason to use pass-through disks, don’t. They limit you in too many ways.

Basically, in generation 1 virtual machines, which boot from a vIDE controller, this rules out the system disk. So the tip here is to store the data that’s moved around in or between virtual machines on vSCSI attached VHD or (preferably) VHDX virtual disks. If you can use generation 2 virtual machines, you’ll be able to leverage ODX on the system partition as well, as they boot from vSCSI.

It goes without saying that any virtual disks involved need to be stored on ODX capable LUNs, accessed via iSCSI, FC, FCoE, an SMB 3 file share or SAS, for ODX to be available to the virtual machine.

Also beware that ODX only works on NTFS formatted volumes. The files cannot be compressed or encrypted. Sparse files are not supported either. And finally, the volume cannot be BitLocker protected.
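To make the conditions above easier to check at a glance, here is a minimal Python sketch that simply encodes them as a checklist. It does not query Windows or Hyper-V in any way: the function name `odx_blockers` and its parameters are made up for illustration, and you would have to feed it the facts about your own disk and files yourself.

```python
# Attachment types this post lists as ODX capable inside a virtual machine.
# The spelling of these labels is an assumption made for this sketch.
ODX_CAPABLE_ATTACHMENTS = {"vscsi", "iscsi", "vfc", "smb3", "pass-through"}


def odx_blockers(attachment, file_system="NTFS", bitlocker=False,
                 compressed=False, encrypted=False, sparse=False):
    """Return the reasons ODX would not kick in (hypothetical helper).

    An empty list only means none of the conditions from this post are
    violated; the underlying LUN still has to be ODX capable.
    """
    reasons = []
    if attachment.lower() not in ODX_CAPABLE_ATTACHMENTS:
        reasons.append(f"{attachment} attached disks are not ODX capable")
    if file_system.upper() != "NTFS":
        reasons.append("the volume is not NTFS")
    if bitlocker:
        reasons.append("the volume is BitLocker protected")
    if compressed:
        reasons.append("the file is compressed")
    if encrypted:
        reasons.append("the file is encrypted")
    if sparse:
        reasons.append("the file is sparse")
    return reasons


# A data VHDX on a vSCSI controller with plain files: nothing blocks ODX.
print(odx_blockers("vSCSI"))
# The system disk of a generation 1 virtual machine sits on vIDE.
print(odx_blockers("vIDE"))
```

The point of returning a list rather than a plain yes/no is that you immediately see every reason a copy would fall back to a normal, non-offloaded transfer.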

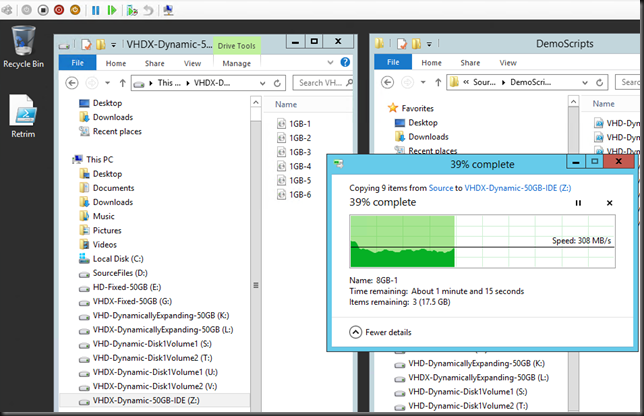

Here’s a screenshot of a copy of 30GB worth of ISO files to a VHDX attached to a vSCSI controller:

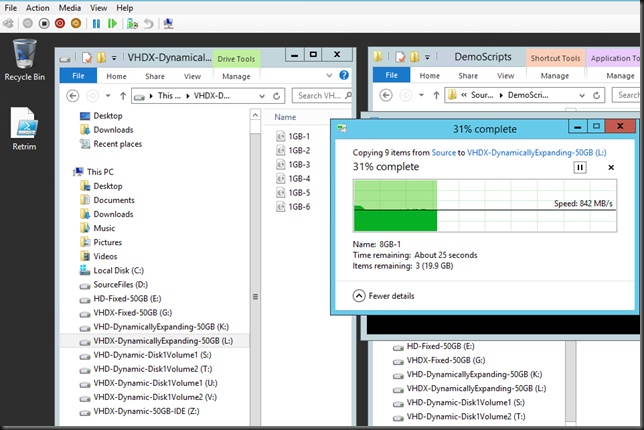

Here’s a screenshot of a copy of 30GB worth of ISO files to a VHDX attached to a vIDE controller.

You’ll notice quite a difference. Depending on the load on the controllers/SAN, the copy to the VHDX on the vIDE controller is on average 3 times slower than the same copy to a VHDX on a vSCSI controller.