Introduction

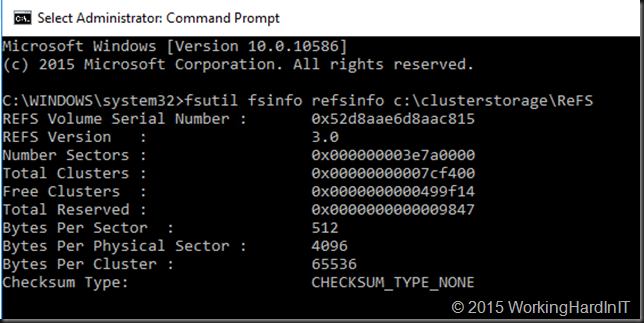

With Windows Server 2016 we also get a new version of ReFS, which I’ll designate aptly as ReFS vNext. It offers a few new abstractions that allow applications and virtualization to control how files are created, copied and moved. The features that are crucial to this are block cloning and data tiering.

Block cloning lets you clone any block of a file into any other block of the same file or of another file. These operations are low-cost metadata actions: block cloning remaps logical clusters (physical locations on a volume) from the source region to the destination region. Combined with “allocate on write”, this ensures isolation between those regions. In other words, files will not overwrite each other’s data if they happen to reference the same region and one of them writes to it. Likewise, within a single file, data written to one region will not pop up in the other region. The MSDN page on block cloning explains this further.

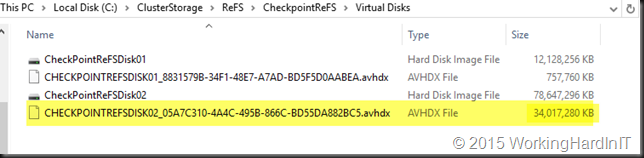

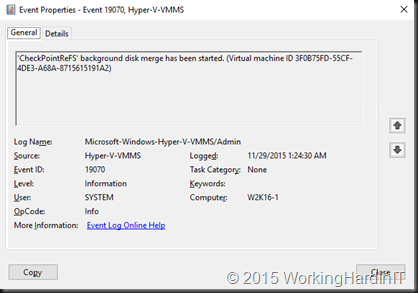

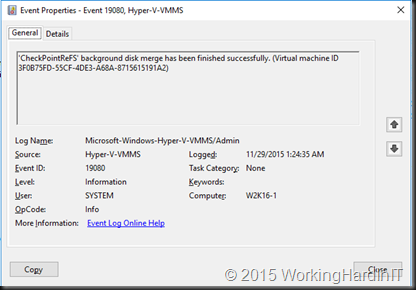
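To make the remapping and “allocate on write” behavior described above a bit more tangible, here is a minimal sketch in Python. It is a toy model, not how ReFS is implemented: `ToyVolume`, `ToyFile` and `clone_block` are hypothetical names I made up for illustration, and the only assumptions are the ones from the text (a clone is a metadata remap with reference counting, and a write to a shared cluster lands in a freshly allocated cluster).

```python
from dataclasses import dataclass, field


@dataclass
class ToyVolume:
    """Toy volume: cluster contents plus a reference count per cluster."""
    clusters: dict = field(default_factory=dict)  # logical cluster number -> bytes
    refcount: dict = field(default_factory=dict)  # logical cluster number -> references
    next_lcn: int = 0

    def allocate(self, data: bytes) -> int:
        lcn = self.next_lcn
        self.next_lcn += 1
        self.clusters[lcn] = data
        self.refcount[lcn] = 1
        return lcn


@dataclass
class ToyFile:
    """Toy file: an ordered list of the clusters that hold its blocks."""
    volume: ToyVolume
    extents: list = field(default_factory=list)  # block index -> logical cluster number

    def append(self, data: bytes) -> None:
        self.extents.append(self.volume.allocate(data))

    def read(self, index: int) -> bytes:
        return self.volume.clusters[self.extents[index]]

    def write(self, index: int, data: bytes) -> None:
        lcn = self.extents[index]
        if self.volume.refcount[lcn] > 1:
            # Shared cluster: allocate on write, so other references keep the old data.
            self.volume.refcount[lcn] -= 1
            self.extents[index] = self.volume.allocate(data)
        else:
            # Sole owner: overwrite in place.
            self.volume.clusters[lcn] = data


def clone_block(src: ToyFile, src_index: int, dst: ToyFile, dst_index: int) -> None:
    """Metadata-only clone: point dst's block at src's cluster, copying no data."""
    if src.volume is not dst.volume:
        raise ValueError("block cloning only works within one volume")
    vol = src.volume
    new_lcn = src.extents[src_index]
    old_lcn = dst.extents[dst_index]
    if new_lcn == old_lcn:
        return  # already sharing the same cluster
    vol.refcount[new_lcn] += 1
    vol.refcount[old_lcn] -= 1
    if vol.refcount[old_lcn] == 0:
        # Nobody references the old cluster any more, so release it.
        del vol.clusters[old_lcn]
        del vol.refcount[old_lcn]
    dst.extents[dst_index] = new_lcn


if __name__ == "__main__":
    vol = ToyVolume()
    a, b = ToyFile(vol), ToyFile(vol)
    a.append(b"AAAA")
    b.append(b"BBBB")
    clone_block(a, 0, b, 0)   # b now shares a's cluster
    b.write(0, b"CCCC")       # triggers allocate on write
    print(a.read(0), b.read(0))
```

After the clone both files point at the same cluster. The write to `b` then lands in a new cluster, so `a` still reads its original data. That is the isolation the paragraph above talks about.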

ReFS vNext does not do this for every workload by default. It’s done on behalf of an application that calls block cloning, such as Hyper-V when merging VHDX files. For these purposes, the metadata operations, which count references to regions, make data copies within a volume fast: they avoid reading and writing all the data when creating a new file from an existing one, which would mean a full data copy. They also allow reordering data in a new file, as with checkpoint merging, and they allow for “data projection”, where data can be projected from one area into another without an actual copy. That’s how the speed is achieved.
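As a rough illustration of why a remap-based merge is so much cheaper than a copy-based one, the sketch below compares the two on a toy data structure. Everything here is an assumption for the sake of the example: `merge_with_copy`, `merge_with_remap` and `read_file` are hypothetical helpers, the “store” is just a dictionary of clusters, and reference counting and freeing of old clusters are left out. It is not how Hyper-V or ReFS actually merge checkpoints.

```python
def read_file(store, file_map):
    """Return the file's logical content by following its cluster map."""
    return b"".join(store[lcn] for lcn in file_map)


def merge_with_copy(store, parent_map, child_map):
    """Classic merge: read every changed block and write it into the parent's cluster."""
    bytes_moved = 0
    for index, child_lcn in child_map.items():
        data = store[child_lcn]            # read from the checkpoint file
        store[parent_map[index]] = data    # write into the parent file
        bytes_moved += len(data)
    return bytes_moved


def merge_with_remap(store, parent_map, child_map):
    """Clone-style merge: repoint the parent's blocks at the child's clusters."""
    for index, child_lcn in child_map.items():
        parent_map[index] = child_lcn      # metadata update only
    return 0  # no block data was read or written


if __name__ == "__main__":
    # Parent disk has two blocks; the checkpoint changed block 1 only.
    store = {0: b"P0", 1: b"P1", 2: b"C1"}
    parent_map = [0, 1]
    child_map = {1: 2}
    moved = merge_with_remap(store, parent_map, child_map)
    print(moved, read_file(store, parent_map))
```

Both functions leave the parent with the same logical content. The difference is that the copy-based merge moves every changed byte, while the remap-based merge only touches the cluster map.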

Some of the benefits of ReFS vNext are tied to Storage Spaces Direct, such as the tiering capability combined with erasure coding / parity, which gets the best out of a fast tier and a slower tier without losing too much capacity to multiple full data copies. See Storage Spaces Direct in Technical Preview 4 for more information on this.

I’m still very much a student of all this and I advise you to follow up via blogs and documentation from Microsoft as they become available.

What does it mean?

In the end it’s all about making the best use of available resources: the ones you already have and the ones you will own in the future. This lowers TCO and increases ROI. It’s not just about being fast but also about optimizing the use of capacity while protecting data. There is one golden rule in storage: “Thou shalt not lose data”.

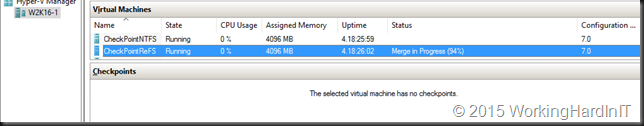

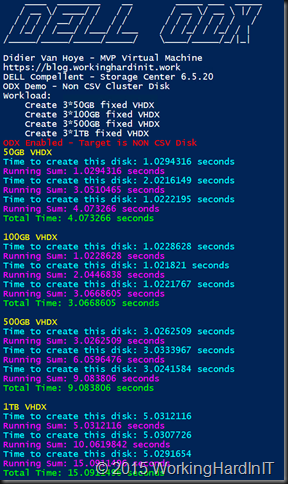

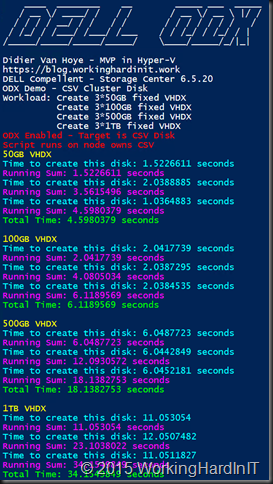

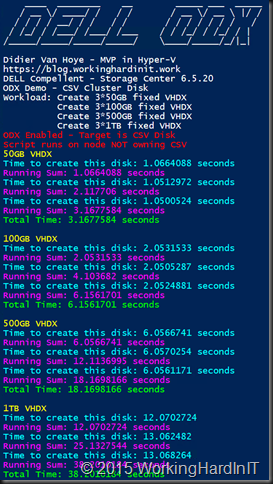

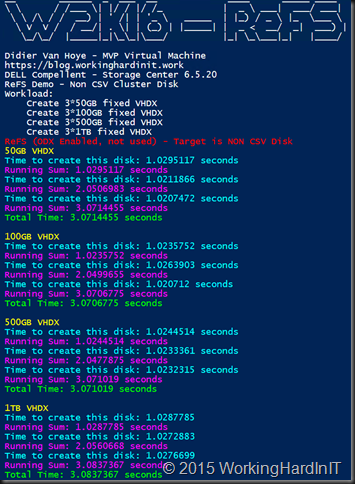

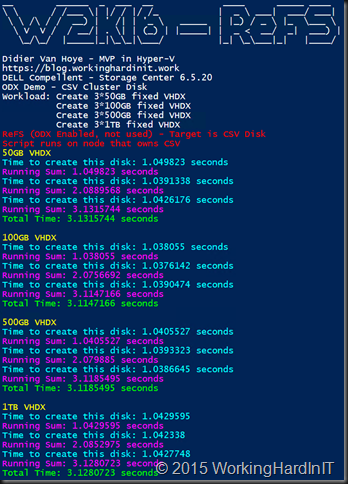

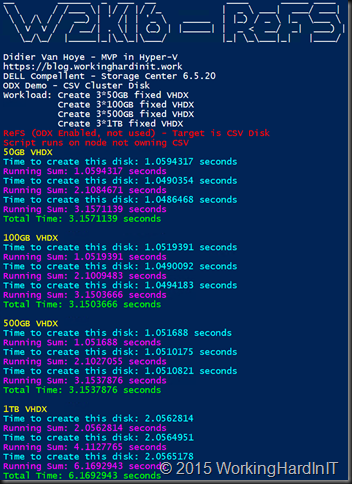

For now, even if you’re not yet in a position to evaluate Storage Spaces Direct, ReFS vNext on existing storage shows a lot of promise as well. I have blogged about file creation speeds (VHDX files) in this blog post: Lightning Fast Fixed VHDX File Creation Speed With ReFS vNext on Windows Server 2016. In another blog post, Accelerated Checkpoint merging with ReFS vNext in Windows Server 2016, you can read about the early results I’ve seen with Hyper-V checkpoint merging in Windows Server 2016 Technical Preview 4. These two examples are pretty amazing, and those results are driven by ReFS metadata operations as well.

Does it replace ODX?

While the results so far are impressive and I’m looking forward to more of this, it does not replace ODX. It complements it. But why would we want that, you might ask, when some early testing suggests it beats ODX in speed? It’s high time to take a look at ReFS vNext Block Cloning and ODX in Windows Server 2016 TPv4.

The reality is that sometimes you probably won’t want ODX to be used, as the capabilities of ReFS vNext will provide better (faster) results. But sometimes ReFS vNext cannot do this. When? Block cloning, for all practical purposes, works within a volume. That means it can only do its magic with data living on the same volume. So when you copy data between two volumes on the same LUN or between volumes on different LUNs, you will not see those speed improvements. For quickly deploying templates stored on another LUN/CSV, it’s not that useful. Likewise, if you were storing your checkpoints on a different LUN because of space or performance issues, you will not see the benefits of ReFS vNext block cloning when merging those checkpoints. So you will have to revise certain design and deployment decisions you made in the past. Sometimes you can do this, sometimes you can’t. But as ODX works at the array level (or beyond in certain federated systems), you can get excellent speeds while copying data between volumes / LUNs on the same server or between volumes / LUNs on different servers. You can also leverage SMB 3.0 to have ODX kick in when it makes sense, to avoid senseless data copies. So ODX has its own strengths and benefits that ReFS vNext cannot touch, and vice versa. But they complement each other beautifully.
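The reasoning above can be condensed into a small decision sketch. This is purely illustrative: `Location`, `CopyPath` and `choose_copy_path` are hypothetical names, the fields are my own simplification, and the rule “same array means ODX is possible” ignores federated systems and any other conditions the real Windows copy engine checks. It only captures the rule of thumb from the text: same ReFS volume means block cloning can help, a different volume on an ODX-capable array means ODX can help, and otherwise you get a regular copy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CopyPath(Enum):
    BLOCK_CLONE = "ReFS block cloning"
    ODX = "ODX offload"
    BUFFERED_COPY = "regular read/write copy"


@dataclass(frozen=True)
class Location:
    """Hypothetical description of where a file lives."""
    volume_id: str
    filesystem: str           # for example "ReFS" or "NTFS"
    array_id: Optional[str]   # None when the storage is not on an ODX-capable array


def choose_copy_path(src: Location, dst: Location) -> CopyPath:
    """Rule-of-thumb choice of the fastest path a copy could take."""
    # Block cloning only works inside a single ReFS volume.
    if src.volume_id == dst.volume_id and src.filesystem == "ReFS":
        return CopyPath.BLOCK_CLONE
    # Simplifying assumption: ODX applies when both ends sit on the same capable array.
    if src.array_id is not None and src.array_id == dst.array_id:
        return CopyPath.ODX
    return CopyPath.BUFFERED_COPY


if __name__ == "__main__":
    vm_disk = Location("CSV1", "ReFS", "array-A")
    template = Location("CSV2", "ReFS", "array-A")
    print(choose_copy_path(vm_disk, vm_disk).value)
    print(choose_copy_path(template, vm_disk).value)
```

Deploying a template from another CSV on the same array falls through to ODX in this sketch, which matches the point that ODX covers the cross-volume cases block cloning cannot.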

So although ReFS vNext demonstrates ODX-like behavior, often outperforming ODX, you cannot just compare the two head-on. They each have their own strengths. Just remember that ReFS vNext actually does support ODX, so when it’s applicable it can be leveraged. That’s one thing I did not understand from the start. This is beginning to sound like an ideal world where ReFS vNext shines whenever its features are the better choice, while it can leverage the strengths of ODX (if the underlying storage array provides it) for those scenarios where ReFS vNext cannot do its magic as described above.

The Future

I’m not the architect at Microsoft working on ReFS vNext. I do know, however, that a bunch of very smart people are working on that file system. They see, hear and listen to our experiments, results, and requests. ReFS is getting a lot of renewed attention in Windows Server 2016 as the preferred file system for Storage Spaces Direct and, as such, for CSVs. Hyper-V is clearly very much on board with leveraging the capabilities of ReFS vNext. The excellent results of that, which we can see in faster VHDX creation/extension and checkpoint merges, are testimony to this. So I’m guessing this file system is far from done and is going places. I’m expecting more and more workloads to start leveraging the ReFS vNext capabilities. I can also see ReFS itself becoming more and more feature complete. For example, Microsoft has now stated that they are working on deduplication for ReFS, although they do not yet have any specifics on release plans. It makes sense that they are doing this. To me, a more feature-complete ReFS being leveraged in ever more use cases is the way forward. For now, we’ll have to wait and see what the future holds, but I am positive, albeit a bit impatient. As always, I’m providing Microsoft with my feedback and wishes. If and when they make sense and are feasible, they probably already have them on their roadmap, or I might give them an idea for a better product, which is good for me as a customer or partner.
