It’s all about application-consistent hardware VSS provider snapshots

I was browsing to see if I could already download Replay Manager 7.8 for our Compellent (SC) SANs. No luck yet, but I did find the release notes. There was a real gem in there about Off Host Backup Jobs with Veeam and Replay Manager 7.8. We’ll get back to that after covering the big news.

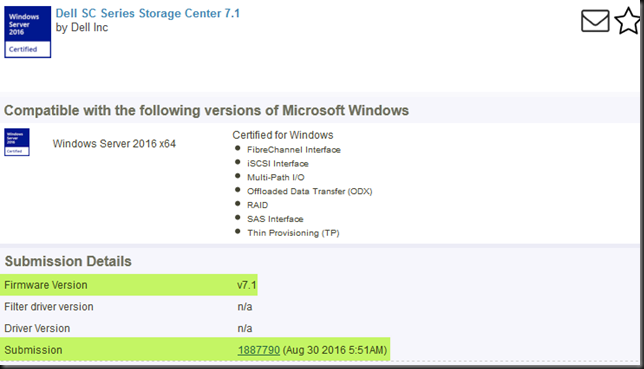

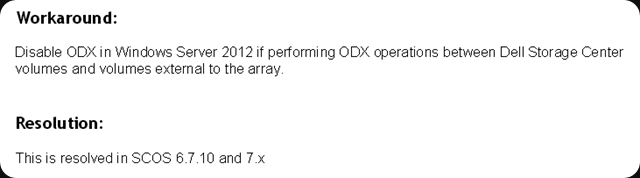

So what kind of goodness is in there? Well, obviously there is the long overdue support for Windows Server 2016, including the Hyper-V role and its features. That is great news: we now have application-consistent hardware VSS provider snapshots on Windows Server 2016. I do not know what took them so long, but they need to get with the program here. I have given this as feedback before, and again at DELL EMC World 2017.

The Compellent is still one of the best “traditional” centralized storage SAN solutions out there, one that punches far above its weight. On top of that, having looked at Unity from DELL EMC, I can tell you that in my humble opinion the Compellent has no competition from it.

Off Host Backup Jobs with Veeam and Replay Manager 7.8

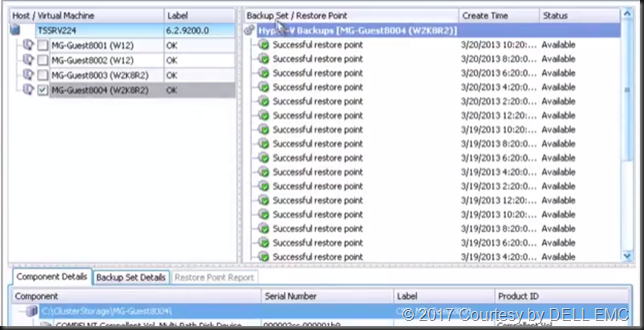

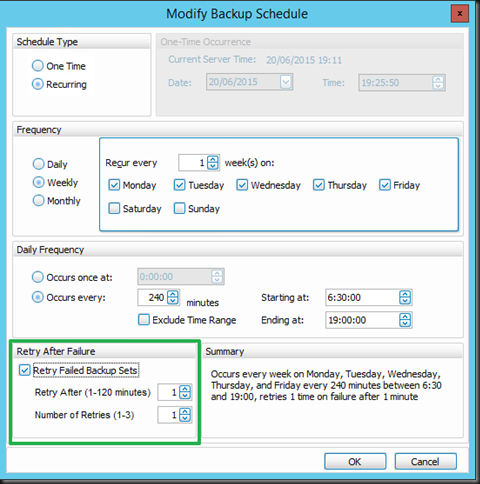

Equally interesting to me, as someone who leverages Compellent and Veeam Backup & Replication with Off Host Proxies (I wrote the FREE WHITE PAPER: Configuring a VEEAM Off Host Backup Proxy Server for backing up a Windows Server 2012 R2 Hyper-V cluster with a DELL Compellent SAN (Fiber Channel)), is the following item listed under Fixed Issues:

RMS-24 Off-host backup jobs might fail during the volume discover scan when using Veeam backup software.

I have Off Host Proxies with transportable snapshots working pretty smoothly, but they have the occasional hiccup. Maybe some of those will disappear with Replay Manager 7.8. I’m looking forward to putting that to the test and rolling forward with Windows Server 2016 on those nodes where we need and want to leverage the Compellent hardware VSS provider. When I do, I’ll let you know the results.