Introduction

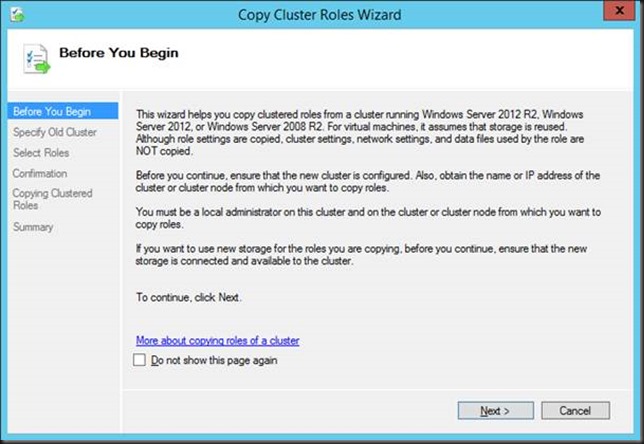

We’ll walk through a transition of a Windows Server 2012 Hyper-V cluster to R2. For this we’ll use the Copy Cluster Roles Wizard (that’s what the Migration Wizard is now called). You have two approaches. You can start with an R2 cluster on new hardware, and you might even use new storage. Or you can do what I did in my lab: evict the nodes one by one and do a node by node migration to the new cluster. How you’ll do this in your environment depends on how many nodes you have, the number of CSVs and what workload they run. This blog post is an illustration of the process, not a detailed migration plan customized for your environment.

Step by Step

1. Preparing the Target node(s)/cluster

Install the OS, then add the Hyper-V role & the Failover Clustering feature on the new or the evicted node. You’ll already have some updates to install, only a week after the preview bits became available. Microsoft is on top of things, that’s for sure.

Configure the networking for the virtual switch, CSV, LM, management and iSCSI (that’s what I use in the lab) on the OS. Nothing you don’t know yet for this part.

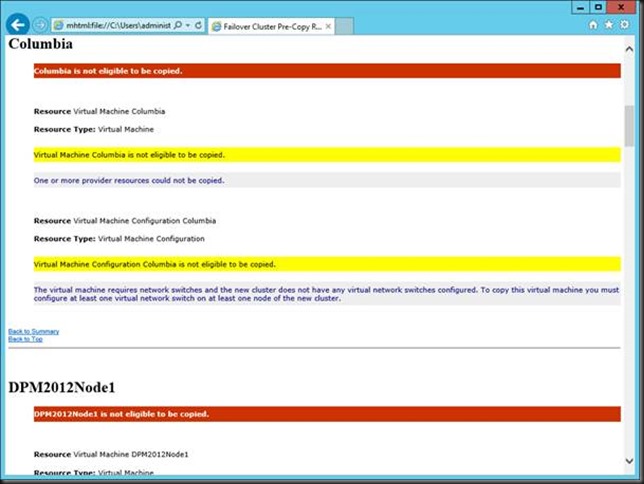

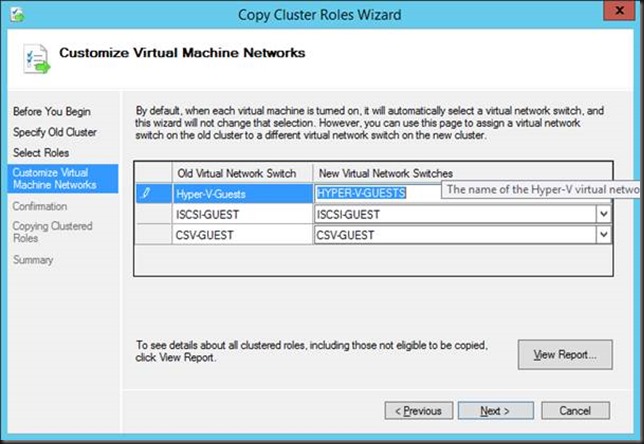

Create a virtual switch in Hyper-V Manager. This is important. If you can, give it the same name as the one on the old cluster. Also note that the virtual switch name is case sensitive. If you forget to create one, you will not be able to copy the Hyper-V virtual machine roles.

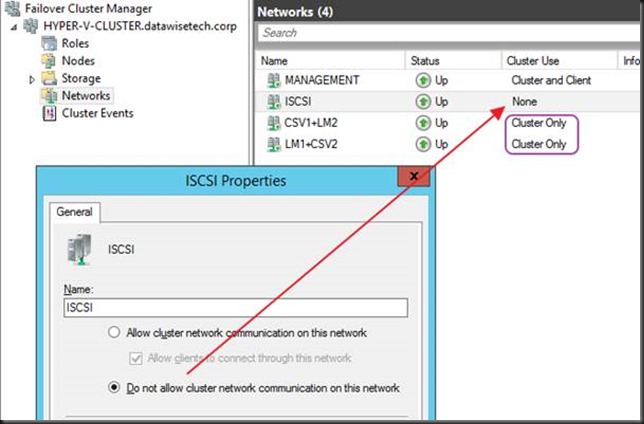

Create a new cluster & configure networking. One of the nice things about R2 is that it is intelligent enough to see what type of connectivity suits each network best, and it defaults to that. It sees that the iSCSI network should be excluded from cluster use and that the CSV/LM network doesn’t need to allow client access.

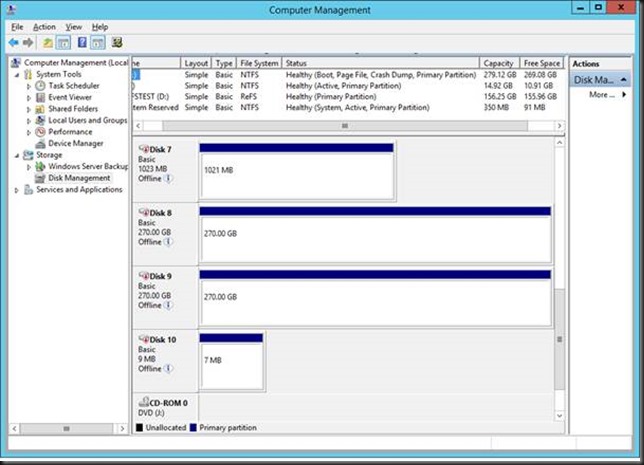

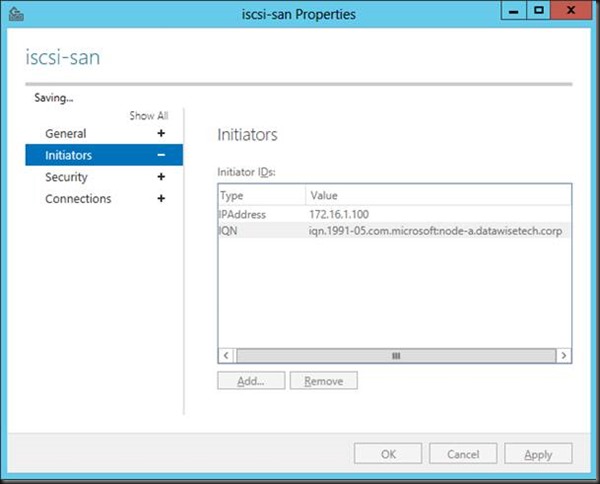

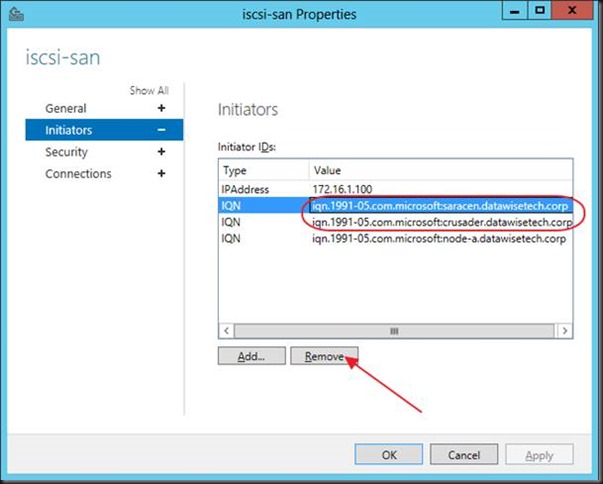
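If you want to double check those defaults against what you intended, a small sketch like the one below can help. It is only an illustration in Python, not something the cluster provides: the network names are made up, the "Management" entry is my assumption about a typical setup, and the `actual` dictionary is assumed to be filled in by hand from what Failover Cluster Manager shows. The numeric values are the ones failover clustering uses for a cluster network's role (0 = none, 1 = cluster only, 3 = cluster and client).

```python
from enum import IntEnum


class ClusterNetworkRole(IntEnum):
    NONE = 0                # excluded from cluster use (e.g. iSCSI)
    CLUSTER_ONLY = 1        # cluster traffic only (e.g. CSV/LM)
    CLUSTER_AND_CLIENT = 3  # cluster traffic plus client access


# Hypothetical network names; adjust to your own naming.
EXPECTED = {
    "iSCSI": ClusterNetworkRole.NONE,
    "CSV-LM": ClusterNetworkRole.CLUSTER_ONLY,
    "Management": ClusterNetworkRole.CLUSTER_AND_CLIENT,  # assumption
}


def role_mismatches(expected, actual):
    """Return {network: (expected_role, actual_role)} for every difference."""
    return {
        name: (want, actual.get(name))
        for name, want in expected.items()
        if actual.get(name) != want
    }


# Values noted down by hand from the new cluster (illustrative).
actual = {"iSCSI": 0, "CSV-LM": 1, "Management": 3}
print(role_mismatches(EXPECTED, actual))
```

An empty result means the defaults match what you planned; anything else is a network to review before you continue.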

On the iSCSI target (a W2K12 RTM box) I remove the evicted node from the iSCSI initiators and add the newly installed cluster node. You can wait to do this out of precaution, but the cluster itself leaves newly detected LUNs off line by default. On top of that, it detects that the LUNs are in use (reservations), so you can’t even bring them on line.

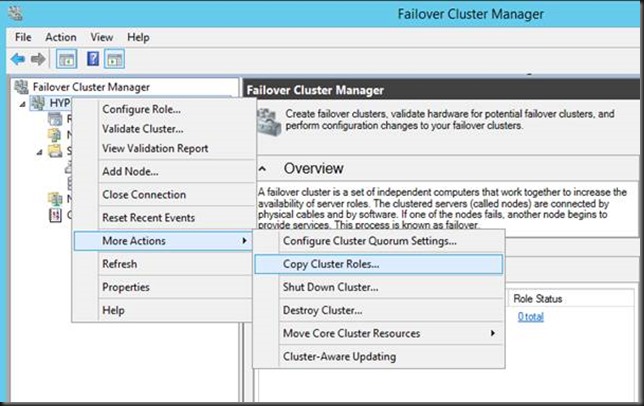

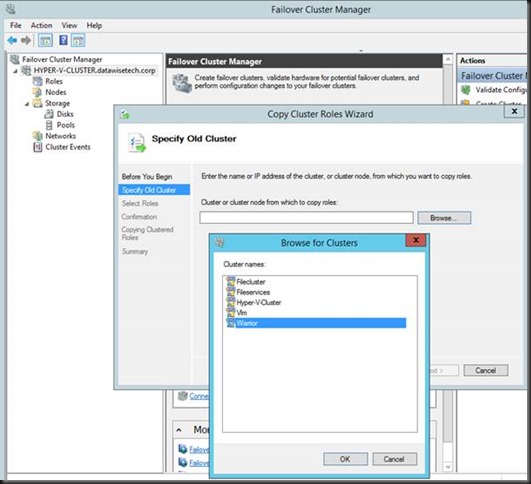

Right-click the cluster and select “Copy Cluster Roles”.

Follow the wizard instructions.

Click Next and then click Browse to select the source cluster (W2K12).

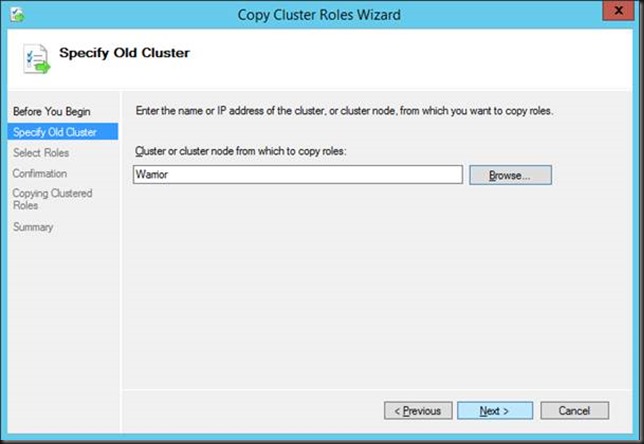

Select “Warrior” and click OK.

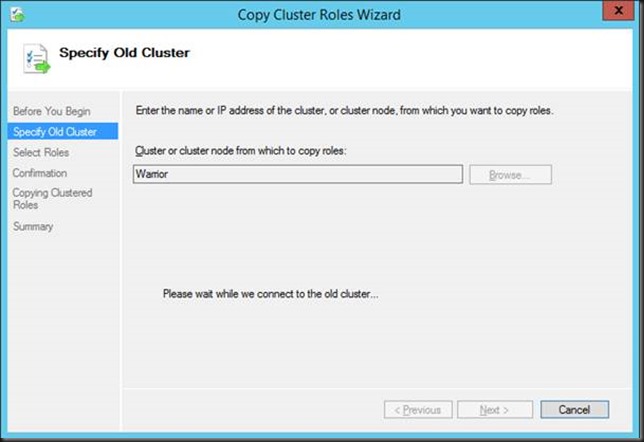

Click Next and see how the connection to the old cluster is being established.

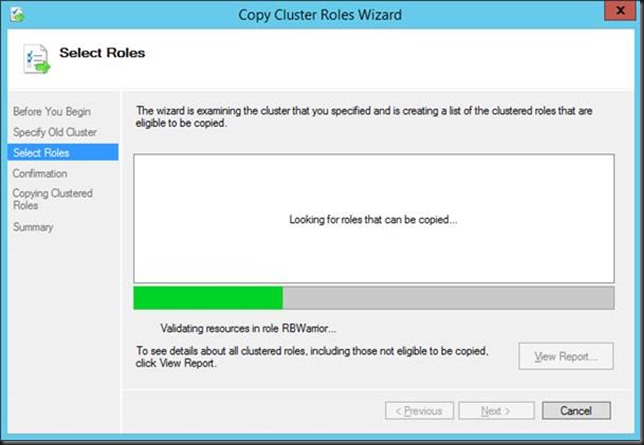

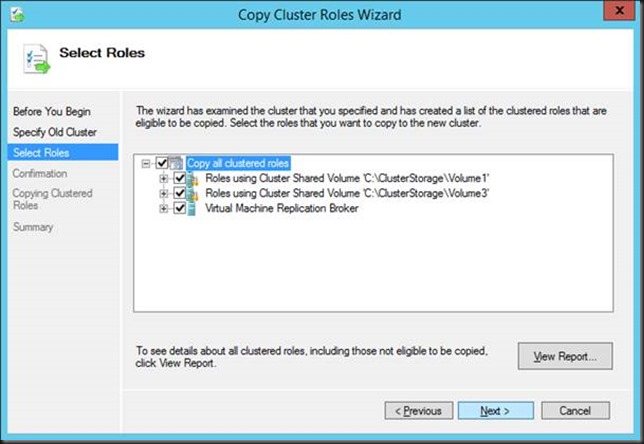

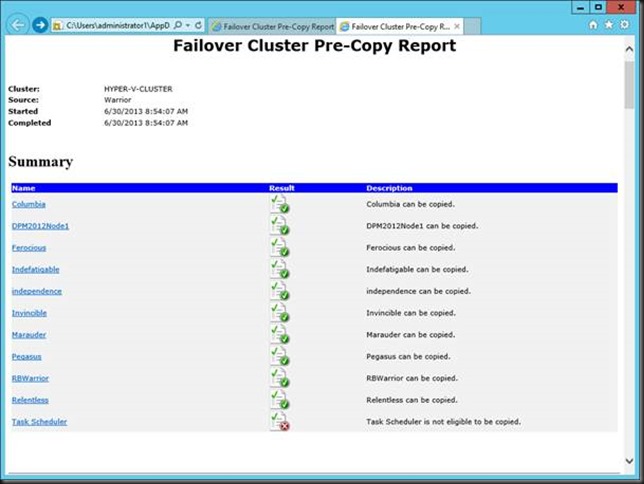

The wizard scans for roles on the old cluster that can be migrated.

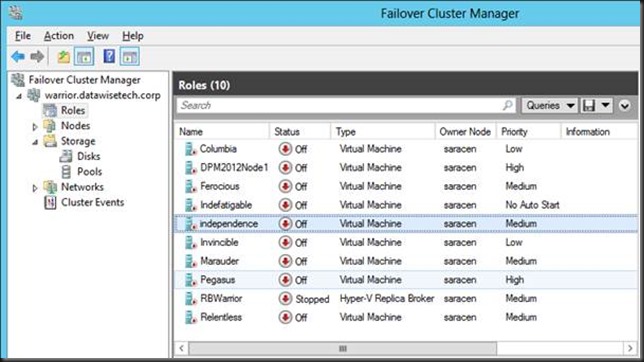

In this example the results are the roles per CSV and the Replication Broker. For migration you can select one of the CSVs and work LUN per LUN, select several of them, or even all. It all depends on your needs and environment. I migrated them all over at once in the lab. In real life I usually do only one or a couple of interdependent LUNs (for example OS, DATA, LOGS, TempDB on separate CSVs of virtualized SQL Servers).

Note that if you did not create a virtual switch you will not see any roles listed on the CSV. That’s why it’s important to define a virtual switch.

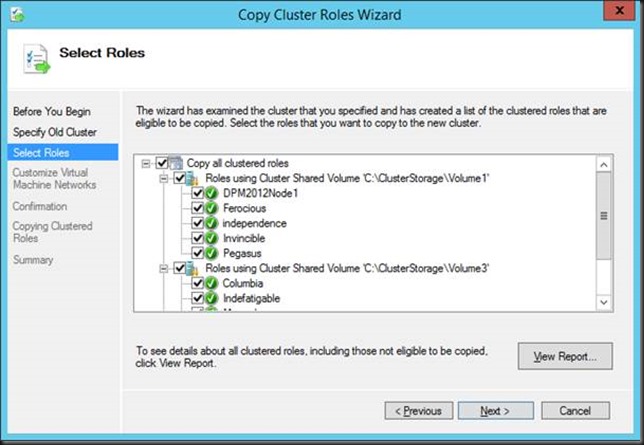

As we did it right we do see all the virtual machines. As we are not migrating to new storage we have to move all VMs on a LUN in one go. That’s because you cannot expose a LUN to multiple clusters.
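The "all VMs on a LUN move together" rule, combined with the interdependent CSVs mentioned earlier, can be sketched as a small planning helper. This is a minimal Python sketch and not part of the wizard: the VM and CSV names are invented, and the inventory dictionary is assumed to come from your own documentation. It groups CSVs into batches so that CSVs sharing a VM (say a SQL Server with OS and data on separate CSVs) always end up in the same batch.

```python
from collections import defaultdict


def plan_batches(vm_to_csvs):
    """Group CSVs (and their VMs) into batches that must move together.

    vm_to_csvs maps a VM name to the set of CSVs holding its files.
    Two CSVs land in the same batch when some VM has files on both.
    """
    parent = {}

    def find(csv):
        parent.setdefault(csv, csv)
        while parent[csv] != csv:
            parent[csv] = parent[parent[csv]]  # path halving
            csv = parent[csv]
        return csv

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Link all CSVs used by the same VM.
    for csvs in vm_to_csvs.values():
        ordered = sorted(csvs)
        for other in ordered[1:]:
            union(ordered[0], other)

    # Collect CSVs and VMs per linked group.
    batches = defaultdict(lambda: {"csvs": set(), "vms": set()})
    for vm, csvs in vm_to_csvs.items():
        for csv in csvs:
            root = find(csv)
            batches[root]["csvs"].add(csv)
            batches[root]["vms"].add(vm)
    return sorted((sorted(b["csvs"]), sorted(b["vms"])) for b in batches.values())


# Hypothetical inventory: names are illustrative only.
inventory = {
    "WEB01": {"CSV1"},
    "WEB02": {"CSV1"},
    "SQL01": {"CSV2", "CSV3"},  # OS on CSV2, data on CSV3
    "FILE01": {"CSV3"},
}
for csvs, vms in plan_batches(inventory):
    print(csvs, "->", vms)
```

Each printed line is one unit of work for the wizard: select those CSVs together, and expect those VMs to be down together during the storage switch.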

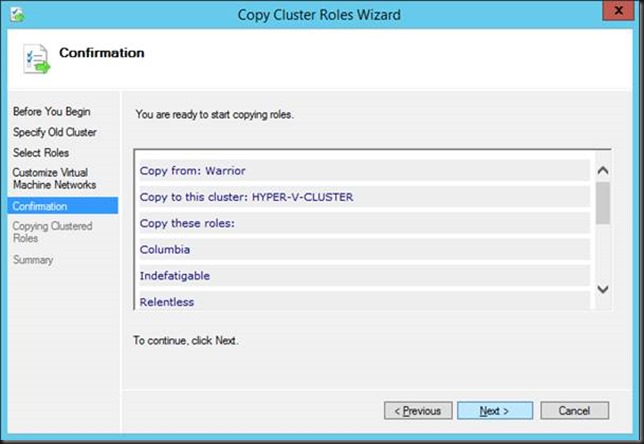

So we select all the clustered roles and click Next. As we use all capitals in the name of the new virtual switch for the guests, we are asked to map it manually. When the names are identical AND in the same case, the mapping happens automatically. You can see this for the virtual switches for guest clustering CSV & iSCSI network connectivity.
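The matching behavior described here can be modeled in a few lines. The Python sketch below only mirrors what I observed in the wizard, it is not the wizard's code, and the switch names are made up for the example. A source switch maps automatically only on an exact, case sensitive match; everything else is left for manual mapping, with a hint when only the case differs.

```python
def map_switches(source_switches, target_switches):
    """Split source switch names into auto-mapped and manual ones.

    A name maps automatically only on an exact, case sensitive match.
    """
    targets = set(target_switches)
    auto = {name: name for name in source_switches if name in targets}
    manual = [name for name in source_switches if name not in targets]
    return auto, manual


def suggest(name, target_switches):
    """Suggest targets that differ from name only by letter case."""
    return [t for t in target_switches
            if t != name and t.casefold() == name.casefold()]


# Hypothetical switch names, for illustration only.
source = ["Guests", "GuestCSV", "GuestISCSI"]
target = ["GUESTS", "GuestCSV", "GuestISCSI"]

auto, manual = map_switches(source, target)
print("automatic:", auto)
for name in manual:
    print("manual:", name, "candidates:", suggest(name, target))
```

Running a check like this against your own switch names before starting the wizard tells you which mappings you will be asked to make by hand.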

We view the report before we click Next and see exactly what will be copied.

Close the report and click Next.

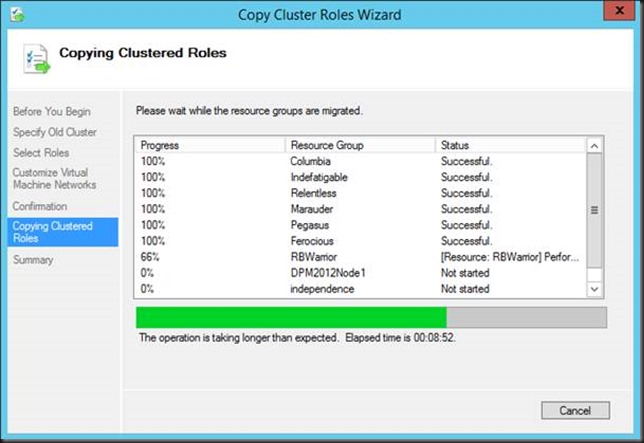

Click Next again to kick off the migration.

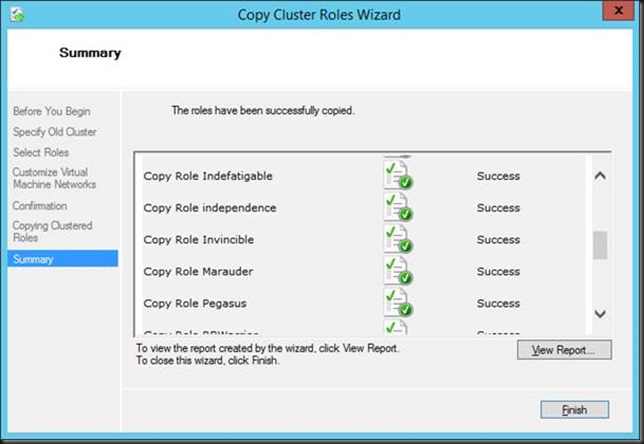

When done, you can view a report of the results. Click Finish to close the wizard.

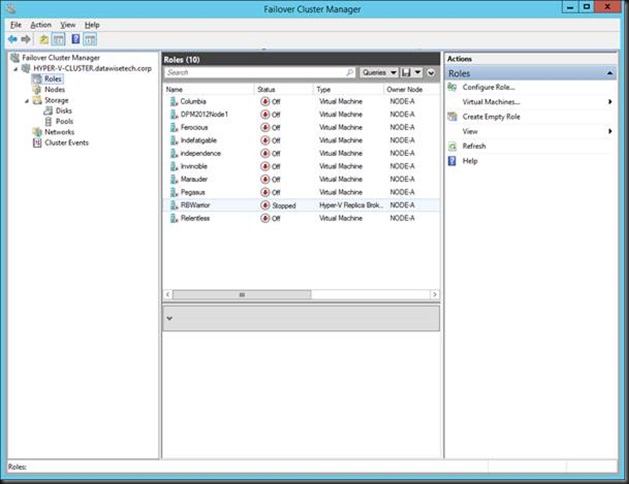

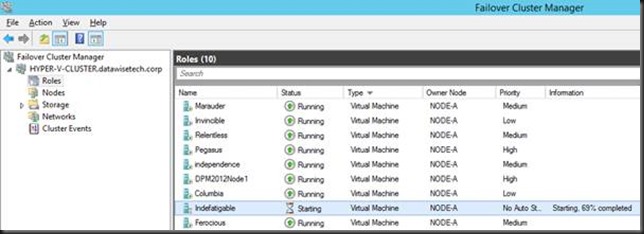

You now have copied your virtual machine cluster roles and the Replication Broker role.

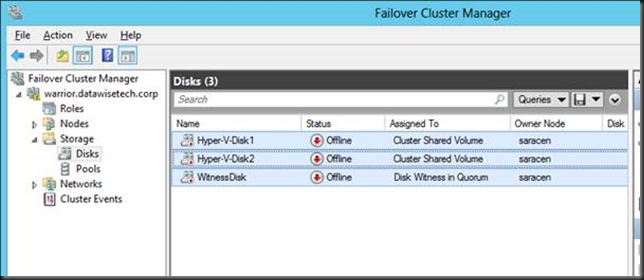

Even the storage is already there in the cluster, but off line, as it’s not yet available to the new cluster. Remember that your workload is still running on the old cluster, so everything you have done until now is a zero down time exercise.

2. Preparing to make the switch on the source cluster node(s)

On the source cluster we shut down all the virtual machines and the Replication Broker role.

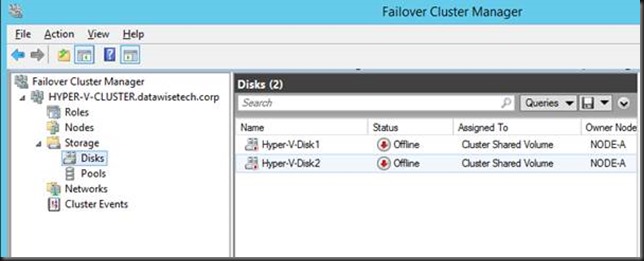

We then take the CSVs off line.

If you did not (or could not, due to lack of disk space) create a new witness disk, you can reuse this LUN as well. That’s not ideal in a production migration, but dynamic quorum will help you stay protected. Not so with Windows 2008 R2. But you fight as you are. Things are not always perfect.

3. Swapping The Storage From the Source To The Target

We’ll now disconnect the iSCSI initiator from the target on the old cluster node and remove the target.

On the iSCSI target we’ll also remove the old nodes from the list of iSCSI initiators to keep the environment clean. You could wait with this until you see the VMs up and running in the new environment, which makes it easier to bring the old environment back up if the migration should fail.

Wait until the process has completed and click Apply.

4. Bringing the new cluster into production

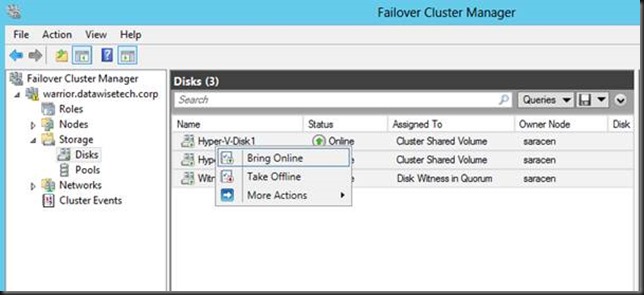

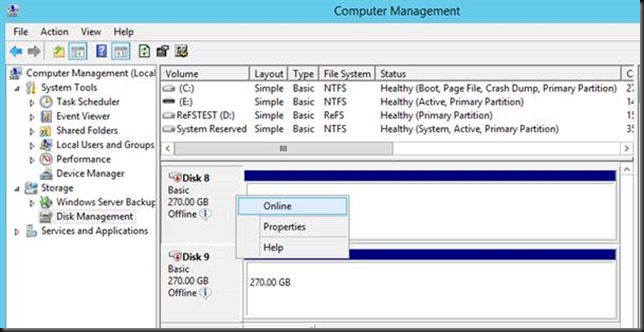

Bring the CSV LUNs on line on the new cluster.

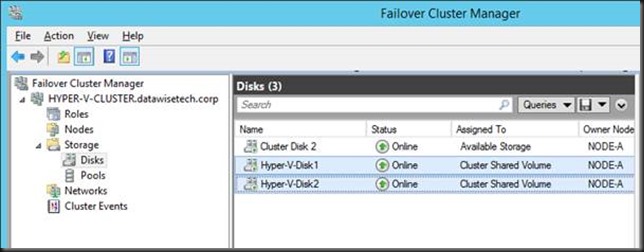

You’ll see them become available / on line in the cluster storage.

You are now ready to start up your virtual machines on the new cluster. With careful planning the down time for the services running in the virtual machines can be kept as low as 10 to 15 minutes depending on how fast you can deal with the storage switch over. Pretty neat.
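The order of steps 2 to 4 matters because a LUN can only be used by one cluster at a time. The toy model below makes that ordering explicit. It is a Python sketch for reasoning about the runbook, not a tool that touches a real cluster or iSCSI target: the `Lun` class and `cut_over` function are hypothetical, "Warrior" is the source cluster from this post, and "NEW-R2" is an invented name for the target.

```python
class Lun:
    """Toy model of a LUN that only one cluster may use at a time."""

    def __init__(self, name, presented_to):
        self.name = name
        self.presented_to = presented_to  # cluster the target exposes it to
        self.online = True                # in use (reserved) by that cluster

    def take_offline(self):
        self.online = False

    def present_to(self, cluster):
        if self.online:
            raise RuntimeError(f"{self.name} is still on line on {self.presented_to}")
        self.presented_to = cluster

    def bring_online(self, cluster):
        if self.presented_to != cluster:
            raise RuntimeError(f"{self.name} is not presented to {cluster}")
        self.online = True


def cut_over(luns, old_cluster, new_cluster, log=print):
    """Walk through steps 2 to 4 in order for the given LUNs."""
    log(f"Shut down the VMs and the Replication Broker on {old_cluster}")
    for lun in luns:
        lun.take_offline()
        log(f"Took {lun.name} off line on {old_cluster}")
    for lun in luns:
        lun.present_to(new_cluster)
        log(f"Presented {lun.name} to {new_cluster}")
    for lun in luns:
        lun.bring_online(new_cluster)
        log(f"Brought {lun.name} on line on {new_cluster}")
    log(f"Start the VMs on {new_cluster}")


csvs = [Lun("CSV1", "Warrior"), Lun("CSV2", "Warrior")]
cut_over(csvs, "Warrior", "NEW-R2")
```

Trying to bring a LUN on line on the new cluster before it has been released by the old one raises an error in this model, which mirrors what the real cluster does with LUNs that still carry reservations.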

Conclusion

You can now keep adding new nodes as you evict them from the old cluster. As said, the exact scenario will vary based on the workload, the number of nodes and the number of CSVs in your environment. But this should give you a good head start in working out your migration plan. Keep in mind this is all done using the R2 Preview bits. Things are looking pretty good.

In a future blog I’ll discuss some best practices like making sure you have no snapshots on the machines you migrate as this still causes issues like before. At least in this R2 preview.

Great article, could you perhaps also outline the steps to migrate from 2008 R2, or list any differences between the two.

Thanks for the great article. It helped a lot with our migration.

However, keep in mind that it does not work if your VMs were created using SCVMM 2008 R2 or SCVMM 2012 R2. Everything shows fine and the roles are migrated to the destination cluster, but when you try to start them you get error 1069 and the VM configuration cannot be brought online. Looking in Hyper-V Manager, there are no VMs listed. Hope this helps someone in the same situation we are in at the moment. Thanks.

Worked like a charm! Thanks!

You’re most welcome. Thank you for reading.

This guide worked a treat, many thanks 🙂

You’re welcome. Spread the word to others who need some guidance on this and thanks for reading.

Excellent read! I’m currently prepping our 4 node cluster to go ahead with this. One question: you say to install the 2012 R2 OS on the evicted node. I assume the nodes can be in-place OS upgrades from 2012 to 2012 R2 and it doesn’t have to be a fresh install?

Absolutely, you can do an in-place upgrade. With enterprise grade servers & hardware you might have to deal with some driver/firmware issues here and there, or it might even fail on certain hardware. If so, do a clean install. Do note that prior to an upgrade or clean install you should get the BIOS, firmware etc. upgraded to the latest possible version to avoid any compatibility problems.

Excellent! Thanks for that. I tend to keep our DC up to date as much as possible, but I’ll certainly double check before any upgrades 🙂

Ok. Now I just need to pick a weekend to waste!

Hi there, again!

I’m confused as to how the new cluster node would see the existing LUNs, as the LUNs are allocated to current hosts via a host group within the MDSM software.

My understanding would be that a new host group, with the new node, would have to be created via MDSM before attempting any sort of role copy or migration. Would it not be easier to create a new LUN, assign it to the new host group, and migrate storage via SCVMM 2012 R2?

MD3200 SAN, that’s what I’m using.

Yes, and after you’ve done that, you run the wizard and, as described in the blog, you un-present the LUN(s) from the old cluster and present them to the new one via MDSM. So you either do 1 LUN/CSV at a time or all of them, depending on how fast you want to go. Try it in a lab with the MSFT iSCSI target first and do a test with a demo CSV from your old cluster to the new cluster.

You can do storage live migration via SCVMM 2012 too (if the source cluster is at least W2K8R2SP1), but it takes longer. The benefit is that there is no down time, but do note you need sufficient free storage space to be able to do that. The best/better/optimal way depends on your options & needs.

Good luck!

Sweet, thought as much. Thanks! 🙂

Hi,

“Also note that the virtual switch name is case sensitive.” <= are you sure of that? I don't see why is that if at the end, this is stored in readable xml files (for the time being :-).

Thanks,

Eole

If I recall correctly, it’s needed for the automatic mapping of the source vSwitch to the new vSwitch to happen; otherwise you might need to select it manually if there is more than one. It’s a small thing of little importance for the success of the migration, as long as you map the names correctly.

hey – thanks for the detailed steps. I have read a couple articles now, but I am still unsure of how it would work with my environment..

Firstly I have a single CSV which contains 70+ LUNs and VMs, spread across 8 hyper-v 2012 hosts. I have configured a new 2012r2 hyper-v host, configured the virtual adapters and ready to run the wizard. If I have to select all 70 roles, and ‘move’ them to 1 single new host, how will this cope with the load?

Even if I evict one of the 8 hosts, and join it to my new 2012r2 cluster, I still couldn’t see how 70 roles will survive on 2 hosts??

Is my only option export/import 1 by 1?

With 70+ LUNs I’m assuming you mean VHD/VHDX files for VMs, right?

Even if you import them one by one, at a given moment you’ll need to have enough hosts to have all VMs up and running. I don’t know how performant your new cluster nodes are, but if they are the same as the old cluster nodes you’re in a pickle if you need 7 to keep ’em all running.

Having one giant CSV is a limiting factor and not the best way to set it all up (all eggs in one basket). You can still investigate other options: storage live migration to new/more CSVs, if you prefer (create 2 or 3 new ones at least if you can, I’d go for 4 minimum), that is if you have the space to do so. Otherwise, if you can have 2 or 3 nodes in the new cluster from the start, migrate them all on the one CSV, start only a couple after moving them, upgrade the integration services, reboot etc. … then live migrate or quick migrate them to another node of the new cluster. You’re going to have to work around it and eat more down time than would be strictly needed under better conditions.

Excellent! I have read a bunch of TechNet articles on this and it always says to make sure the LUNs are connected and available on the new cluster. I couldn’t find clarification as to whether it needs to be seen as a CSV on the new cluster. They just point to instructions for setting up a new cluster which makes you believe it needs to see a CSV. Your post actually says that it can’t be until you release it from the old cluster. That explains the errors I was receiving trying to bring the old LUNs online on the new cluster before I tried migrating. Thanks for a thorough explanation!

You’re welcome, happy it helped!

Hmmm, thank you. OK, the bad news is I do actually mean 1 CSV with 70ish LUNs/volumes, each volume containing the VHDX, and the config on a separate LUN (still within the CSV).

This was advised and set up by our equipment reseller and provider. Something for me to talk to them about, obviously.

So what can I do? If I have storage do you recommend creating new smaller CSVs and then start migrating?

If you have 70 VMs on one CSV (that’s the LUN, not the 70 VHD/VHDX files on there), then yes: if you have room to create more CSVs, why not do so? If you can storage live migrate the 70 VMs to 4 new CSVs (or however many you need, depending on the number/capability of the hosts) you can save yourself a lot of concerns. 1 CSV per host is not a rule, but it’s handy. It all depends.

We currently have a 3 node Windows 2012 cluster and were wondering if it’s possible to add a Windows 2012 R2 node to our existing cluster and slowly upgrade the (Windows 2012) nodes to Windows 2012 R2. Any input/guidance would be greatly appreciated. Tnx.

No, you need to create a new cluster and either do as described in the blog or use storage live migration, the 2 most efficient ways with the least down time. Rolling cluster node upgrades are for vNext (from W2K12R2 to vNext). Clean installs of the evicted cluster nodes (one by one for smaller clusters) and joining them to the new cluster is the advised path.

Great article. I have a question.

I have a 6 node 2008 R2 SP1 cluster with 11 CSV LUNs and 95 VMs, fiber connected, managed by SCVMM 2012. I have two spare LUNs I could add to the 2012 R2 cluster, but there are some critical VMs that I just do not have space to create new LUNs for. So essentially I have to do this move with no new storage. Lastly, this will have to be done over time.

I am ready to evict one node and install 2012 R2, Failover Clustering, Hyper-V, the virtual network setup (MAN, VMCOM, CSV, LIVE), a vSwitch and its own quorum disk.

Due to some comments above I am uncertain of how to proceed or what to search for assistance on this issue. Any guidance would be appreciated. Thank you for your time.

Can you point to what you are uncertain about? With regard to the migration model chosen, the blog is as complete as I can make it. What is causing your uncertainty? Thx.

I cannot do the move in one go; I will need to do it over several weekends. So the way I read your steps above, that is out for me. Another post above mentioned SCVMM issues, so I am assuming I will need to complete this move within SCVMM, with storage migration? I am just uncertain, never having done this before. Of everything I found searching Google, your article is the most complete, but the way I read it, it is not my solution.

This blog is about the use of the Copy Cluster Roles Wizard in failover clustering. You do not have to do this in one effort; you can do one CSV at a time, taking your time. As more CSVs run on the new cluster you can evict another node from the old one, rebuild it and add it to the new one to increase its capacity. If you want to leverage SCVMM you must confirm that the version you’re running handles the cluster versions you have, and that might be an issue (it’s SCVMM 2012, not R2).

Hi,

I have a SQL 2012 Standard CSV cluster in Hyper-V, with a node majority cluster.

I want to move away from Hyper-V to vSphere. I’d appreciate it if you could help me with the best way to achieve this goal.

Regards,

Well done. Let me point out that if you launch the Copy Cluster Roles Wizard from a client OS using the RSAT, it will report a WMI error and won’t work.

I also had a problem with the quorum disk: it was lost during the migration and is no longer seen as an available disk by the cluster.