Do you need hard processor affinity in Hyper-V? Good question, but let’s set the context first. I tend to virtualize workloads that shock some people. Not because they are super huge solutions requiring petabytes of storage, 48TB of RAM, 256 cores and a million IOPS. Far from it. The shock often comes from people who still consider virtualization as something for lightweight infra services like DHCP, DNS, WSUS, print servers, or web services and websites. Some of these people even tried to virtualize other services like SharePoint, SQL, Exchange etc., but they did not take into account that virtualization is not magic and that you need to provision adequate resources and design/manage your environment to do so successfully. So part of them got bitten. They conclude that performance requires physical deployments … and they want to see a material CPU, so to speak.

When they see virtual machines with 12 to 16 vCPUs or > 100GB of memory, they seem to think that even those workloads are bad candidates to virtualize, let alone even bigger ones. That’s not true by definition. As long as you make sure that you know why (cost/benefits/risks) and how to virtualize, it can work. You must provision and allocate the required resources. You must also have the right expertise in both virtualization (servers, storage, networking) and the applications involved (SQL, Exchange, 3rd party products, …) along with good operational processes.

You can really virtualize a lot when it’s done right. My “virtual first” approach is a rule of thumb, and exceptions do exist even when I’m calling the shots. However, just like people quote costs, latency, security and lock-in to question the suitability of Public Cloud versus on premises in “subjective” ways, they do so when it comes to virtualization as well. The discussion is often more about organizational issues, control, fear, politics, interests and money. Every hosting provider out there loves virtualization as it’s great for their TCO/ROI. But when it comes to Public Cloud they’re often less convinced. That “datacenter zero” concept isn’t that attractive to them, so we see Hybrid and Public Cloud offerings that might not be that good of an idea in some cases but that fit their interests more. Have you noticed that there are no highly automated, optimized data centers anymore, only * clouds? There are valid use cases for hybrid and private clouds but, just like with virtualization, maybe we should let go of the personal/business interests, the fear, and the false assumptions when advising customers. It all depends.

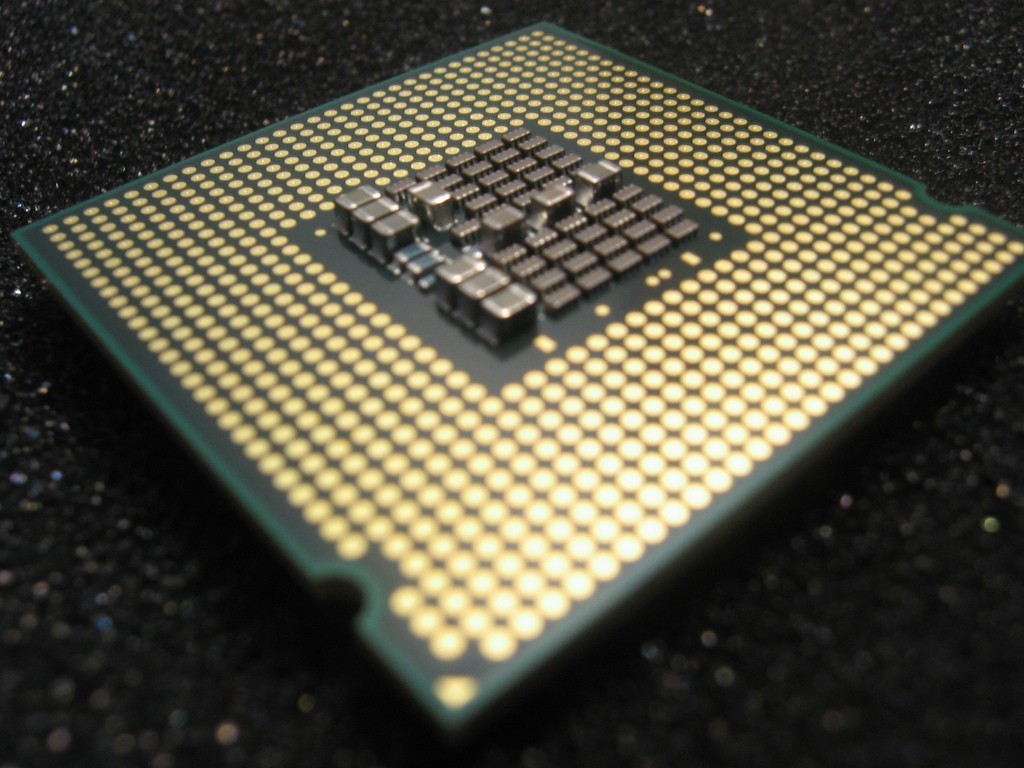

In this regard I have had several discussions with people about the lack of hard processor affinity in Hyper-V. In their opinion, this makes it unfit for high performance workloads. Sure, such cases do exist. They are, however, not the majority. As I’ve been having this discussion rather often in the past months, I wrote an article on the subject that I’ve published in collaboration with StarWind Software: “Need Hard Processor affinity for Hyper-V?” The idea is to reach more people and share insights with the community. Full disclosure: I happen to know Anton Kolomyeytsev (CEO, CTO and Chief Architect at StarWind) professionally as a fellow MVP and I have great respect for his technical expertise, insights and experience. This made me agree to publish some content via their blog. Sharing opinions and ideas with as many people as possible only makes for better technologists everywhere.