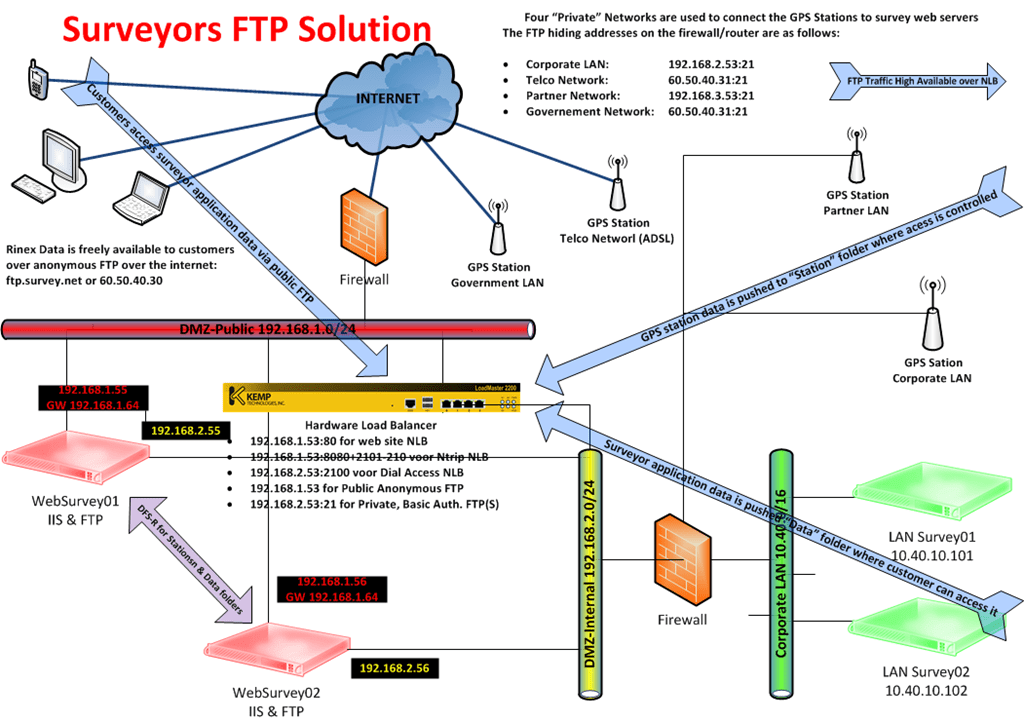

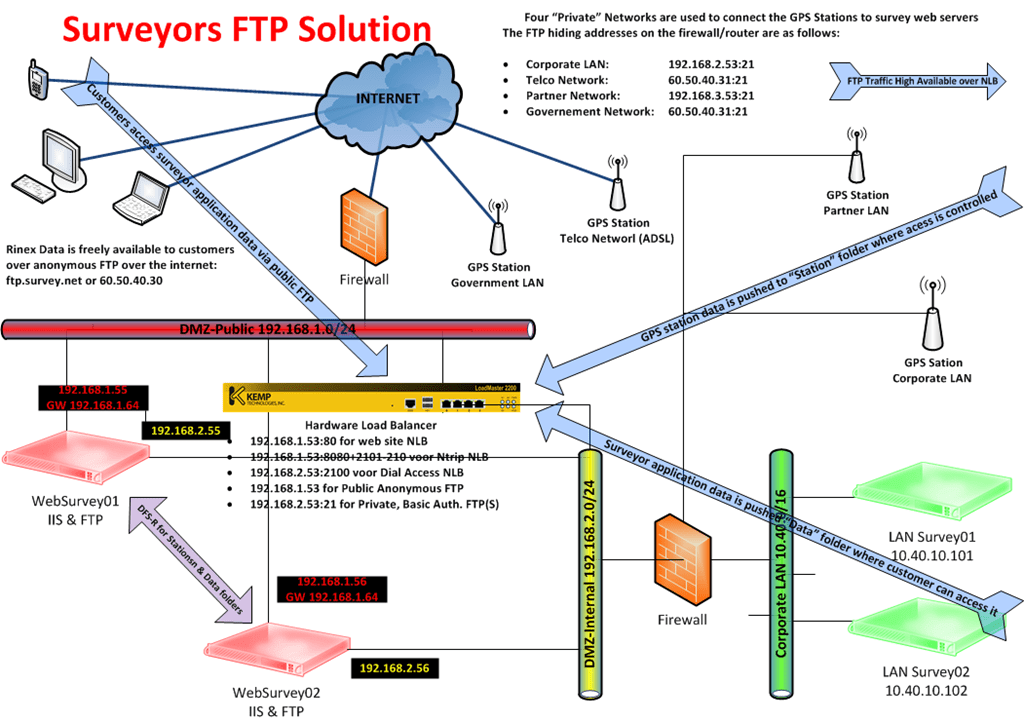

Remember the blog entry “A Hardware Load Balancing Exercise With A Kemp Loadmaster 2200”, about using a KEMP Loadmaster to provide redundancy for a surveyor’s GPS network? Well, last month we were commissioned to come up with a redundant FTP solution for their needs, and this blog post is about what we came up with. The aim was to make do with what was already available.

FTP 7.5 in Windows 2008 R2

We use the FTP Server available in Windows 2008 R2 which provides us with all functionality we need: User Isolation and FTP over SSL.

The data from all the GPS stations is sent to the FTP server for safekeeping and is used to overcome certain issues customers might have with missing data from surveying solutions. This data is not made available to customers by default; it’s only for special cases and purposes. So we need to collect the data in its own folder, named after its account, so we can configure User Isolation. This also prevents GPS Stations from writing in locations where they shouldn’t.

As every GPS Station logs in with the “Station” account, it ends up in the “Station” folder as its root FTP folder and can’t read or write outside of that folder. The survey solution service desk can FTP into that folder and access any data they want.
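To make the folder convention behind User Isolation concrete, here is a minimal Python sketch of how the "User name directory" isolation mode of IIS FTP maps an account to its home folder. The FTP root path `D:\FTPRoot` and the domain name in the usage note are made up for illustration; the post does not say where the root lives or whether the accounts are local or domain accounts.

```python
from pathlib import PureWindowsPath
from typing import Optional

# Hypothetical FTP root; the real path is not given in the post.
FTP_ROOT = PureWindowsPath(r"D:\FTPRoot")


def isolated_home(user: str, domain: Optional[str] = None,
                  root: PureWindowsPath = FTP_ROOT) -> PureWindowsPath:
    # Local accounts are isolated under <root>\LocalUser\<user>,
    # domain accounts under <root>\<domain>\<user>.
    container = domain if domain else "LocalUser"
    return root / container / user


def anonymous_home(root: PureWindowsPath = FTP_ROOT) -> PureWindowsPath:
    # Anonymous users are isolated under <root>\LocalUser\Public.
    return root / "LocalUser" / "Public"
```

With these assumptions, `isolated_home("Station")` gives `D:\FTPRoot\LocalUser\Station`, and `isolated_home("Station", "SURVEY")` gives `D:\FTPRoot\SURVEY\Station` for a domain account in a (hypothetical) SURVEY domain.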

The data that’s being provided by the software solution (LanSurvey01 and LanSurvey02) is sent to its own folder, “Data”, which is also set up with User Isolation to prevent the application from reading or writing anywhere else on the file system.

This data should be publicly available to the customers, and for this we created a separate FTP site called “Public” that is configured for anonymous access to the same Data folder, but with read permissions only. This way the customers can get all the data they need, but they only have read access to the required data and nothing more.

For more information on setting up FTP 7.5 and using FTP over SSL, you might take a look here: http://learn.iis.net/page.aspx/304/using-ftp-over-ssl/ and read my blog post on FTP over SSL: Pollution of the Gene Pool a Real Life “FTP over SSL” Story
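For those who want to test an FTP over SSL site from a script, here is a minimal sketch using Python's standard `ftplib`. It assumes explicit FTPS (AUTH TLS on port 21), which is the only mode `ftplib.FTP_TLS` supports; the post does not say which mode the site uses. The host name, account, and file name in the usage note are hypothetical.

```python
import ftplib
import os
import ssl


def send_file(conn, local_path):
    # Store the file under its own name in the account's isolated root folder.
    with open(local_path, "rb") as fh:
        return conn.storbinary("STOR " + os.path.basename(local_path), fh)


def upload_over_ftps(host, user, password, local_path, timeout=30):
    # create_default_context() verifies the server certificate; a self-signed
    # certificate would have to be trusted explicitly for this to succeed.
    context = ssl.create_default_context()
    with ftplib.FTP_TLS(host, context=context, timeout=timeout) as ftps:
        ftps.login(user, password)  # secures the control channel before sending credentials
        ftps.prot_p()               # encrypt the data channel as well
        return send_file(ftps, local_path)
```

A call would look like `upload_over_ftps("ftp.example.org", "Station", "secret", "obs_0001.dat")`, where every argument is a placeholder.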

High Availability

In the section above we’ve taken care of the FTP needs. Now we still need redundancy. We could use Windows NLB, but this network already uses a KEMP Loadmaster, because the surveyor’s software has some limitations in its configuration capabilities that don’t allow Windows Network Load Balancing to be used. So we use the Loadmaster for FTP as well.

We want both the GPS stations and the surveyor’s application servers to be able to send FTP data when one of the receiving FTP servers is down for some reason (updates, upgrades, maintenance, or failure). What we did is set up a VIP for use with FTP on the KEMP Loadmaster. This VIP is what is used by the GPS Stations and the application to write the FTP data, and by the customers to read it.
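If you want to check from a script whether the individual FTP nodes behind the VIP are answering, a simple probe is enough. The sketch below is not how the Loadmaster itself checks its real servers; it is just a standalone test that connects to port 21 and looks for the 220 greeting an FTP server sends when it is ready. The node names in the usage note are hypothetical.

```python
import socket


def greeting_ok(banner: bytes) -> bool:
    # An FTP server that is ready for a new user greets with reply code 220.
    return banner.startswith(b"220")


def ftp_node_is_up(host, port=21, timeout=5.0):
    # Connection refused, unreachable host, and timeouts all raise OSError.
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            banner = sock.recv(1024)
    except OSError:
        return False
    return greeting_ok(banner)


def nodes_up(hosts, port=21):
    # Map each node name to True/False so a down node is easy to spot.
    return {host: ftp_node_is_up(host, port) for host in hosts}
```

For example, `nodes_up(["ftp01.example.org", "ftp02.example.org"])` returns a dictionary with one entry per node.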

DFS-R to complete the solution

But up until now, we’ve been ignoring an issue. When using NLB to push data to hosts, we need to ensure that all the data will be available on all the nodes all of the time. You could opt to have only the users access the FTP service via an NLB VIP address and push the data to both nodes without using NLB. The latter might be done at the source, but then you have twice the amount of data to push out. It also means extra work to configure and maintain the solution. We could copy the data to one FTP node and copy it from there to the other. That works, but leaves you very vulnerable to a service outage when the node that gets the original copy is down: no new data will be available. Another issue is that you need a rock-solid way to copy the data and have it done in a timely manner, even after downtime of one or more of the nodes.

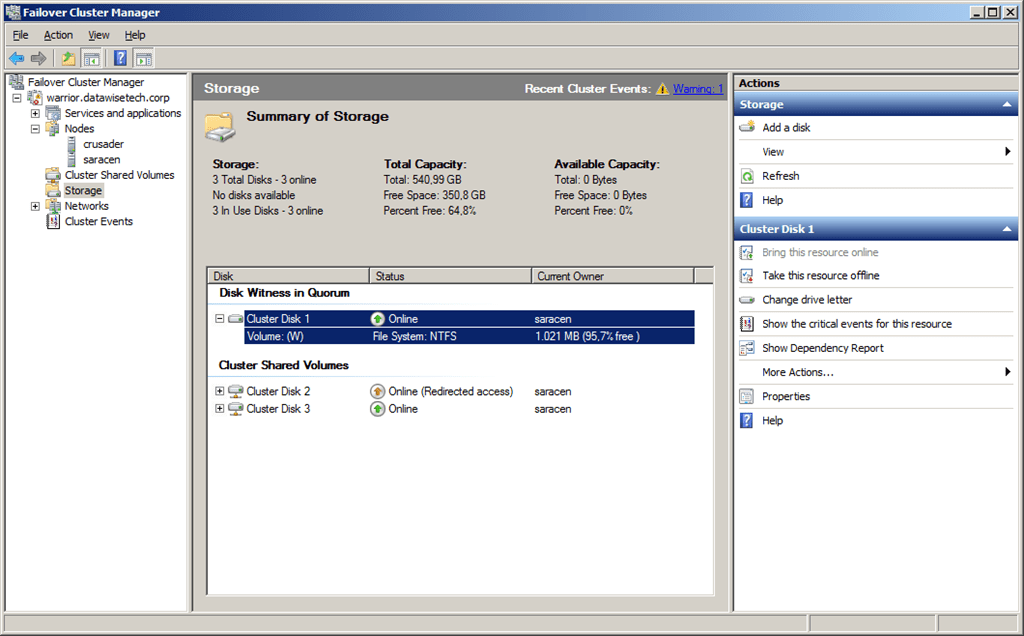

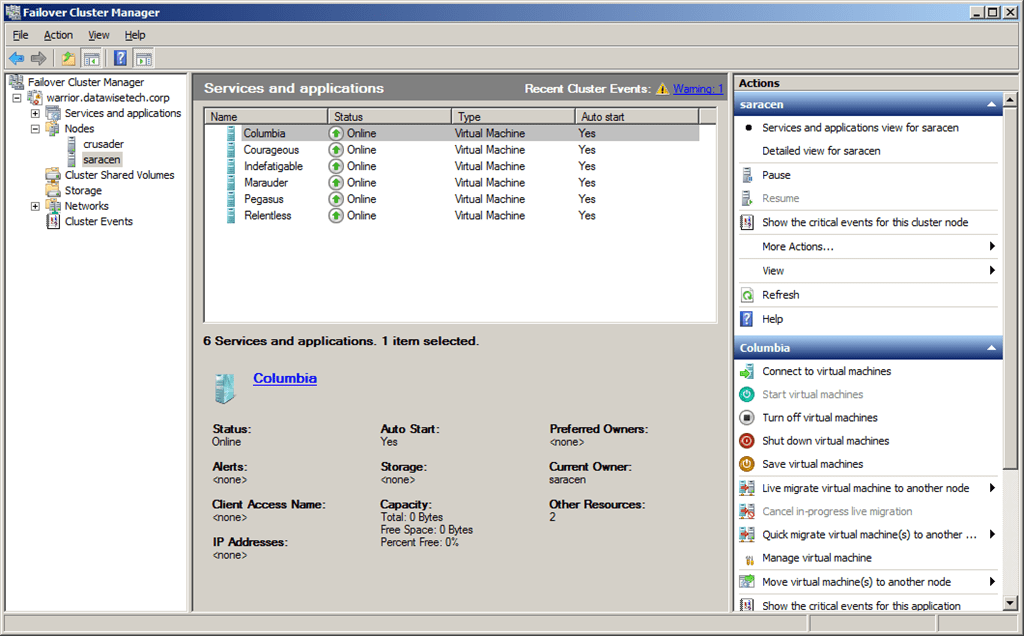

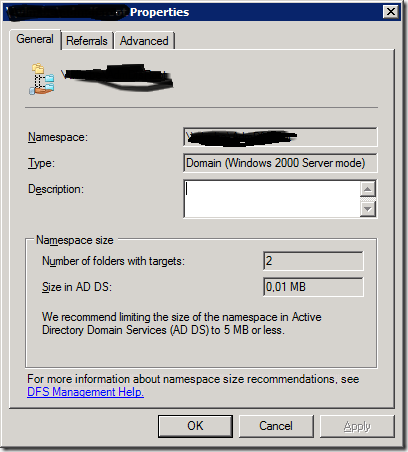

As you read above, we provide an NLB VIP as a target for the surveyor’s application and the GPS Stations to send their data to. This means the data will be sent to the FTP NLB array even if one of the nodes is down for some reason. To get the data that arrives from 2 application servers and from 40 GPS Stations synchronized and up to date on both NLB nodes, we use Distributed File System Replication (DFS-R), which is built into Windows 2008 R2. We have no need for a DFS Namespace here, so we only use the replication feature. This is easy and fast to set up (add the DFS Replication role service from the File Services role) and it doesn’t require any service downtime (no reboot required). The fact that both FTP nodes are members of a Windows 2008 R2 domain does help with making this easy. To make sure we have replication in all directions, we opt to set it up as a full mesh, and the replication schedule is 24/7, no days off :-) Since we chose to replicate the FTP root folder, we have both the Data and the Station folders covered, as well as the folder structure needed to have FTP User Isolation function.

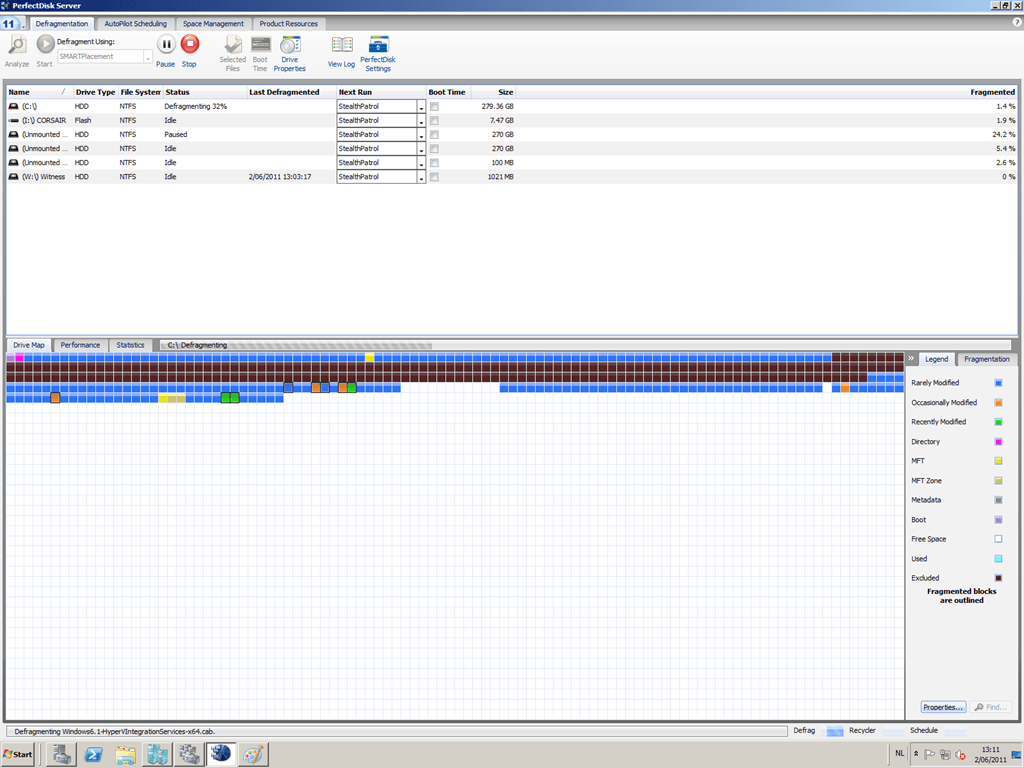

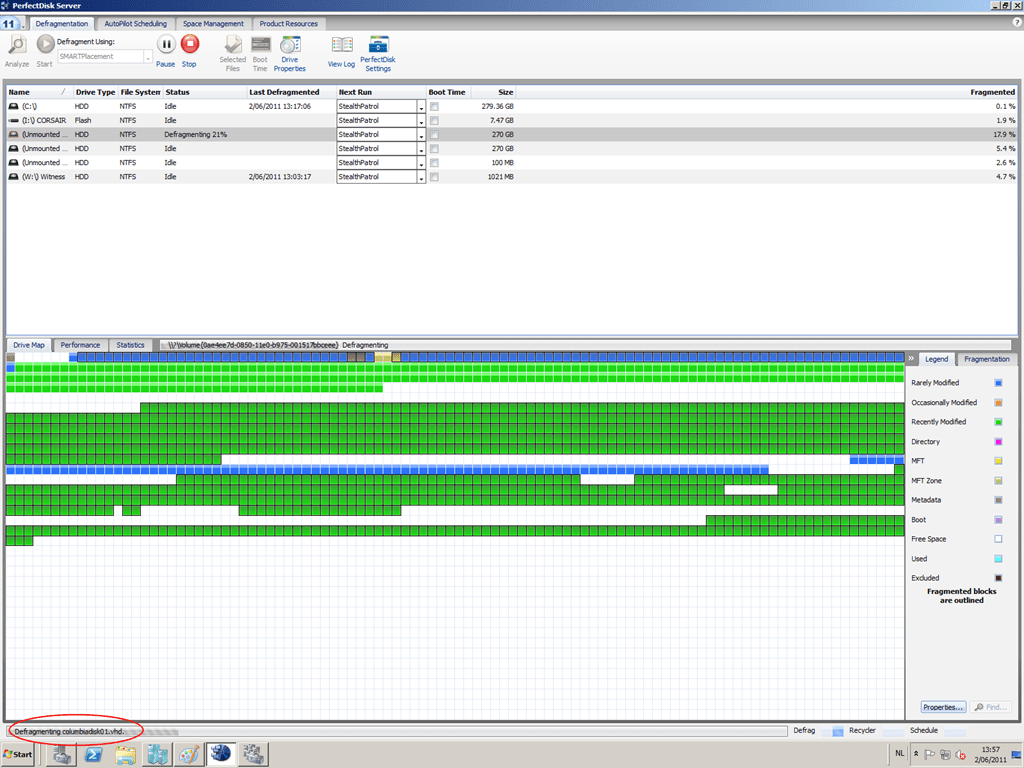
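To convince yourself that replication has caught up, for example after one node has been down for maintenance, you can compare the replicated folder on both nodes. The Python sketch below is one way to do that and is not part of the DFS-R tooling: it hashes every file under two roots and reports what is missing or different. It skips the `DfsrPrivate` folder that DFS-R keeps inside each replicated folder. The UNC paths in the usage note are hypothetical.

```python
import hashlib
import os


def tree_digest(root):
    # Map each file path (relative to root) to the SHA-256 of its content.
    digests = {}
    for dirpath, dirs, files in os.walk(root):
        # Do not descend into the DFS-R working folder.
        dirs[:] = [d for d in dirs if d.lower() != "dfsrprivate"]
        for name in files:
            full = os.path.join(dirpath, name)
            sha = hashlib.sha256()
            with open(full, "rb") as fh:
                for chunk in iter(lambda: fh.read(1 << 20), b""):
                    sha.update(chunk)
            digests[os.path.relpath(full, root)] = sha.hexdigest()
    return digests


def replication_gaps(root_a, root_b):
    # Return (files only under a, files only under b, files whose content differs).
    a, b = tree_digest(root_a), tree_digest(root_b)
    only_a = sorted(set(a) - set(b))
    only_b = sorted(set(b) - set(a))
    differing = sorted(p for p in set(a) & set(b) if a[p] != b[p])
    return only_a, only_b, differing
```

Something like `replication_gaps(r"\\ftp01\FTPRoot", r"\\ftp02\FTPRoot")` should return three empty lists once both nodes are in sync. Files that are still being uploaded at that moment will naturally show up as differences, so a non-empty result on a busy system is not necessarily a problem.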

This solution was built fast and easily using Windows 2008 R2 out-of-the-box functionality: FTP(S) with User Isolation and DFS-R. The servers are running as Hyper-V guests in a Hyper-V cluster, providing high availability through Live Migration.