I’m testing & playing with different Windows Server 2012 & Hyper-V networking scenarios with 10Gbps, Multichannel, RDMA, converged networking, etc. Partially this is to find out what works best for us in regard to speed, reliability, complexity, supportability and cost.

Basically there are four basic resources in IT around which the eternal struggle for the perfect balance takes place. These are:

- CPU
- Memory
- Networking
- Storage

A well-designed system needs the correct balance of capabilities, capacity and speed across these. For many years now, but especially over the last 2 years, it’s very safe to say that, while the sky is the limit, it has become ever easier and cheaper to get what we need when it comes to CPU and memory. These have become very powerful, fast and affordable relative to the entire cost of a solution.

Networking in the 10Gbps era is also showing its potential in quantity (bandwidth), speed (latency) and cost (well, it’s getting there) without trashing CPU or memory, thanks to a bunch of modern offload technologies. And basically, in this case, it’s these qualities we want to put to the test.

The most troublesome resource has been storage, and it has been for quite a while. While SSDs do wonders for many applications, the balance between speed, capacity & cost isn’t as sweet as for our other resources.

In some environments where I’m active they need both capacity and IOPS, and as such they are in luck, as besides caching, more spindles still equate to more IOPS. To test the boundaries of one resource, you need to make sure none of the others hit theirs. That’s not easy, as for performance testing you can’t always have a truckload of spindles on a modern high-speed SAN available.

RAMDisk to ease the IOPS bottleneck

To see how well the 10Gbps cards behave with and without Teaming, Multichannel and RDMA, and what these configurations are capable of, I wanted to take as much of the disk IOPS bottleneck out of the equation as possible. Apart from buying a Violin system capable of doing over 1 million IOPS, which isn’t going to happen for some lab work, you can perhaps get the best possible IOPS by combining some local SSDs and a RAMDisk. A RAMDisk is spare memory used as a virtual disk. It’s very fast and cost effective per IOPS, but capacity-wise it’s not the world’s best, let alone most cost-effective, solution.
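To put a number on why disk IOPS so quickly becomes the bottleneck when testing 10Gbps links, here is a back-of-the-envelope sketch in Python. It assumes the raw line rate with no TCP/SMB/Ethernet overhead, so the figures are upper bounds for illustration, not measurements from this lab.

```python
# Back-of-the-envelope: how many IOPS must storage deliver to keep a link busy?
# Assumption: raw line rate, ignoring TCP/SMB/Ethernet protocol overhead.


def iops_to_saturate(link_gbps: float, io_size_bytes: int) -> float:
    """Return the IOPS needed to move link_gbps worth of data at a given IO size."""
    bytes_per_second = link_gbps * 1e9 / 8  # 10 Gbps -> 1.25e9 bytes/s
    return bytes_per_second / io_size_bytes


if __name__ == "__main__":
    for label, size in [("4K", 4 * 1024), ("64K", 64 * 1024), ("1M", 1024 * 1024)]:
        for links in (1, 2):  # one 10Gbps NIC versus two (e.g. Multichannel)
            need = iops_to_saturate(10 * links, size)
            print(f"{links} x 10Gbps at {label} IOs: ~{need:,.0f} IOPS")
```

Under these assumptions a single 10Gbps link needs roughly 19,000 IOPS at 64K IOs and roughly 305,000 IOPS at 4K IOs, far beyond what a handful of SATA spindles can deliver.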

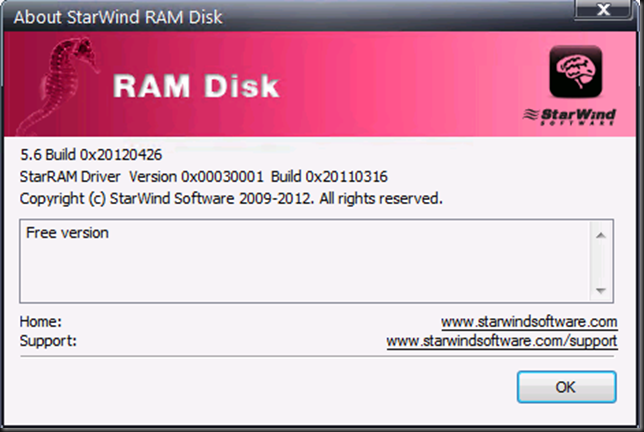

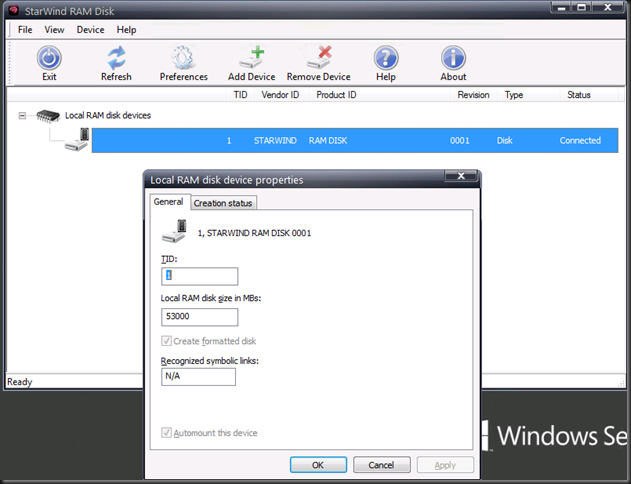

I’m using the free RAMDisk software provided by StarWind. I chose it because it allows for large RAMDisks; I’m currently using 54GB ones to speed test copying fixed-size VHDX files. It installs flawlessly on Windows Server 2012 and it hasn’t caused me any issues. Throw in some SSDs on the servers where you need persistence and you’re in business for some very nice lab work.

You also need to be aware that it doesn’t persist data when you reboot the system or lose power. This is not an issue if all we are doing is speed testing, as we don’t care about the data. Otherwise you’ll need to find a workaround, and realize that the “flush the data to persistent storage” options aren’t foolproof or super fast; the SSDs do help here.

You have to register, but the good news is that they don’t spam you to death at all, which I find cool. As said, the tool is free, works with Windows Server 2012 and allows for larger RAMDisks, where other free ones are often way too limited in size.

It has allowed me to do some really nice testing. Perhaps you want to check it out as well. WARNING: The picture below is from a lab setup… I’m not a magician, and it’s not the kind of IOPS I have all over the datacenters from 4 cheapo SATA disks that I touched with my special magic pixie dust.

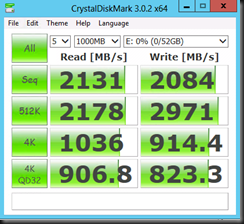

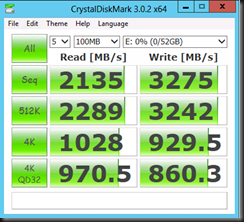

Some quick tests with a 52GB NTFS RAMDisk formatted with a 64K NTFS Allocation unit size.

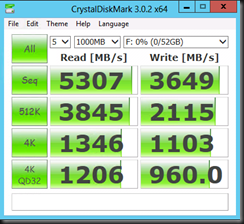

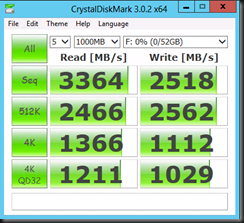
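For a quick, scriptable sanity check of a RAMDisk (or SSD) volume next to the GUI tools used above, a crude sequential-write timer like this Python sketch can help. The target directory, file size and block sizes are hypothetical parameters; point `target_dir` at your RAMDisk volume. Note that `block_kb` is the size of each write call, not the NTFS allocation unit size, which is fixed when the volume is formatted. Python and file-system overhead are included in the timing, so treat the numbers as rough indicators rather than a replacement for a dedicated benchmark tool.

```python
import os
import tempfile
import time


def _write_all(f, data: bytes) -> None:
    """Unbuffered writes may be partial, so loop until every byte is written."""
    view = memoryview(data)
    while view:
        written = f.write(view)
        view = view[written:]


def sequential_write_mbps(target_dir: str, total_mb: int = 256, block_kb: int = 64) -> float:
    """Write total_mb of random data to a temp file in target_dir using
    block_kb-sized writes, fsync it, delete it, and return MB/s (1 MB = 1024*1024 bytes)."""
    block = os.urandom(block_kb * 1024)       # one reusable block of data
    blocks = (total_mb * 1024) // block_kb    # number of write calls needed
    fd, path = tempfile.mkstemp(dir=target_dir, suffix=".bin")
    os.close(fd)
    try:
        start = time.perf_counter()
        # buffering=0: Python issues one OS write call per block instead of coalescing
        with open(path, "wb", buffering=0) as f:
            for _ in range(blocks):
                _write_all(f, block)
            os.fsync(f.fileno())              # force the data out of the OS write cache
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)                       # always clean up the test file
    return (blocks * len(block)) / (1024 * 1024) / max(elapsed, 1e-9)


if __name__ == "__main__":
    # Hypothetical target: replace with the RAMDisk's drive letter or mount point.
    target = tempfile.gettempdir()
    for kb in (4, 64):
        mbps = sequential_write_mbps(target, total_mb=64, block_kb=kb)
        print(f"{kb}K writes: {mbps:.0f} MB/s")
```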

I also tested with another free tool, SoftPerfect® RAM Disk FREE. It performs well, but I don’t get to see the RAMDisk in the Windows Disk Management GUI, at least not on Windows Server 2012. I have not tested with W2K8R2.

[Screenshots: NTFS allocation unit size 4K vs. NTFS allocation unit size 64K]

SolarWinds doesn’t make RAMDisks. Should be StarWind Software 🙂

Are you pulling this over the wire (what config? RDMA, native, x1 or x2?) with the RAMDisk exposed as an iSCSI target? Using MPIO or without? What’s the utilization (CPU, NICs) like with this?

Nice huge mental typo there 🙂 Fixed.

RAMDisk to RAMDisk, local, both RDMA & native 10Gbps. And Explorer gives up sooner than anything else AFAIK 🙂 You need to use multiple NICs and generate enough IO for the benefits of RDMA, on CPU for example, to kick in. This blog post is just about the option of using a RAMDisk to get around IO bottlenecks.

We’re interested to see whether, with SMB 3.0, we get a benefit from using Multichannel RDMA for storage live migration and shared-nothing live migration in terms of the overall impact on the system (CPU-wise, throughput-wise, etc.), and when, i.e. when do we benefit and when not. Depending on the type of 10Gbps cards you use, their price doesn’t force you to leave RDMA out. Even the affordable 10Gbps switches have DCB, so that’s often not a concern either. The real concern is whether it’s worth setting up & configuring versus native 10Gbps, teamed or not, with QoS via the Hyper-V switch. There are a lot of permutations and a lot of questions, and while we can use the gear for a while, we can’t test out all possible scenarios.

Which switches are you referring to in this context ?

“Even the affordable 10Gbps switches have DCB”

RDMA should work out of the box, shouldn’t it? Or are you referring to setting up QoS using DCB as being more cumbersome as opposed to built-in Microsoft QoS?

Mellanox, 8024F, 8132F versus Force10/Cisco… it all depends on what you need. Even better, if you don’t need DCB you can use even cheaper NETGEAR 10Gbps switches. It all depends on how many ports you need: smaller environments (with just east-west traffic) can get away with dual 12-24 port switches for redundancy, skip stacking & use switch-independent mode…

DCB needs to be supported by the switch and the operating system, and it needs to be configured…

iWARP works out of the box, but in most cases you’ll need some form of QoS there, and you could use DCB or another QoS solution.

RoCE requires DCB, even if it seems to work out of the box.

InfiniBand is another beast altogether.

DCB is a bit more cumbersome, but not just that: it’s often less controllable by, or less within reach of, a server/virtualization admin. But perhaps you don’t need it, so you can go with even cheaper switches.

QoS in MSFT land comes in different flavors; QoS via the Hyper-V switch is the easiest, it works, and it is convenient in more scenarios.
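For a rough feel of what weight-based minimum-bandwidth QoS on the Hyper-V switch guarantees, this Python sketch models the weights under full contention: each flow’s floor is its weight divided by the sum of all weights, times the link speed. It is a simplified assumption for illustration only; it ignores the default flow bucket and teaming details, and the vNIC names and weights are hypothetical.

```python
from typing import Dict


def min_bandwidth_gbps(weights: Dict[str, int], link_gbps: float) -> Dict[str, float]:
    """Simplified model: when the link is saturated, each flow is guaranteed
    its share of the total weight, applied to the link speed."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one flow needs a positive weight")
    return {name: link_gbps * w / total for name, w in weights.items()}


if __name__ == "__main__":
    # Hypothetical converged setup on a single 10Gbps uplink; names and weights are made up.
    weights = {"LiveMigration": 40, "Storage": 30, "VMs": 20, "Management": 10}
    for name, gbps in min_bandwidth_gbps(weights, 10).items():
        print(f"{name}: at least ~{gbps:.1f} Gbps when the link is saturated")
```

With weights summing to 100, live migration gets a floor of about 4 Gbps, storage 3, VMs 2 and management 1. These are minimums, not caps: when some flows are idle, the others can use the spare bandwidth.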
