As you might very well know by experience sometimes the System Center Virtual Machine Manager GUI and database get out of sync with reality about what’s going on for real on the cluster. I’ve blogged about this before in SCVMM 2008 R2 Phantom VM guests after Blue Screen and in System Center Virtual Machine Manager 2008 R2 Error 12711 & The cluster group could not be found (0×1395)

The Issue

Recently I had to trouble shoot the “Missing” status of some virtual machines on a Hyper-V cluster in SCVMM2008R2. Rebooting the hosts, guests, restarting agents, … none of the usual tricks for this behavior seemed to do the trick. The SCVMM2008R2 installation was also fully up to date with service packs & patches so there the issue dot originate.

Repair was greyed out and was no use. We could have removed the host from SCVMM en add it again. That resets the database entries for that host en can help fix the issues but still is not guaranteed to work and you don’t learn what the root cause or solution is. But none of our usual tricks worked.We could have deleted the VMs from the database as in but we didn’t have duplicates. Sure, this doesn’t delete any files or VM so it should show up again afterwards but why risk it not showing up again and having to go through fixing that.

The Cause

The VMs were in a “Missing” state after an attempted live migration during a manual patching cycle where the host was restarted the before the “start maintenance mode” had completed. A couple of those VMs where also Live Migrated at the same time with the Failover Cluster GUI. A bit of confusion al around so to speak nut luckily all VMs are fully operational an servicing applications & users so no crisis there.

The Fix

DISCLAIMER

I’m not telling you to use this method to fix this issue but you can at your own risk. As always please make sure you have good and verified backups of anything that’s of value to you ![]()

We hade to investigate. The good news was that all VMs are up an running, there is no downtime at the moment and the cluster seems perfectly happy ![]() .

.

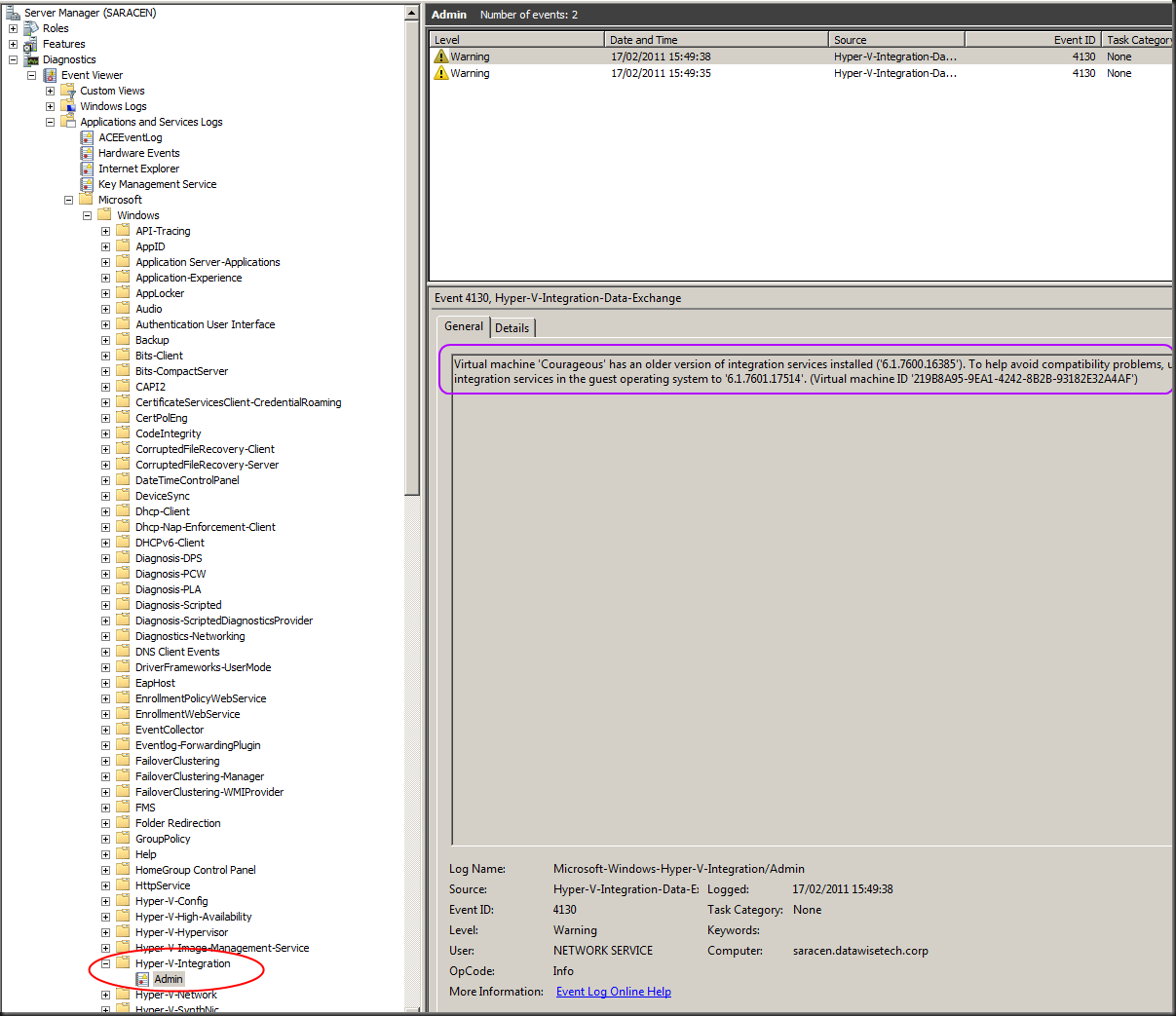

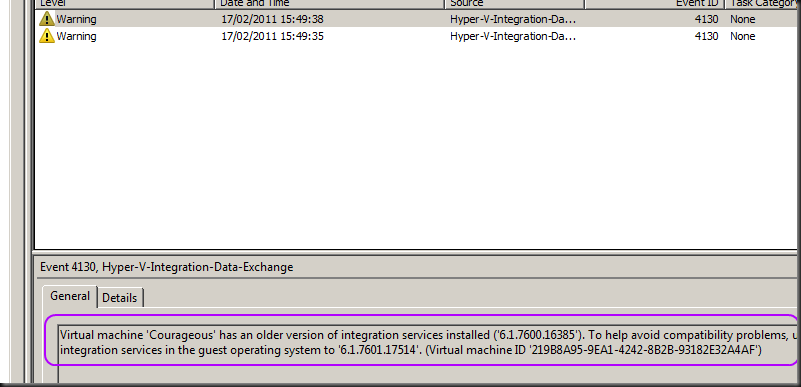

But there we see the first clue. The Virtual machines on the cluster are not running on the node SCVMM thinks they are running, hence the “Missing” status.

First of all let’s find out what host the VM is really running on in the cluster and see what SCVMM thinks on what host the VM is running. We run this little query against the VMM database. That gives us all hosts known to SCVMM.

SELECT [HostID],[ComputerName] FROM [VMM].[dbo].[tbl_ADHC_Host]

HostID ComputerName

559D0C84-59C3-4A0A-8446-3A6C43ABF618 node1.test.lab

540C2477-00C3-4388-9F1B-31DBADAD1D8C node2.test.lab

40B109A2-9E6B-47BC-8FB5-748688BFC0DF node3.test.lab

C2DA03CE-011D-45E3-A389-200A3E3ED62E node4.test.lab

6FA4ABBA-6599-4C7A-B632-80449DB3C54C node5.test.lab

C0CF479F-F742-4851-B340-ED33C25E2013 node6.test.lab

D2639875-603F-4F49-B498-F7183444120A node7.test.lab

CE119AAC-CF7E-4207-BE0B-03AAE0371165 node8.test.lab

AB07E1C2-B123-4AF5-922B-82F77C5885A2 node9.test.lab

(9 row(s) affected)

Voila en now the fun starts. SCVMM GUI tells us “MissingVM” is missing on node4.

We check this in the database to confirm:

SELECT Name, ObjectState, HostId FROM VMM.dbo.tbl_WLC_VObject WHERE Name = 'MissingVM' GO

Which is indeed node4

Name ObjectState HostId

——— — ————————————

node4 220 C2DA03CE-011D-45E3-A389-200A3E3ED62E

(1 row(s) affected)

In SCVMM we see that the moving of the VM failed. Between node 4 and node 6.

Now let’s take a look at what the cluster thinks … yes there it is running happily on node 6 and not on node 4. There’s the mismatch causing the issue.

So we need to fix this. We can Live Migrate the VM with the Failover Cluster GUI to the node SCVMM thinks the VM still resides on and see if that fixes it. If it does, great! You have to give SCVMM some time to detect all things and update its records.

But what to do if it doesn’t work out? We can get the HostId from the node where the VM is really running in the cluster, which we can see in the Failover Cluster GUI, from the query we ran above and than update the record:

UPDATE VMM.dbo.tbl_WLC_VObject SET HostId = 'C0CF479F-F742-4851-B340-ED33C25E2013' WHERE Name = 'MissingVM' GO

We then reset the ObjectState to 0 to get rid of the Missing status. It would do this automatically but it takes a while.

UPDATE VMM.dbo.tbl_WLC_VObject SET ObjectState = '0' WHERE Name = 'MissingVM' GO

After some patience & Refreshing all is well again and test with live migrations proves that all works again.

As I said before people get creative in how to achieve things due to inconsistencies, differences in functionality between Hyper-V Manager, Failover Cluster Manager and SCVMM 2008R2 can lead to some confusing situations. I’m happy to see that in Windows 8 the action you should perform using the Failover Cluster GUI or PowerShell are blocked in Hyper-V Manager. But SCVMM really needs a “reset” button that makes it check & validate that what it thinks is reality.